The AI Arms Race Heats Up: Llama 4, Gemini 2.5, GR00T Robots, and the 100× Energy Breakthrough

April 2026 is already the most consequential month in AI history: Meta's 10M-token Llama 4, Google's 1M-token Gemini 2.5 Pro, NVIDIA's humanoid robot foundation models, and a neuro-symbolic breakthrough that cuts AI energy use by up to 100× are reshaping the landscape.


If you thought 2025 was a packed year for AI releases, April 2026 has already blown the doors off. In the span of two weeks, Meta, Google, Anthropic, OpenAI, and NVIDIA all shipped major updates — while researchers quietly published results that could rewrite how we think about model efficiency and energy cost. Here’s what’s happening, why it matters, and what to watch next.

Meta’s Llama 4: 10 Million Tokens and Mixture-of-Experts Go Open Source

On April 5, Meta released the Llama 4 family, and the headline number is almost absurd: a 10-million-token context window on Llama 4 Scout. For reference, at the common rule of thumb of about 0.75 English words per token, that is roughly 7.5 million words of text, enough to fit a large codebase into a single prompt. The previous Llama ceiling, set by Llama 3.1, was 128K tokens.

Llama 4 comes in two efficient variants built around a Mixture-of-Experts (MoE) architecture:

- Llama 4 Scout — 17B active parameters, 16 experts, 109B total parameters, 10M context window

- Llama 4 Maverick — 17B active parameters, 128 experts, 400B total parameters, 1M context window, aimed at more demanding general-purpose and reasoning tasks

The MoE trick is important: although Scout has 109B total parameters, only 17B (about 16%) are activated per token, because a learned router sends each token to a small subset of experts. This means you get near-dense-model quality at a fraction of the inference compute cost. A concurrent Google paper reports that well-trained sparse MoE architectures can match dense model quality at 3× lower inference FLOPs per token, and Llama 4 is one of the most prominent open-weight demonstrations of this principle.
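The routing idea behind those numbers can be sketched in a few lines of NumPy. This is a generic top-k MoE layer under stated assumptions, not Llama 4’s actual architecture: the toy linear experts, the dimensions, and `top_k=2` are illustrative choices, and `moe_forward` is a hypothetical helper. The point is that every token touches only `top_k` of the `n_experts` expert weight matrices.

```python
import numpy as np


def moe_forward(x, router_w, expert_ws, top_k=2):
    """Route each token to its top_k experts and mix their outputs.

    x:         (tokens, d_model) input activations
    router_w:  (d_model, n_experts) router projection
    expert_ws: (n_experts, d_model, d_model) one linear map per expert (toy experts)
    """
    logits = x @ router_w                                  # (tokens, n_experts) router scores
    top_idx = np.argsort(logits, axis=-1)[:, -top_k:]      # indices of the top_k experts per token
    top_logits = np.take_along_axis(logits, top_idx, axis=-1)
    # Softmax over the selected experts only, so each token's gates sum to 1
    gates = np.exp(top_logits - top_logits.max(axis=-1, keepdims=True))
    gates /= gates.sum(axis=-1, keepdims=True)

    out = np.zeros_like(x)
    for t in range(x.shape[0]):
        for slot in range(top_k):
            e = top_idx[t, slot]
            # Only the selected experts are evaluated for this token
            out[t] += gates[t, slot] * (x[t] @ expert_ws[e])
    return out, top_idx, gates


rng = np.random.default_rng(0)
d_model, n_experts, tokens, top_k = 8, 16, 4, 2
x = rng.standard_normal((tokens, d_model))
router_w = rng.standard_normal((d_model, n_experts))
expert_ws = rng.standard_normal((n_experts, d_model, d_model))

out, top_idx, gates = moe_forward(x, router_w, expert_ws, top_k=top_k)
total = expert_ws.size                      # all expert weights
active = top_k * d_model * d_model          # expert weights touched per token
print(out.shape, f"active expert params per token: {active}/{total} = {active / total:.1%}")
```

Here 2 of 16 experts run per token, so 12.5% of the expert weights are active. Applying the same arithmetic to Scout’s published figures gives 17B / 109B ≈ 15.6% of parameters active per token (those totals also include shared, non-expert weights such as attention).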

Both models are natively multimodal (Meta’s first open-weight models built that way) and are available on Hugging Face and llama.com. The implications for open development are enormous: fine-tunable multimodal models with context windows of up to 10M tokens are now accessible to any researcher or developer.

Google Gemini 2.5 Pro: 1M Tokens and Deep Multimodal Reasoning

Google kicked off the month on April 1 with Gemini 2.5 Pro, its most capable reasoning model yet. The model pairs a 1 million token context window with native processing of text, images, audio, video, and entire code repositories in a single prompt.

What sets Gemini 2.5 Pro apart from earlier Gemini Pro versions is its built-in thinking capability: the model reasons through intermediate steps before delivering a final answer, similar in spirit to chain-of-thought prompting but built into the model’s inference loop. Google reports measurably better accuracy on complex multi-step tasks as a result.

On the coding front, Google has rolled out an updated Gemini 2.5 Pro with stronger code understanding: it handles ambiguous prompts better, and its Canvas integration can scaffold working web apps directly from natural-language descriptions.

Pricing currently starts at $1.25 per million input tokens and $10.00 per million output tokens (for prompts up to 200K tokens; longer prompts are billed at a higher tier), a sign of just how far inference costs have fallen compared to even 18 months ago.
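Because both reasoning and answers are billed per token, a quick budget check helps before sending repository-sized prompts. The helper below is a hypothetical sketch: the rates are parameters rather than a price quote (the figures in the example are illustrative), and it assumes thinking tokens are billed at the output rate, as Google describes for its Gemini 2.5 thinking models. Check current pricing before relying on any number.

```python
def estimate_cost_usd(input_tokens: int, output_tokens: int,
                      input_rate_per_m: float, output_rate_per_m: float,
                      thinking_tokens: int = 0) -> float:
    """Estimate request cost in USD from token counts and per-million-token rates.

    Assumption: thinking (reasoning) tokens are billed at the output rate.
    """
    billed_output = output_tokens + thinking_tokens
    return (input_tokens * input_rate_per_m + billed_output * output_rate_per_m) / 1_000_000


# Example: a 150K-token repository prompt, a 4K-token answer, and 8K thinking tokens,
# using illustrative rates of $1.25 (input) and $10.00 (output) per million tokens.
cost = estimate_cost_usd(150_000, 4_000, 1.25, 10.00, thinking_tokens=8_000)
print(f"${cost:.4f}")
```

In this example the thinking tokens cost almost as much as the entire 150K-token prompt, which is why output-heavy reasoning workloads deserve a separate line in any budget.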

Anthropic’s Claude Opus 4: The Coding Frontier

Anthropic’s Claude 4 family, released April 2, features Claude Opus 4 as its flagship, tuned specifically for extended autonomous coding sessions. It supports a 200K-token context window and scored 72.5% on SWE-bench Verified, a human-validated subset of the SWE-bench benchmark that measures whether a model can resolve real GitHub issues. Anthropic described that result as leading the benchmark at release.

Anthropic researchers also published new mechanistic interpretability findings this month: the discovery of “universal attention head circuits” — specific attention patterns that appear consistently across multiple model families and handle distinct reasoning sub-tasks. This kind of structural regularity is a significant step toward model editing and surgical fine-tuning, where you can modify a specific reasoning behavior without degrading others.

NVIDIA’s GR00T N2: Robots That Learn from World Models

NVIDIA’s National Robotics Week announcements this April were more than symbolic: they came with a wave of new open physical AI models. The centerpiece is GR00T N2, a next-generation humanoid robot foundation model based on the DreamZero research framework. NVIDIA reports that GR00T N2 helps robots succeed at new tasks in new environments more than twice as often as leading vision-language-action (VLA) models.

The key insight behind GR00T N2 is training on world models that capture physics and causality rather than raw video demonstrations. Robots trained this way need dramatically less real-world data to generalize to unseen environments — a major practical breakthrough for deployment at scale.

NVIDIA is also integrating Isaac GR00T and Isaac Lab into Hugging Face’s LeRobot framework, making it possible for open-source developers to fine-tune and evaluate humanoid robot policies using the same toolchain they already know. GR00T N1.7 is available in early access with commercial licensing, while GR00T N2 is being rolled out to research partners.

Separately, NVIDIA announced Ising on April 14 — the world’s first open AI models designed to accelerate the path to useful quantum computers, targeting combinatorial optimization problems that classical hardware struggles with.

The 100× Energy Breakthrough: Neuro-Symbolic AI Gets Real

Perhaps the most consequential research result this month came from a team combining neural networks with symbolic reasoning. Their work, covered by ScienceDaily and presented at ICLR 2026, describes an approach that reduces AI energy use by up to 100× while also improving accuracy.

The mechanism is elegant: instead of letting a neural network trial-and-error its way through every subtask, neuro-symbolic VLAs (vision-language-action models) inject explicit rules that constrain the search space during learning. The model learns faster, trains in far less time, and requires orders of magnitude less compute for certain classes of tasks.
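A toy illustration of why rule constraints cut down on trial and error follows. It is not the published method: the one-gripper world, the two rules in `allowed`, and the uniform-random "policy" in `run_episode` are all hypothetical. The sketch counts how many actions an agent must sample to complete a fixed number of valid steps, with and without a symbolic mask that removes rule-violating actions before sampling.

```python
import random

ACTIONS = ["pick", "place", "left", "right"]


def allowed(holding: bool, action: str) -> bool:
    """Hypothetical symbolic rules for a one-gripper world."""
    if action == "pick":
        return not holding          # can only pick with an empty gripper
    if action == "place":
        return holding              # can only place while holding something
    return True                     # moving is always allowed


def run_episode(n_valid_steps: int, use_rules: bool, seed: int = 0) -> int:
    """Return how many actions were sampled to complete n_valid_steps valid actions."""
    rng = random.Random(seed)
    holding, samples, done = False, 0, 0
    while done < n_valid_steps:
        # With rules, the symbolic layer masks out invalid actions before sampling
        candidates = [a for a in ACTIONS if allowed(holding, a)] if use_rules else ACTIONS
        action = rng.choice(candidates)
        samples += 1
        if not allowed(holding, action):
            continue                # wasted trial: the environment rejects the action
        if action in ("pick", "place"):
            holding = not holding
        done += 1
    return samples


unconstrained = run_episode(1_000, use_rules=False)
constrained = run_episode(1_000, use_rules=True)
print(f"samples needed: unconstrained={unconstrained}, rule-masked={constrained}")
```

In this toy world exactly one action in four is invalid in every state, so the unmasked agent wastes roughly a quarter of its samples while the masked agent wastes none. With large action spaces and long horizons the pruning compounds, which is the general intuition for why constraining the search space can save compute.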

This is significant beyond the headline number. The AI industry’s energy appetite has become a political and infrastructure issue — Microsoft just announced a $10 billion investment in Japan’s AI infrastructure, and data center power demand continues to strain grids globally. A principled 100× efficiency gain, even if limited to specific task domains initially, points toward a different scaling trajectory.

Complementing this, Google unveiled TurboQuant at ICLR 2026 — an algorithm that significantly reduces the KV cache memory overhead in transformer inference using two steps: PolarQuant (vector rotation) followed by Quantized Johnson-Lindenstrauss compression. Less memory per inference token means more requests served per GPU, and lower latency at scale.
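The two-stage idea can be sketched in NumPy. This is an illustrative approximation, not Google’s TurboQuant code: a random orthogonal rotation stands in for the rotation stage, a plain uniform scalar quantizer is swapped in for simplicity, and a 1-bit Quantized Johnson-Lindenstrauss (QJL) sketch of the quantization residual corrects the attention-score estimate. The helpers `random_rotation`, `uniform_quantize`, `compress_key`, and `approx_score` are hypothetical, and the dimensions, bit width, and sketch size are arbitrary.

```python
import numpy as np


def random_rotation(d: int, rng: np.random.Generator) -> np.ndarray:
    """Random orthogonal matrix via QR of a Gaussian matrix (sign-corrected)."""
    q, r = np.linalg.qr(rng.standard_normal((d, d)))
    return q * np.sign(np.diag(r))


def uniform_quantize(x: np.ndarray, bits: int):
    """Symmetric uniform scalar quantization; returns integer codes and a scale."""
    levels = 2 ** (bits - 1) - 1
    scale = float(np.max(np.abs(x))) / levels
    if scale == 0.0:
        scale = 1.0                       # avoid division by zero for an all-zero vector
    codes = np.round(x / scale).astype(np.int8)
    return codes, scale


def compress_key(k, R, S, bits=4):
    """Stage 1: rotate and quantize. Stage 2: 1-bit JL sketch of the residual."""
    k_rot = R @ k
    codes, scale = uniform_quantize(k_rot, bits)
    residual = k_rot - codes * scale
    return codes, scale, np.sign(S @ residual), float(np.linalg.norm(residual))


def approx_score(q, compressed, R, S):
    """Estimate the attention logit <q, k> from the compressed key."""
    codes, scale, signs, res_norm = compressed
    q_rot = R @ q                         # rotation preserves inner products
    coarse = q_rot @ (codes * scale)      # exact dot with the dequantized key
    m = S.shape[0]
    # QJL estimator: E[<s,q> sign(<s,r>)] = sqrt(2/pi) <q,r> / ||r|| for Gaussian s
    correction = np.sqrt(np.pi / 2) / m * res_norm * ((S @ q_rot) @ signs)
    return coarse + correction


rng = np.random.default_rng(0)
d, m = 64, 256
R = random_rotation(d, rng)
S = rng.standard_normal((m, d))
q, k = rng.standard_normal(d), rng.standard_normal(d)

compressed = compress_key(k, R, S, bits=4)
print(f"exact={q @ k:.3f}  approx={approx_score(q, compressed, R, S):.3f}")
```

The coarse term is exact for the quantized key, and the QJL term is an unbiased estimate of the part the quantizer discarded; raising `bits` or the sketch size `m` trades memory per cached key for a tighter estimate.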

Climate and Science AI: 90-Day Drought Prediction and 100-Year Climate Simulation in 25 Hours

Two applied science breakthroughs are worth flagging for their real-world stakes.

The US Geological Survey unveiled an AI system capable of predicting drought conditions with unprecedented accuracy up to 90 days in advance. Drought early warning at this horizon — three times farther out than most operational systems — gives farmers, water managers, and policymakers a meaningful planning window.

Meanwhile, Spherical DYffusion, developed by UC San Diego and the Allen Institute for AI, can project 100 years of climate patterns in just 25 hours — 25× faster than current numerical methods. Climate ensemble runs that previously required weeks of supercomputing time can now be iterated rapidly, enabling far more thorough scenario analysis for IPCC-style assessments.

What to Watch

The open/closed frontier gap is narrowing fast. Llama 4 Scout and Maverick, with multimodal context windows of up to 10M tokens, are competitive with models that cost hundreds of millions of dollars to develop just two years ago. As inference costs continue to fall and open weights proliferate, expect the next wave of AI value to shift from raw model capability to product integration, fine-tuning expertise, and domain-specific deployment.

On the efficiency front, neuro-symbolic hybrids and MoE routing algorithms are both pointing toward the same conclusion: brute-force scaling is not the only path. The next 6–12 months will likely see a wave of “smaller but smarter” models that challenge the assumption that frontier performance requires frontier compute.

For robotics, the integration of world models, physics-aware simulation (Isaac Sim 6.0, Isaac Lab 3.0), and open fine-tuning toolchains (LeRobot) is setting the stage for a Cambrian explosion in physical AI applications — not just in humanoids, but in manufacturing, logistics, and agricultural automation.

Related Reading

- Vision Learns to Think, Codex Goes Everywhere, and Open Weights Claim the Coding Crown

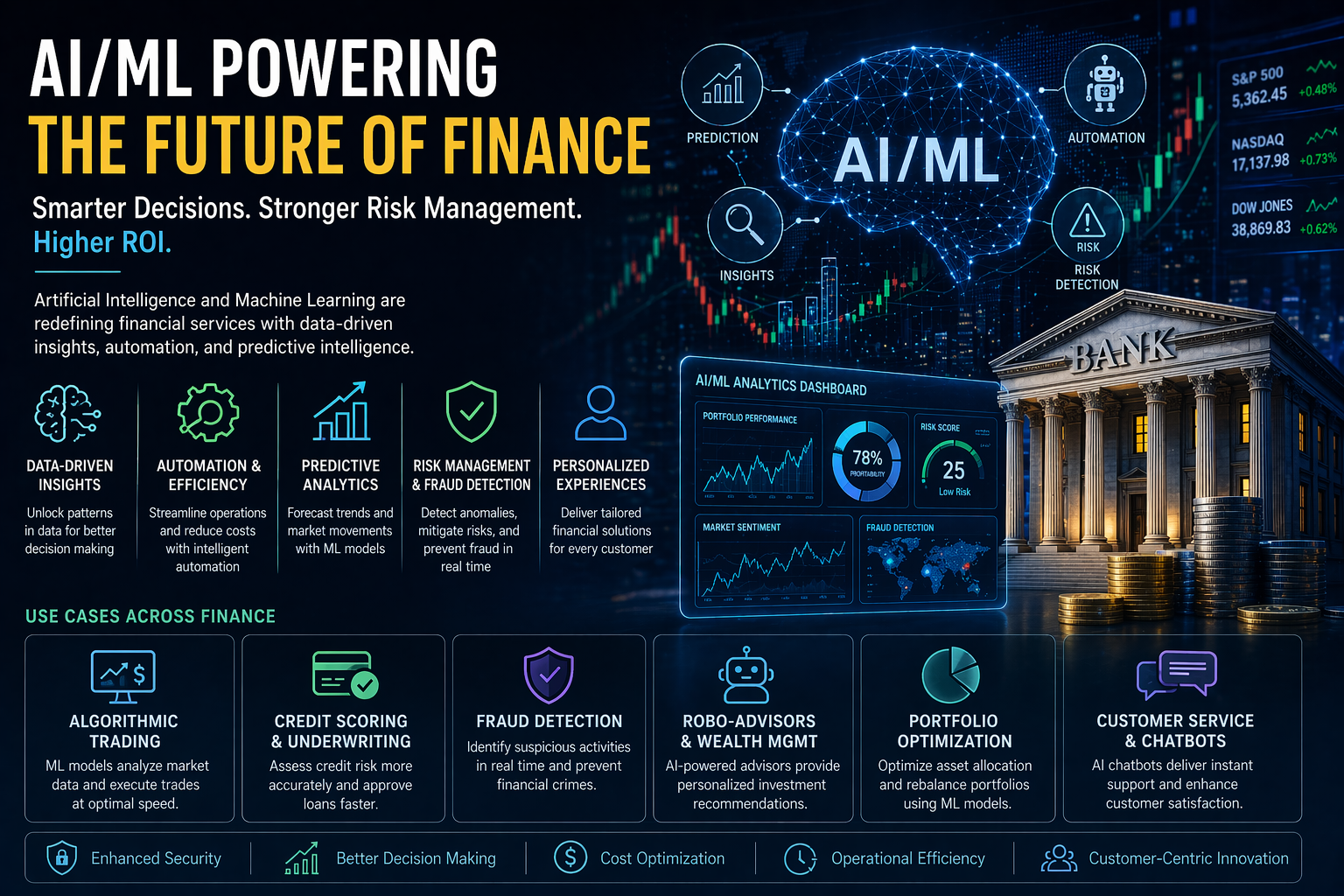

- Wall Street’s AI Arms Race: Agentic Finance, Foundation Models for Fraud, and 5,000 Layoffs — All at Once

- AI’s Trust Test: Surgical Robots, Broken Benchmarks, and the EU’s 100-Day Countdown

Sources

- Meta Llama 4: The beginning of a new era of natively multimodal AI

- Llama 4 Scout — 10M Token Context, Specs & Local Deployment

- Gemini 2.5 Pro on Vertex AI — Google Cloud Documentation

- AI breakthrough cuts energy use by 100× while boosting accuracy — ScienceDaily

- NVIDIA Releases New Physical AI Models as Global Partners Unveil Next-Generation Robots

- NVIDIA National Robotics Week 2026 — Latest Physical AI Research

- NVIDIA Launches Ising — World’s First Open AI Models for Quantum Optimization

- Best AI News April 2026: 5 Game-Changing Developments Ranked — Relvai

- Latest LLM Releases in April 2026 — Fazm Blog

- Gemini Models Explained: The Complete 2026 Guide — TeamAI

Enterprise AI Architecture

Want more enterprise AI architecture breakdowns?

Subscribe to SuperML.