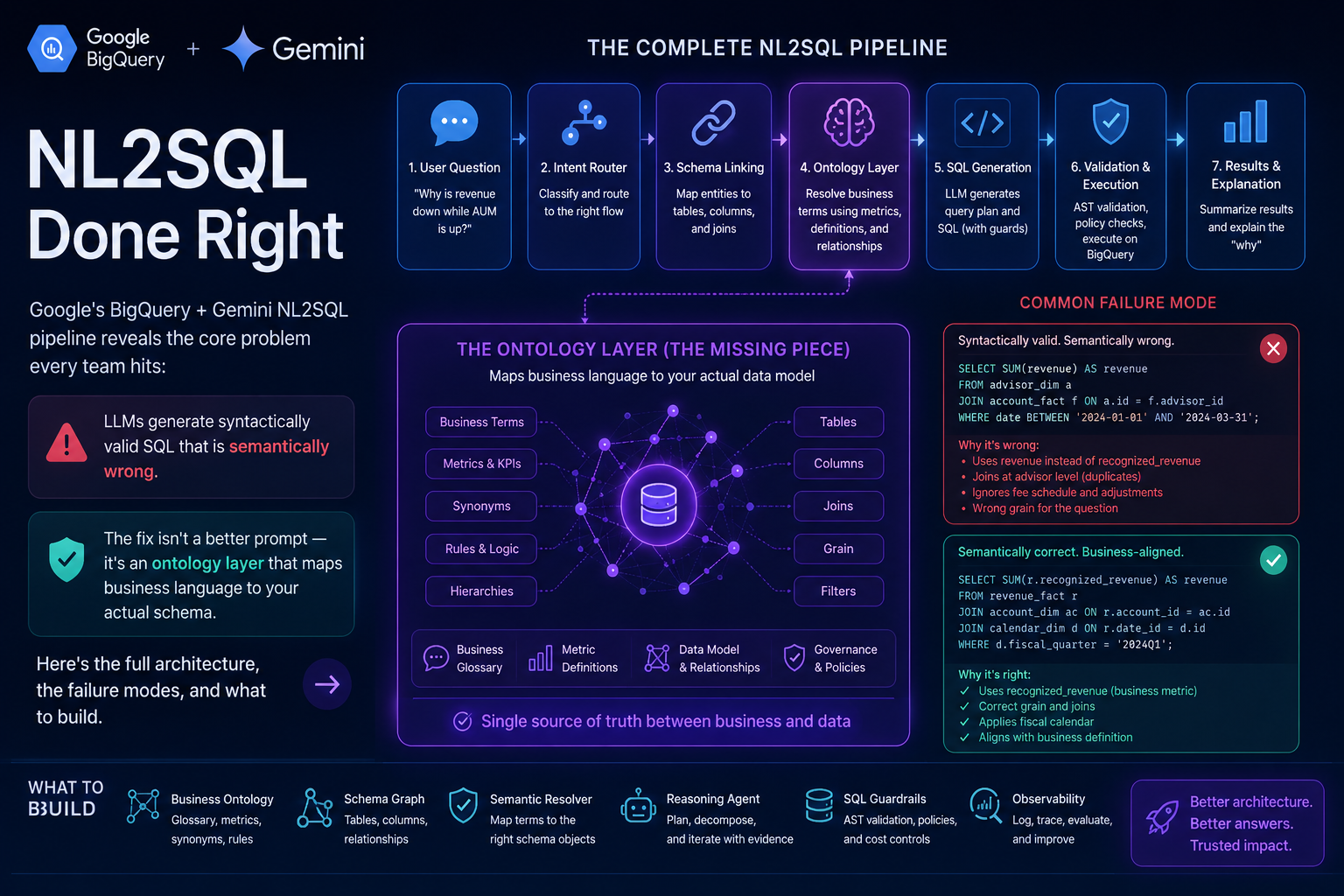

The NL-2-SQL Agent Trap: Why LLMs Need an Ontology Layer to Stop Hallucinating Your Database

Google's BigQuery + Gemini NL2SQL pipeline reveals the core problem every team hits: LLMs generate syntactically valid SQL that is semantically wrong. The fix isn't a better prompt — it's an ontology layer that maps business language to your actual schema. Here's the full architecture, the failure modes, and what to build.

Table of Contents

There is a class of AI failure that is uniquely insidious because it looks exactly like success.

A business analyst asks: “What was our revenue from enterprise customers last quarter?” An NL-to-SQL agent processes the question, writes a SQL query, executes it against the data warehouse, and returns a number. The number is formatted correctly. It ran without errors. The pipeline logs show a green checkmark. And the number is completely wrong — because the agent joined on the customers table but filtered by account_type = 'enterprise' instead of the correct segment_tier = 'ENT', pulled from gross_revenue instead of net_revenue_after_returns, and used the calendar quarter instead of the fiscal quarter your company runs on.

This is the NL-2-SQL agent trap. And it is not a problem you solve by writing a better prompt.

Google’s recent work on NL2SQL with BigQuery and Gemini is one of the clearest public articulations of what it actually takes to make natural language to SQL work reliably in enterprise data environments. The short version: you need an ontology layer — a semantic map that connects the language users speak to the schema your data actually lives in — before any of the LLM magic becomes trustworthy. Without it, you are essentially asking the model to guess your business logic from column names, and column names are one of the worst-named things in software engineering.

This post breaks down why that’s true, what an ontology layer actually is in this context, how Google’s architecture addresses the problem, and what the remaining failure modes look like even when you do it right.

Why LLMs Are Dangerous at SQL Generation (But Not for the Reason People Think)

The common assumption is that LLMs are bad at SQL because they make syntax errors or write queries for the wrong database dialect. This is almost entirely wrong. Modern LLMs are excellent at SQL syntax. Given a schema dump, they can write structurally valid queries for BigQuery, Postgres, Snowflake, or DuckDB with very high reliability. They understand CTEs, window functions, complex aggregations, nested subqueries. The syntax problem is largely solved.

The problem is semantic. And it has three roots.

First: LLMs don’t know what your business means by its words. “Revenue” means something specific at your company — it might be sum(line_item_amount) with a particular join to exclude returns, credits, and intra-company transactions. The model doesn’t know that. It will write what looks reasonable based on column name similarity, which is often wrong. “Customer” might mean accounts, end_users, organizations, or legal_entities depending on which team built which table, which product line you’re reporting on, and what quarter the schema was designed in.

Second: Enterprise schemas are enormous and inconsistently named. A mid-size company’s data warehouse easily has 300–1,000+ tables, many with overlapping semantic territory built by different teams at different times. A question about “sales performance” could plausibly draw from salesforce_opportunities, revenue_recognition_events, deal_pipeline_v2, closed_won_historical, and quarterly_targets — and the correct answer uses exactly two of those five, joined in a specific way, with a filter that isn’t derivable from the schema alone. The model, without grounding, will pick the most name-similar tables and write a query that runs but measures the wrong thing.

Third: Schema metadata is not the same as semantic metadata. A schema tells you that a column called acv exists in the contracts table and is of type FLOAT64. It does not tell you that ACV means Annual Contract Value, that it’s net of discounts but gross of professional services, that it should never be aggregated directly because it’s a point-in-time snapshot, and that the correct metric for revenue reporting is normalized_arr in the finance_metrics table instead. That knowledge lives in the heads of your data team, not in the database.

These three properties combine to create a failure mode that is almost impossible to catch automatically: the query runs, returns a plausible-looking number, and is wrong in a way that requires domain expertise to detect.

What an Ontology Layer Actually Is (And Isn’t)

In the context of NL-to-SQL, “ontology” gets used loosely. It’s worth being precise about what it means and what forms it can take.

In the strictest sense, an ontology is a formal specification of concepts, relationships, and constraints in a domain — the kind of thing you’d express in OWL/RDF, with class hierarchies, property restrictions, and inference rules. Academic NL2SQL systems sometimes use this form. In practice, enterprise NL2SQL teams almost never do, because maintaining a formal OWL ontology for a live data warehouse is a significant ongoing engineering investment that most organizations cannot sustain.

What actually works in production is a semantic layer — a structured mapping between business concepts and schema entities, maintained by the data team, queried at inference time by the NL2SQL agent. This semantic layer can take several forms along a spectrum of formality:

A business glossary at the lightweight end maps terms to definitions and canonical column references. “Revenue” → “sum of net_revenue from finance_revenue_events table, filtered to exclude transaction_type IN ('credit', 'reversal', 'internal')”. Simple, maintainable, powerful.

A metric catalog extends this to pre-defined aggregations: named metrics with their precise SQL definitions, dimensions they can be sliced by, and grain at which they’re defined. This is what tools like dbt metrics, Looker’s LookML, and Google’s Semantic Models formalize.

A full semantic ontology adds concept hierarchies, cross-concept relationships, and inference rules — “Enterprise Customer is a subclass of Customer, where segment_tier = ‘ENT’ in the accounts table” — which allows the agent to reason about whether a question about “enterprise customers” should use a filter, a join, or a subquery depending on how the schema is structured.

Google’s BigQuery + Gemini approach operates primarily at the metric catalog / semantic layer level, with Looker’s semantic modeling providing much of the ontological grounding. The key insight is that you don’t need the full formal machinery to get most of the benefit — a well-maintained business glossary plus a metric catalog covers the overwhelming majority of enterprise reporting queries.

The Five Failure Modes of Naive NL-to-SQL

Before going into the architecture, it’s worth naming the specific failure modes that an ontology layer is designed to address. Each one has a different mechanism and a different mitigation.

Schema explosion. When the full schema (300+ tables, 10,000+ columns) is included in the prompt, two things happen: the context window fills up and performance degrades, and the model performs poorly at identifying the relevant subset of tables for the query. Naive implementations try to squeeze the whole schema into the prompt and get inconsistent results. The ontology layer addresses this through semantic retrieval — embedding the question and doing similarity search over ontology nodes to surface only the relevant tables, columns, and metrics before prompting.

Term ambiguity. When a business term maps to multiple possible schema interpretations, the model picks the most syntactically plausible one, which is often not the semantically correct one. The ontology layer addresses this by explicitly disambiguating: the business glossary has one canonical definition per term, and the agent uses that definition rather than inferring from column names.

Aggregation mismatch. Metrics like revenue, churn rate, or customer lifetime value have specific aggregation logic that’s not inferrable from the schema. The model might write SUM(revenue) when the correct answer requires SUM(DISTINCT revenue) / COUNT(DISTINCT month) with specific fiscal calendar adjustments. The metric catalog addresses this by providing pre-defined aggregation SQL for named metrics, which the agent uses verbatim rather than generating.

Join hallucination. When the model needs to join tables, it often guesses join conditions based on column name similarity — joining on customer_id columns that happen to have the same name but actually reference different entity types in your schema. The ontology layer addresses this by encoding the correct join relationships explicitly, so the agent can look up “how do I join contracts to accounts?” and get the right answer.

Dialect drift. Even when the model knows it’s generating BigQuery SQL, subtle dialect differences — ARRAY_AGG vs STRING_AGG, DATE_DIFF argument order, QUALIFY clause availability — cause failures that are hard to reproduce and debug. The ontology layer can address this by encoding dialect-specific SQL patterns for common operations, which the agent retrieves and uses rather than generating from scratch.

How Google’s BigQuery + Gemini Architecture Addresses This

Google’s NL2SQL approach with BigQuery and Gemini is not a single model doing end-to-end translation. It’s a multi-step agent pipeline with explicit semantic grounding at each stage. The core components are:

Schema and semantic metadata extraction. Before any query is processed, the pipeline builds a semantic index over the BigQuery schema combined with Looker’s semantic model (LookML). This means columns aren’t just represented by their name and type — they’re represented by their business description, the metrics they participate in, the joins they enable, and the business concepts they encode. This is the ontology layer: a structured, queryable representation of what the data means, not just what it contains.

Semantic schema retrieval. When a user submits a question, the agent embeds the question and does similarity search over the semantic index to retrieve the most relevant tables, columns, metrics, and join paths. This is retrieval-augmented generation applied to schema — the model only sees the schema elements that are semantically relevant to the question, not the entire warehouse. This addresses schema explosion and improves the signal-to-noise ratio of the prompt.

Chain-of-thought SQL generation. With the retrieved semantic context injected into the prompt, Gemini generates SQL using a chain-of-thought approach: first reasoning about which entities the question involves, which metrics are requested, what filters apply, and what the correct join path is — then writing the SQL that implements that plan. This intermediate reasoning step catches many of the semantic errors that direct generation misses.

SQL validation and self-correction. The generated SQL is validated before execution — both syntactically (parse it, check BigQuery compatibility) and semantically (verify that the tables and columns used actually match the semantic context that was retrieved). If validation fails, the agent enters a self-correction loop: it takes the error, the original question, the semantic context, and the failed SQL, and generates a corrected version. Google’s pipeline supports multiple rounds of self-correction before falling back to a human-readable error.

Execution and result interpretation. After BigQuery executes the query, the result is optionally passed back through Gemini for natural language interpretation — translating a table of numbers into a prose explanation, flagging potential data quality issues, or suggesting follow-up questions. This last step is optional but significantly improves the user experience for non-technical analysts.

The Role of Looker’s Semantic Model

One piece of Google’s architecture that often gets underemphasized is Looker’s LookML as the ontological foundation. LookML is essentially a business-logic definition language for BigQuery schemas — it defines dimensions, measures, join relationships, and drill paths in a way that maps business concepts to SQL expressions. When Google’s NL2SQL pipeline uses Looker’s semantic model, it gets a pre-built, business-team-maintained ontology for free.

This is significant for two reasons. First, it means the ontology is owned by the data team, not the ML team — the people who know what “revenue” means maintain the canonical definition, and the NL2SQL agent consumes it. This is the right ownership model. ML teams are good at building agents; they’re not good at knowing that fiscal Q4 ends February 28th for this particular company.

Second, it provides a natural integration point with existing governance workflows. If your company already has a Looker semantic layer, you have the raw material for an NL2SQL ontology without building from scratch. The incremental investment is in the retrieval pipeline and the prompt engineering, not in the semantic modeling itself.

For teams without Looker, the equivalent is a dbt project with well-defined metrics and thorough documentation, or a standalone business glossary maintained in something like DataHub, Atlan, or Alation. The format matters less than the practice: having a team-maintained, structured mapping between business language and schema that the NL2SQL agent can query at inference time.

Building the Ontology for Your NL2SQL Agent: What to Include

If you’re building this from scratch, the minimum viable ontology for an NL2SQL agent has four components.

Business term definitions. A mapping from natural language terms to canonical SQL expressions. Every ambiguous term your users are likely to ask about should have an entry: what table it lives in, what column or expression computes it, what filters apply, and what it does NOT include (negative definitions are often more important than positive ones — “revenue excludes internal transfers” is critical, not obvious).

Metric definitions. Pre-defined SQL aggregations for named metrics, with grain specification and allowed dimensions. “Monthly Active Users” → specific SQL, grain = day, dimensions = [region, product_line, user_segment]. The agent retrieves these definitions and uses them verbatim rather than deriving them.

Entity relationship map. The correct join conditions between tables, expressed as bidirectional relationships with join type, key columns, and any filter conditions on the join. “accounts JOIN contracts ON accounts.id = contracts.account_id WHERE contracts.status = ‘active’” — not just the column names, the full correct join condition.

Canonical entity resolution. A mapping from natural language entity references (“enterprise customers”, “US customers”, “paying users”) to their correct SQL filter expressions. This is where a lot of NL2SQL agents fail — the question says “enterprise customers” and the correct filter is a combination of segment tier AND minimum contract value AND account status that’s nowhere in the column names.

The Production Gap: What Still Breaks Even With an Ontology

An ontology layer dramatically improves NL2SQL reliability, but it does not eliminate all failure modes. It’s worth being honest about what still breaks.

Ontology staleness. The semantic layer is only as good as its last update. If the data team adds a new table, renames a column, or changes the definition of a metric, the ontology needs to be updated in sync — or the NL2SQL agent will use the old definition and generate wrong queries. This is an ongoing maintenance burden that teams frequently underestimate. The ontology is not a one-time artifact; it’s a living document that needs to evolve with the schema.

Coverage gaps. No ontology covers every possible question. Users will ask things that fall outside the defined terms and metrics, and the agent will fall back to schema-based inference — with all the failure modes that entails. Monitoring which questions fall outside ontology coverage, and using that signal to prioritize ontology expansion, is a necessary operational loop.

Complex multi-hop reasoning. Some questions require reasoning that even a well-structured ontology can’t short-circuit. “Which sales reps had the highest net revenue growth in Q2 but the lowest customer satisfaction scores?” requires correlating across at least three different data domains (revenue, quotas, support tickets), and the correct join path may involve intermediate tables that the ontology’s entity relationship map doesn’t anticipate. For these queries, even Google’s pipeline can produce wrong answers.

Prompt injection via user input. Natural language questions from users can contain inadvertent (or deliberate) content that distorts the SQL generation — questions that reference tables that shouldn’t be accessible, filter conditions that bypass intended restrictions, or phrasing that causes the model to ignore ontology grounding in favor of direct schema inference. Sanitization and guardrails at the input layer are necessary, especially for enterprise deployments.

What to Build If You’re Doing This Today

The practical implication of all of this is that NL-to-SQL is an engineering problem, not a prompting problem. Getting it right in production requires three investments that most teams resist making until they’ve shipped a half-working prototype and seen the failure modes in production.

Invest in the semantic layer first, not last. Most teams build the NL2SQL pipeline first and bolt on the ontology after they hit the semantic failure modes. This is backwards. The semantic layer should be the foundation — even a lightweight business glossary document is enough to start. Build the retrieval pipeline around it from day one, and you’ll avoid rewriting the prompt architecture three times.

Instrument for semantic failures, not just execution failures. An NL2SQL agent that returns wrong answers without errors is worse than one that fails loudly. You need instrumentation that captures the question, the retrieved semantic context, the generated SQL, and the query result — and periodic human review to catch semantic failures that don’t surface as exceptions. Eval datasets of known question/answer pairs, with expected SQL, are the only reliable way to measure this.

Own the ontology with the data team, not the ML team. The knowledge about what your data means lives with the analysts and data engineers who built it. The NL2SQL ontology should be maintained in the same workflow as your data documentation — not in a separate AI system owned by the ML team. The closer the ontology is to the source of truth about your data semantics, the more likely it is to stay accurate over time.

Google’s BigQuery + Gemini architecture is one of the most sophisticated public implementations of this approach, and it still has gaps. That’s not a criticism — it’s an honest signal about the difficulty of the problem. Natural language is imprecise by design, and enterprise schemas are complicated by history. The ontology layer is the mechanism that mediates between the two, and building it well is the difference between an NL2SQL agent that your data team trusts and one that your data team disables after a month because it kept returning wrong revenue numbers to the CFO.

The technology stack — Gemini, BigQuery, Vertex AI, embeddings, semantic retrieval — is available to everyone now. The semantic layer is the hard part. Start there.