SR 26-2 Blew a Hole in Bank AI Governance. Now Every Model Risk Team Has to Fill It.

Federal regulators just rewrote SR 11-7 — and explicitly excluded generative AI and agentic AI from the new framework. Banks are now deploying their most powerful AI systems without a regulatory rulebook, and the internal governance vacuum is the real risk story.

Table of Contents

On April 17, 2026, the Federal Reserve, OCC, and FDIC did something unusual for banking regulators: they issued new guidance and explicitly told banks they were on their own for the most important part of it.

SR 26-2 — the interagency update to model risk management that formally retired SR 11-7 after fifteen years of service — is a substantive rewrite. It modernizes the framework, adds proportionality language for smaller institutions, and streamlines validation requirements for lower-risk models. But buried in the scoping section is a sentence that should be hanging on the wall of every model risk team in the country: “Generative AI and agentic AI models are novel and rapidly evolving. As such, they are not within the scope of this guidance.”

Read that again. The banks most aggressively deploying LLMs for credit underwriting, document intelligence, fraud detection, and compliance automation now have a regulatory framework that explicitly does not cover their highest-stakes AI systems. The same week that Anthropic and FIS announced a partnership to build AI-driven financial crime monitoring, and OpenAI partnered with PwC on treasury and finance operations, the agencies drew a clean line: SR 26-2 governs your traditional models. Your generative AI? Good luck.

This is not, to be clear, a free pass. It’s a governance vacuum, and those are always more dangerous than the things they replace.

What SR 26-2 Actually Changed

The original SR 11-7 (2011) was a landmark. It established the three-pillar framework — model development and implementation, model validation, and governance and controls — that became the global reference architecture for financial model risk. It was designed for a world of statistical credit models, pricing models, and stress-testing engines: systems where you could define inputs, bound outputs, validate accuracy against held-out data, and review annually.

SR 26-2 keeps the spirit but abandons the prescriptive checklist aesthetic. The new guidance is explicitly principles-based, tailored by institution size and model risk profile, and applies primarily to banking organizations with over $30 billion in total assets. It introduces better language around tiered validation, vendor model oversight, and ongoing monitoring. It also acknowledges, finally, that model risk isn’t just about prediction accuracy — it’s about the full lifecycle from development through decommission.

What it doesn’t do is touch generative AI. Agencies cited the “novel and rapidly evolving” nature of the technology as justification for exclusion. An RFI — a request for information — is forthcoming. That means the regulatory framework for LLMs in banking is, at best, 18 to 36 months away from becoming guidance, and likely longer from becoming enforceable standards.

In the interim, banks are deploying anyway. And that’s the actual story.

The Governance Gap Is Structural, Not Just Procedural

Here’s what makes the SR 26-2 exclusion a genuinely hard problem rather than a paperwork inconvenience: the governance tools that SR 11-7 created don’t map to how large language models actually behave.

Traditional model governance is built around a set of assumptions that don’t hold for generative AI. Models have well-defined inputs and outputs. Validation means testing on a held-out dataset and measuring accuracy. Ongoing monitoring means tracking model performance metrics over time. Champion-challenger testing compares production against challenger candidates on the same objective function. Annual model reviews confirm stability.

None of this works for an LLM generating credit memo drafts, summarizing regulatory filings, or routing customer complaints. The output space is essentially unbounded. “Accuracy” is not a single number. Drift looks completely different — it’s semantic drift, behavioral drift, emergent capability drift — and you can’t catch it by watching AUC curves. A model that was perfectly aligned with policy last quarter can violate that policy this quarter without any change to weights, simply because the distribution of prompts shifted.

Agentic AI makes it worse. When your LLM is also taking actions — querying databases, executing transactions, filing reports, updating customer records — the validation problem is no longer about the model in isolation. It’s about the full decision-action pipeline under real-world conditions. Pre-deployment validation can’t catch runtime authorization failures. It can’t test edge cases that only emerge through multi-step tool use. The champion-challenger framework assumes two models competing for the same production slot; an agent orchestrating four specialized subagents across three systems doesn’t fit that model at all.

The Federal Reserve’s own Vice Chair for Supervision, Michelle Bowman, acknowledged this directly in a speech at the Financial Stability Oversight Council’s AI Roundtable on May 1. She noted that AI evolution “requires flexible response from bank regulators” — which is diplomatically phrased, but translates to: the standard toolkit doesn’t work here yet, and we know it.

What Banks Are Actually Doing

In the absence of regulatory guidance, the industry is not sitting still. Several patterns are emerging across institutions that are serious about gen AI governance.

The most common approach is building a parallel governance framework alongside SR 26-2 compliance. This means standing up separate AI review committees, establishing use-case risk classification taxonomies (low/medium/high-risk gen AI applications), and creating custom validation standards for LLM-based systems. Some institutions are adapting existing model inventory systems to include gen AI with new metadata fields: model type, prompt templates in scope, tool access, human oversight checkpoints.

On the validation side, the leading practice is building red-teaming pipelines as a core governance artifact. Rather than holdout-dataset accuracy, teams are running adversarial prompt suites, behavioral consistency tests, and policy-alignment evaluations before production deployment. Some are borrowing from software engineering — treating model behavior like software behavior, with regression test suites that run on each new model version or significant prompt change.

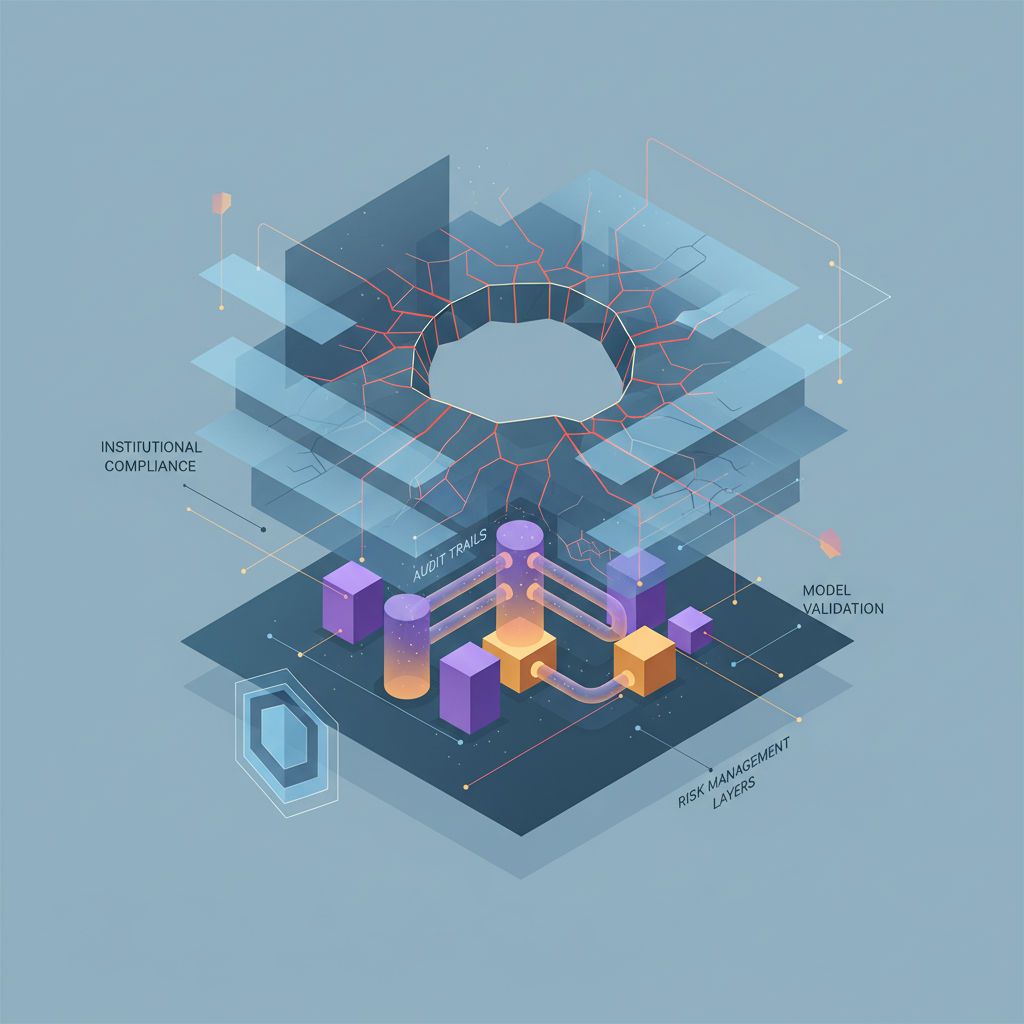

For agentic AI specifically, the governance problem is being tackled through runtime controls rather than pre-deployment validation. This means agent-level authorization layers (what tools can this agent call? under what conditions?), human-in-the-loop checkpoints for high-stakes actions, and audit logs that capture not just the model output but the full decision trace — inputs, retrieved context, tool calls, and final action. The goal is reconstructability: can you explain, after the fact, exactly why the agent did what it did? Regulators will eventually require this. Building it now is both good governance and good engineering.

The SuperML Take

The SR 26-2 scoping decision was the right call, even if the downstream consequences are uncomfortable. A regulatory framework written for stat models applied to agentic AI would have been worse than no framework — it would have created a false sense of compliance while missing every meaningful risk. The agencies deserve credit for recognizing that the old tools don’t fit the new problem.

But “out of regulatory scope” is not the same as “low risk,” and that distinction is going to cause real problems in the next 12 to 18 months. Banks are making large, visible bets on generative AI in credit, compliance, and financial crime. When something goes wrong — a discriminatory credit recommendation, a hallucinated regulatory filing, an agentic fraud system that flags the wrong pattern and causes customer harm — the first question examiners will ask is: what was your governance framework? The second question will be: why didn’t you have one?

The institutions that will be in the best position are the ones that don’t wait for the RFI. They’re building governance frameworks now, treating them as internal policy rather than regulatory compliance, and establishing audit trails that will hold up under scrutiny when the examiners finally do have something to say. This isn’t about being conservative — it’s about being defensible.

There’s also a competitive dimension that doesn’t get enough attention. Banks that build robust gen AI governance frameworks earn the internal credibility to move faster, not slower. When the business wants to deploy an LLM in a new use case, a mature governance process that can turn a risk assessment in two weeks is an accelerant. A vague governance vacuum where every new deployment triggers existential debates about what the model risk committee is supposed to do is the bottleneck. Build the framework now, and you get both risk management and deployment velocity.

What concerns me about the current landscape is the gap between what gets announced and what gets governed. Partnership announcements between AI vendors and banks are flowing fast — FIS/Anthropic, PwC/OpenAI, a dozen others in the pipeline. These partnerships are moving faster than the governance architecture underneath them. The vendors are incentivized to close deals. The banks are incentivized to show AI progress. The model risk teams are getting overruled by the pace of business ambition, and that’s exactly the configuration that produces embarrassing incidents at scale.

The 6-to-12 month view: expect the Fed’s RFI on generative AI governance to drop sometime in late 2026. When it does, the banks that have been running governed deployments will have concrete experience to contribute to the comment process — and will find that their existing frameworks translate reasonably well to whatever guidance eventually emerges. The banks that have been running ungoverned deployments will be scrambling to retrofit governance onto live systems under examiner scrutiny. That is a much worse place to be.

Architecture Impact

What changes in system design? Banks running LLMs in regulated workflows now need two parallel governance layers: SR 26-2-compliant model risk management for traditional models, and a separately designed framework for generative and agentic AI. This means bifurcated model inventories, separate validation standards, and distinct audit trail architectures. The challenge is that these systems often interact — a traditional credit model may feed inputs to an LLM that drafts the credit memo — so the governance boundary isn’t always clean at the system level.

What new failure mode appears? The critical failure mode is “governance theater” — banks building gen AI governance documentation that satisfies internal audit requirements without actually catching real risk. This manifests as: prompt templates in the model inventory but no behavioral regression testing, human-in-the-loop checkpoints on paper but no enforcement mechanism in the system, and use-case risk classifications that get approved at “low risk” because the reviewer doesn’t fully understand the deployment context. When an incident occurs, the documentation looks complete and the failure is invisible until after the fact.

What enterprise teams should evaluate:

- Model Risk / Validation teams: Build behavioral regression test suites for every LLM in production; establish what “validation” means for a system where output space is unbounded and accuracy is a policy question, not a metric.

- Legal / Compliance: Map current generative AI deployments against the SR 26-2 exclusion and document the internal governance framework that applies; this documentation will be the first thing examiners request.

- AI Engineering / MLOps: Instrument every agentic system with full decision-trace audit logging — inputs, retrieved context, tool calls, and final action — before the next examiner cycle, not after.

- Chief Risk Officers: Treat the absence of regulatory guidance as a governance mandate, not a governance holiday; institutions with mature internal frameworks will be in far better exam position when the RFI concludes.

Cost / latency / governance / reliability implications: Building a parallel gen AI governance framework requires meaningful upfront investment — dedicated governance tooling, red-teaming resources, audit logging infrastructure, and internal policy development. Rough estimates suggest a $1M–$5M uplift for large institutions building from scratch, though institutions that already invested in MLOps tooling can leverage significant overlap. Latency implications are primarily in the deployment pipeline, not inference: adding behavioral regression testing and governance review to each model or prompt-template change adds days to weeks to deployment cycles — manageable with the right tooling, but not zero. The reliability upside is substantial: institutions with runtime monitoring and behavioral regression testing detect distribution shifts and policy violations weeks earlier than those running unmonitored LLMs.

What to Watch

The Federal Reserve’s forthcoming RFI on generative and agentic AI governance is the primary signal to track. When it drops, the comment period will be the industry’s chance to shape what regulatory expectations actually look like — and the institutions that participated in pilot governance frameworks will have the most credible input. Watch also for OCC and FDIC examination memos on AI — informal examiner expectations often precede formal guidance by six to twelve months, and the exam findings on early gen AI deployments will tell you more about where regulatory expectations are headed than any speech.

On the vendor side, the emerging governance tooling market is worth watching carefully. ValidMind, Domino, Lumenova, and a dozen others are building SR 26-2-adjacent tooling that’s starting to extend into gen AI governance. The feature sets are nascent, but the tooling gap is real, and the category will consolidate fast once the regulatory expectations crystallize.

Finally, watch for the first high-profile gen AI compliance incident at a major bank. It hasn’t happened publicly yet, but the combination of aggressive deployment timelines, absent governance frameworks, and increasing LLM use in regulated decision-making is a configuration that produces incidents. When one surfaces, it will define the regulatory response far more than any RFI.

Sources

- SR 26-2: Revised Guidance on Model Risk Management — Federal Reserve

- OCC Issues Updated Model Risk Management Guidance

- Agencies Overhaul Model Risk Management Guidance for Banks: Here’s What Changed — Orrick

- SR 26-2: What Every Bank Needs to Know — ValidMind

- Fed and OCC Overhaul Bank Model Risk Rules but Leave AI Uncharted — AI2Work

- Speech by Vice Chair for Supervision Bowman: Artificial Intelligence in the Financial System — Federal Reserve, May 1, 2026

- Bowman: AI Evolution Requires Flexible Response from Bank Regulators — ABA Banking Journal

- Treasury Guidance Brings Urgency to AI Governance — Grant Thornton

- MAS Collaborates with Banking Industry to Harness AI in Fight Against Financial Crime — MAS, May 2026

- SR 11–7 to SR 26–2: From Checklist to Judgment in Model Risk Management — Medium

Enterprise AI Architecture

Want more enterprise AI architecture breakdowns?

Subscribe to SuperML.