OpenAI and Anthropic Adopted the Palantir Playbook. Now Enterprise Architecture Teams Need a Counter-Move.

When the two largest model labs simultaneously launched forward-deployed engineering ventures backed by Wall Street capital, they didn't just change how AI gets sold — they changed who owns your production AI architecture. Here's what that means for engineering teams trying to stay in control.

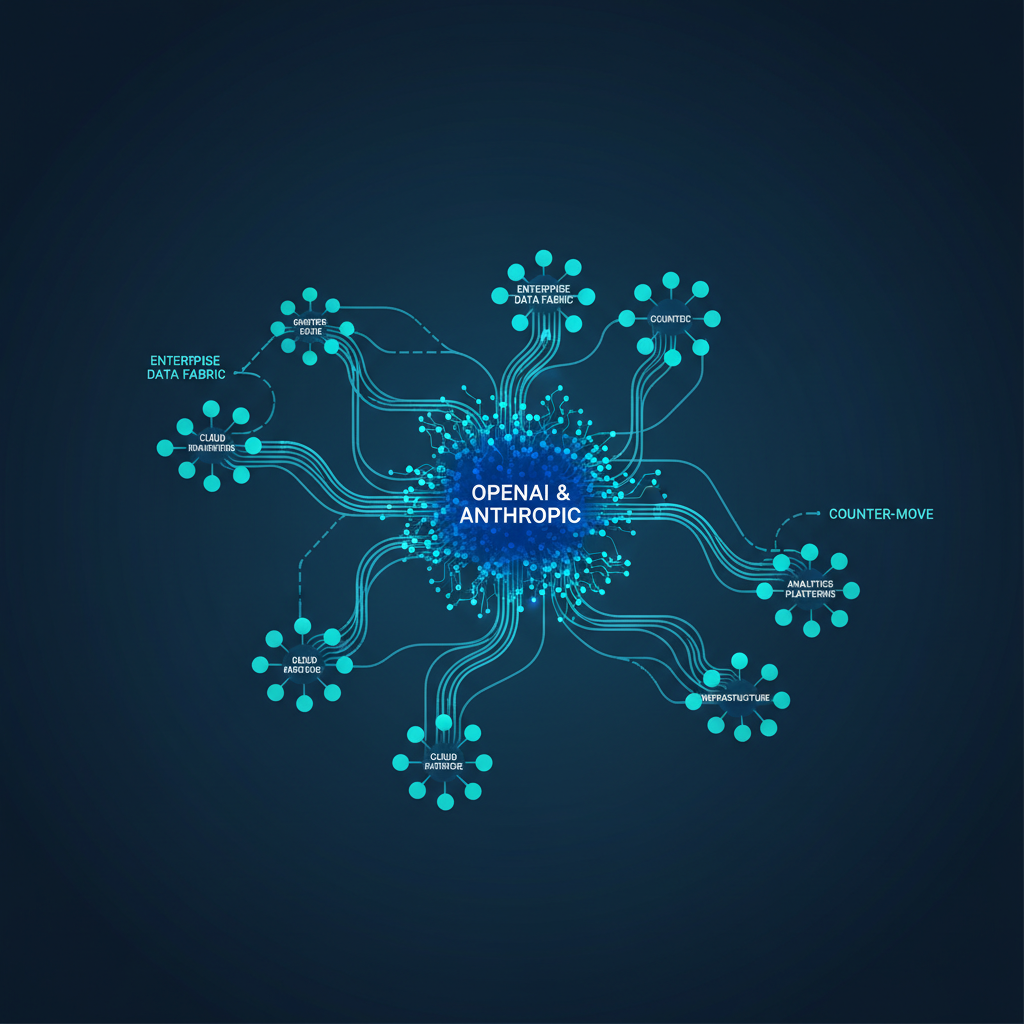

On Monday, May 4, 2026, two things happened in enterprise AI that almost nobody in the architecture community has fully internalized yet. Anthropic closed a $1.5 billion joint venture with Blackstone, Hellman & Friedman, and Goldman Sachs to deliver hands-on AI implementation services to enterprise clients. OpenAI announced a competing entity, The Development Company, raising $4 billion from 19 institutional investors at a $10 billion valuation. Same week. No investor overlap. The two largest model labs carved Wall Street’s enterprise AI services market into two non-overlapping spheres of influence in about 48 hours.

This isn’t a sales and marketing story. It’s an architecture story, and most engineering teams haven’t processed it that way yet.

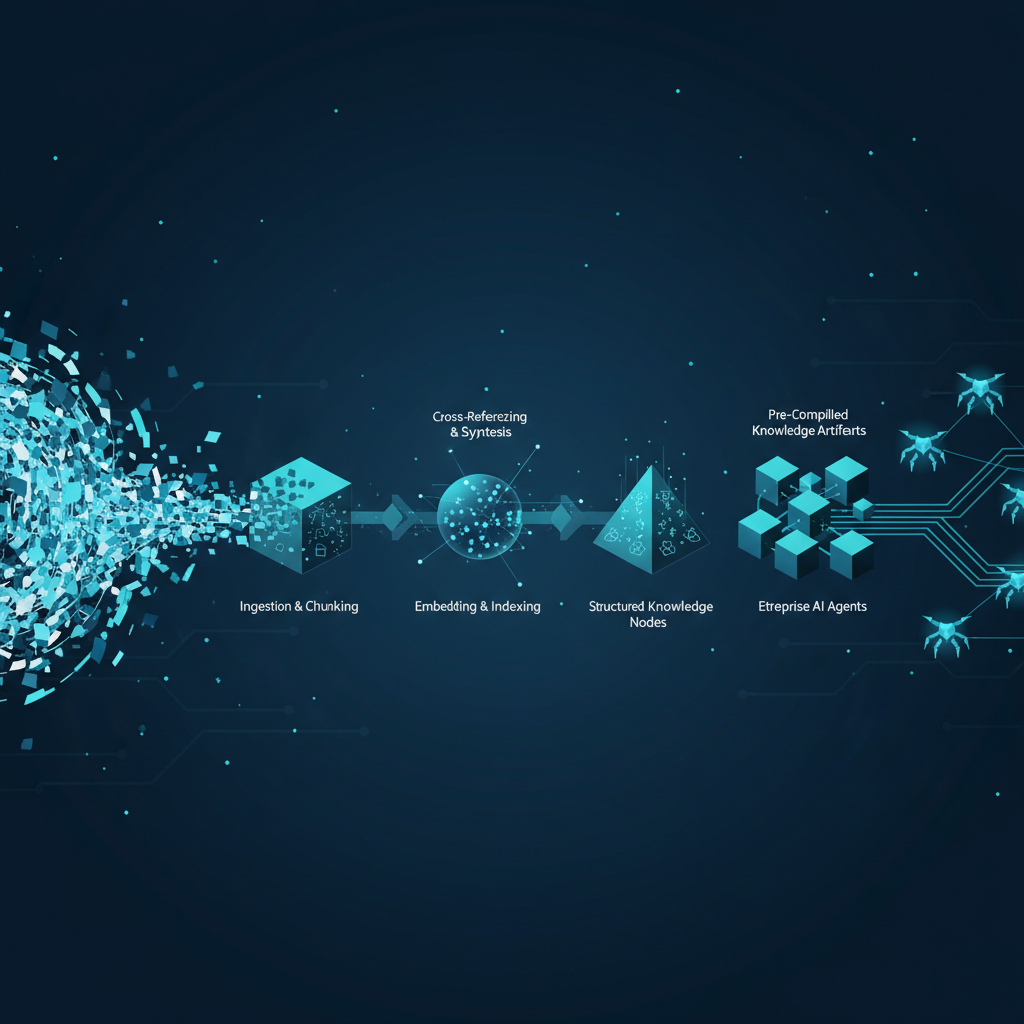

Both companies are explicitly adopting the forward-deployed engineer (FDE) model that Palantir built its first decade on. The vendor embeds its engineers inside the customer’s organization. They build the integrations, the pipelines, the agent workflows, and the evaluation harnesses. They deliver production artifacts: MCP servers, subagent topologies, skill definitions, connector configurations. The client gets a working system. The vendor gets something more valuable than a contract: architectural dependency that compounds over time.

Anthropic’s financial services launch the following day made this concrete. The company shipped roughly ten pre-built AI agents for finance workflows, each delivered as a “reference architecture”: a complete template with connectors, subagent coordination logic, skill definitions, and deployment configuration. FIS and Moody’s were named as design partners, alongside several unnamed tier-one banks. Claude Opus 4.7, tuned specifically for financial reasoning tasks, powers the stack. Microsoft 365 integration is included. The whole package is designed to reach production in weeks, not quarters.

That “reference architecture” framing is worth pausing on. When Anthropic ships you a reference architecture, they’re not just giving you a starting point — they’re giving you a production system scaffolded around their model’s specific behavioral characteristics, their MCP tool definitions, their connector protocol versions, and their evaluation criteria. The architecture encodes assumptions about how Claude responds to prompts, which tool calls it prefers, how it handles multi-step task delegation, and where it needs human-in-the-loop intervention. None of those assumptions transfer cleanly to a different model provider. You don’t migrate off that architecture the way you migrate off a database. You rewrite it.

What Forward-Deployed AI Actually Means in Production

Palantir’s FDE model worked because its core products, Gotham and Foundry, were genuinely difficult to implement without institutional knowledge. FDEs weren’t salespeople with technical skills; they were the implementation layer for a platform complex enough to require embedded expertise. The product and the service were inseparable. Clients that tried to go it alone typically struggled, because the platform’s data ontology and workflow engine required pattern knowledge that Palantir deliberately kept inside its deployment teams.

Anthropic and OpenAI are replicating this structure at a moment when enterprise AI is genuinely difficult to implement well. The gap between an agent that demos in a sandbox and an agent that handles live production data at scale is enormous — and most internal enterprise AI teams haven’t closed that gap. FDEs with deep model-specific knowledge can close it faster. That’s the value proposition, and it’s real.

But the production consequences are what matter. When an Anthropic FDE builds your document intelligence workflow, they’re making hundreds of micro-decisions about architecture: how context is assembled before each Claude invocation, what tool schemas are exposed to the agent, how retrieval is structured, where output validation happens, how errors are surfaced to human reviewers. These decisions get encoded into your production codebase. Six months later, the FDE rotation changes. The new team members consult Anthropic’s internal documentation, which is organized around Claude’s architecture. Your system is now maintained by people whose expertise is Claude-specific. That’s not an accident — that’s the model.

Gartner’s prediction, quoted in several post-announcement analyses, is striking: by 2028, 70% of enterprises will be “forced to abandon agentic AI solutions from FDE-led engagements because of high vendor costs and lack of internal skills to evolve them independently.” The failure mode isn’t a security breach or a model misbehavior event. It’s a quiet inability to adapt — a production system that works but that your internal team can’t modify without re-engaging the vendor. You’ve traded operational flexibility for implementation speed.

The Reference Architecture Trap

The pre-built agent pattern deserves specific attention because it’s where architectural lock-in happens fastest. Anthropic’s ten financial service agents are positioned as starting points — configurable templates that enterprises adapt to their specific workflows. In practice, reference architectures in enterprise software rarely stay “reference.” They become the production system. The integration team adapts them to fit the real data, the legal and compliance team adds constraint logic, the ops team adds monitoring hooks. Eighteen months later, you have a heavily modified version of Anthropic’s template that depends on:

- Claude’s specific tool-calling behavior under ambiguous instructions

- Anthropic’s MCP connector definitions for the data sources in scope

- The skill definitions and subagent delegation patterns in the template

- The evaluation rubrics embedded in the template’s quality checks

- The observability hooks that send telemetry to whatever monitoring stack Anthropic’s FDEs chose

Each of these is a Claude-specific implementation decision. The system works because Claude behaves predictably within these constraints, and that predictability does not automatically carry over to another model. A future Gemini or GPT-5.x integration requires re-validating every one of those behavioral assumptions.

This matters because the model landscape is not stable. The economics of running large models at enterprise scale are still evolving. The model that makes sense for a workflow today may not be the right economic choice in 18 months. Organizations that own their agent architecture can adapt. Organizations whose agent architecture was built by FDEs around a specific model’s behavioral characteristics often can’t — at least not without re-engagement and non-trivial cost.

The Counter-Move

Enterprise architecture teams that engage with either of these ventures need a specific set of contractual and technical commitments before any FDE engagement goes to production. These aren’t vendor negotiation tactics — they’re architectural hygiene requirements.

First, every FDE-delivered artifact needs to be owned in full by the enterprise from day one. Source code, MCP server definitions, tool schemas, evaluation datasets, monitoring dashboards — all of it under the enterprise’s version control, not the vendor’s. The FDE model often defaults to vendor-hosted artifact management as a “convenience.” Don’t accept it.

Second, the architecture review process for FDE-built systems needs to include explicit abstraction layer requirements. The model invocation layer should be isolated behind an interface that can swap providers. This doesn’t mean building a perfect model-agnostic abstraction (that’s usually a waste of engineering time in early-stage systems), but it means not letting Claude-specific prompt formatting, tool-calling conventions, or context assembly patterns bleed into business logic.
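To make the second requirement concrete, here is a minimal Python sketch, assuming a plain `typing.Protocol` as the interface between business logic and model invocation. `ModelRequest`, `ModelClient`, the two fake adapters, and `summarize_filing` are hypothetical names; the adapters return canned strings instead of calling any real vendor SDK, so the point is the shape of the seam, not a working integration.

```python
from dataclasses import dataclass
from typing import Protocol


@dataclass(frozen=True)
class ModelRequest:
    """Provider-neutral request: business logic only ever builds this."""
    system: str
    user: str
    max_output_tokens: int = 1024


@dataclass(frozen=True)
class ModelResponse:
    text: str
    provider: str


class ModelClient(Protocol):
    """The single seam between business logic and any model vendor."""
    def complete(self, request: ModelRequest) -> ModelResponse: ...


class FakeClaudeAdapter:
    """Hypothetical adapter. A real one would translate ModelRequest into the
    vendor's request format and normalize the reply; prompt formatting and
    tool-calling conventions stay inside this class."""
    def complete(self, request: ModelRequest) -> ModelResponse:
        return ModelResponse(text=f"[claude] {request.user}", provider="claude")


class FakeOpenWeightAdapter:
    """Hypothetical adapter for a self-hosted open-weight model."""
    def complete(self, request: ModelRequest) -> ModelResponse:
        return ModelResponse(text=f"[open-weight] {request.user}", provider="open-weight")


def summarize_filing(client: ModelClient, filing_text: str) -> str:
    """Business logic: knows nothing about which provider sits behind `client`."""
    request = ModelRequest(
        system="Summarize regulatory filings for a credit analyst.",
        user=filing_text,
    )
    return client.complete(request).text


# Provider registry; swapping vendors means adding an adapter here.
ADAPTERS: dict[str, ModelClient] = {
    "claude": FakeClaudeAdapter(),
    "open-weight": FakeOpenWeightAdapter(),
}
```

Switching providers then means calling `summarize_filing(ADAPTERS["open-weight"], text)` instead of `ADAPTERS["claude"]`, with no change to `summarize_filing` itself. The layer is deliberately thin: it keeps vendor-specific conventions out of business logic without attempting a complete model-agnostic abstraction.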

Third, internal engineers need to be in the room for every FDE implementation session, not as observers but as co-implementers. The knowledge transfer problem behind Gartner’s prediction is real, and it only gets solved if your team builds institutional memory about the system’s architecture in parallel with the FDE team building the system itself. FDE engagements that treat internal engineers as an audience rather than as collaborators systematically create dependency.

Fourth, plan the model provider diversification strategy before you need it. If you’re running production agentic workflows on Claude via an Anthropic FDE engagement, you should know in advance which workflows are candidates for OpenAI models, which are candidates for open-weight models like Llama or Mistral, and which are appropriately locked to Claude because of model-specific capabilities that matter for the task. That analysis is much easier to do before lock-in than after.
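This analysis is easier to enforce when it lives in a reviewable artifact rather than a slide. A minimal Python sketch, assuming a simple in-repo register: `Portability`, `WorkflowPlan`, `summarize`, and the sample workflows are hypothetical, and the only rule encoded is the one implied above, that a workflow may be marked as locked to Claude only with a written, task-specific reason.

```python
from collections import Counter
from dataclasses import dataclass
from enum import Enum


class Portability(Enum):
    """The three buckets named in the text."""
    OPENAI_CANDIDATE = "candidate for OpenAI models"
    OPEN_WEIGHT_CANDIDATE = "candidate for open-weight models (e.g. Llama, Mistral)"
    CLAUDE_LOCKED = "appropriately locked to Claude"


@dataclass(frozen=True)
class WorkflowPlan:
    name: str
    tier: Portability
    lock_in_reason: str = ""  # must be filled in for CLAUDE_LOCKED

    def __post_init__(self) -> None:
        # Lock-in is allowed, but only with a documented, task-specific justification.
        if self.tier is Portability.CLAUDE_LOCKED and not self.lock_in_reason.strip():
            raise ValueError(f"{self.name}: Claude lock-in needs a documented reason")


def summarize(plans: list[WorkflowPlan]) -> dict[str, int]:
    """Count workflows per bucket, including buckets with zero workflows."""
    counts = Counter(plan.tier for plan in plans)
    return {tier.name: counts[tier] for tier in Portability}


# Hypothetical workflows, for illustration only.
PLANS = [
    WorkflowPlan("earnings-call-summary", Portability.OPENAI_CANDIDATE),
    WorkflowPlan("kyc-document-triage", Portability.OPEN_WEIGHT_CANDIDATE),
    WorkflowPlan(
        "credit-memo-drafting",
        Portability.CLAUDE_LOCKED,
        lock_in_reason="behavior validated only on Claude; re-validation not yet budgeted",
    ),
]
```

Because an unjustified `CLAUDE_LOCKED` entry fails at construction time, the register can run in CI and block a merge that quietly locks a new workflow to one provider.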

The SuperML Take

Let’s be direct about what happened this week: the two largest model labs simultaneously decided that selling API access isn’t enough. They’re moving up the value chain into professional services, implementation, and operational support. That’s rational from a business perspective: the margin on services is higher, the switching cost for clients is much higher, and the distribution channel (investor-portfolio company access) is cleverly structured. OpenAI’s $10B valuation of The Development Company suggests the market agrees this is a good business.

What it means for enterprise AI teams is harder to process but important to name. You are now being sold a service that looks like infrastructure but behaves like professional services lock-in. The “reference architectures” and pre-built agents are excellent products in the short term — they solve real implementation problems, they encode domain expertise that your internal team doesn’t have yet, and they get production systems running in weeks instead of quarters. That’s genuinely valuable.

The problem is the 18-month view. The organizations that will retain architectural control of their AI systems are the ones that treat FDE engagements as knowledge transfer programs, not outsourcing programs. They staff them with internal engineers who are learning the system as it’s built. They insist on artifact ownership from day one. They build the abstraction layers that allow model substitution even when the current model is performing well. They run internal evaluations in parallel with vendor-provided evaluations, so they understand their system’s behavior independent of the vendor’s framing.
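Running internal evaluations in parallel can start very small. The Python sketch below assumes each system under test can be wrapped as a function from prompt to output text; `EvalCase`, `pass_rate`, `compare`, and the two fake systems are hypothetical. What matters is that the pass/fail criteria live in the enterprise’s own code, so the same cases score the FDE-built system and any alternative provider on identical terms.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass(frozen=True)
class EvalCase:
    prompt: str
    check: Callable[[str], bool]  # enterprise-owned pass/fail criterion


def pass_rate(generate: Callable[[str], str], cases: list[EvalCase]) -> float:
    """Fraction of cases whose output satisfies the enterprise's own check."""
    if not cases:
        raise ValueError("need at least one eval case")
    passed = sum(1 for case in cases if case.check(generate(case.prompt)))
    return passed / len(cases)


def compare(systems: dict[str, Callable[[str], str]], cases: list[EvalCase]) -> dict[str, float]:
    """Score every system on the identical case set."""
    return {name: pass_rate(generate, cases) for name, generate in systems.items()}


# Hypothetical stand-ins for the FDE-built system and an alternative provider.
def current_system(prompt: str) -> str:
    return "ESCALATE" if "over limit" in prompt else "APPROVE"


def candidate_system(prompt: str) -> str:
    return "APPROVE"


CASES = [
    EvalCase("wire transfer over limit", lambda out: out == "ESCALATE"),
    EvalCase("routine payroll batch", lambda out: out == "APPROVE"),
]
```

Here `compare({"current": current_system, "candidate": candidate_system}, CASES)` scores the current system at 1.0 and the candidate at 0.5, a baseline produced without relying on the vendor’s evaluation framing.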

The organizations that won’t retain architectural control are the ones that say “we don’t have the internal capacity right now, so we’ll let the FDE team own it and we’ll build capacity later.” Later almost never comes. By the time internal capacity exists, the system is in production, it’s working, there’s no budget for a rebuild, and the vendor is the only team that knows how it works. That’s not an AI problem — that’s a decades-old enterprise software problem, and it’s playing out again at scale in agentic AI.

The Palantir playbook is real, it’s proven, and it works. OpenAI and Anthropic adopting it isn’t surprising. What would be surprising is enterprise architecture teams failing to learn from the organizations that have navigated this model for 20 years, including the ones that learned the hard way how much it costs to exit a deeply embedded vendor relationship.

One more thing worth noting: the non-overlapping investor structure between the two ventures isn’t coincidence. It’s cartel logic, friendly edition. OpenAI’s 19 investors and Anthropic’s three founding partners have no overlap. The portfolio companies in each investor’s orbit will encounter only one vendor pushing the FDE model. That reduces competitive pressure during the sales cycle and reduces the client’s ability to negotiate based on competitive alternatives. If you’re a portfolio company of any investor named in either venture, you should assume that the AI services pitch is coming and that the terms will be less favorable than what an open-market negotiation would produce. Get independent architectural advice before that conversation starts.

Architecture Impact

What changes in system design? Enterprise AI architectures built around FDE-delivered agent systems encode model-specific behavioral assumptions at every layer: prompt formatting, tool schema design, context assembly, error handling, and evaluation criteria all reflect the deploying vendor’s model. This fundamentally changes the risk profile of multi-year AI architecture commitments — the dependency is not just on model access but on model-specific behavioral stability across versions.

What new failure mode appears? The new failure mode is architectural immobility: production agentic systems that work correctly but cannot be modified, extended, or migrated without re-engaging the original vendor. This failure mode does not trigger alerts or produce error logs — it becomes visible only when the enterprise attempts to evolve the system and discovers that the internal team lacks the architecture knowledge to do so independently. By that point, switching costs are prohibitive.

What enterprise teams should evaluate:

- Platform engineering teams: Audit every FDE-delivered artifact for Claude-specific behavioral assumptions in prompt templates, tool definitions, and evaluation rubrics — document which components would require rewriting for a different model provider.

- AI architecture leads: Require explicit model abstraction layer specifications before any FDE engagement sign-off; the interface between business logic and model invocation must be formally defined and version-controlled.

- Procurement and legal teams: Negotiate full artifact ownership, source escrow provisions, and internal skills transfer milestones as contractual deliverables, not aspirational outcomes, before FDE engagement begins.

- Internal ML/AI engineering teams: Mandate co-implementation participation (not observer status) in all FDE sessions — knowledge transfer only happens through doing, not watching.
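The platform-engineering audit can begin as a crude text scan before anyone reviews behavior in depth. This Python sketch assumes artifacts are loaded as a mapping from file path to contents; `PROVIDER_MARKERS` and `audit` are hypothetical, and the regexes (vendor names, model identifiers, XML-style prompt tags) are illustrative heuristics, not a complete definition of Claude-specific assumptions.

```python
import re

# Illustrative patterns only; each team should maintain its own list based on
# what its FDE-delivered artifacts actually contain.
PROVIDER_MARKERS = {
    "vendor_name": re.compile(r"\b(claude|anthropic)\b", re.IGNORECASE),
    "model_id": re.compile(r"\bclaude-[a-z0-9.-]+", re.IGNORECASE),
    "xml_prompt_tags": re.compile(r"</?(thinking|instructions|document)>"),
}


def audit(artifacts: dict[str, str]) -> dict[str, list[str]]:
    """Map each artifact path to the marker labels found in it (clean files omitted)."""
    findings: dict[str, list[str]] = {}
    for path, text in sorted(artifacts.items()):
        hits = sorted(label for label, pattern in PROVIDER_MARKERS.items() if pattern.search(text))
        if hits:
            findings[path] = hits
    return findings


# Hypothetical artifact contents, for illustration only.
ARTIFACTS = {
    "prompts/triage.txt": "<instructions>Route the case.</instructions>",
    "config/model.yaml": "model: claude-opus-4-7",
    "logic/rules.py": "def route(case): return case.priority",
}
```

A scan like this only finds textual markers. Behavioral assumptions, such as how the model handles ambiguous tool calls, still have to be surfaced with evaluations run against an alternative provider.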

Cost / latency / governance / reliability implications: FDE-built systems running on hosted model endpoints can add 40–120ms per inference call compared to self-hosted alternatives, with costs of roughly $15–80 per 1,000 agent steps depending on model tier and context window utilization. The governance implication is more significant: if FDE-delivered artifacts include the observability and audit infrastructure, the enterprise may not have independent access to the behavioral logs needed to satisfy AI governance requirements, a critical gap as the EU AI Act’s obligations for high-risk systems, including automatic record-keeping, come into effect.
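One way to close that governance gap is to record every model invocation in a log the enterprise writes and stores itself, independent of whatever observability stack the FDE team installed. This Python sketch assumes an in-memory list standing in for enterprise-owned durable storage; `AuditLog` and `logged_call` are hypothetical names, and the SHA-256 hash chain is one common tamper-evidence technique, not a requirement taken from the EU AI Act text.

```python
import hashlib
import json
from dataclasses import dataclass, field
from typing import Callable

GENESIS = "0" * 64  # placeholder "previous hash" for the first entry


def _digest(body: dict) -> str:
    # Canonical JSON (sorted keys) so the same record always hashes the same way.
    return hashlib.sha256(json.dumps(body, sort_keys=True).encode("utf-8")).hexdigest()


@dataclass
class AuditLog:
    """Append-only, hash-chained log; a list stands in for enterprise-owned storage."""
    entries: list[dict] = field(default_factory=list)

    def append(self, record: dict) -> dict:
        prev_hash = self.entries[-1]["hash"] if self.entries else GENESIS
        body = {"prev_hash": prev_hash, **record}
        entry = {**body, "hash": _digest(body)}
        self.entries.append(entry)
        return entry

    def verify(self) -> bool:
        """Recompute the chain; any edited or reordered entry breaks it."""
        prev_hash = GENESIS
        for entry in self.entries:
            body = {k: v for k, v in entry.items() if k != "hash"}
            if body["prev_hash"] != prev_hash or _digest(body) != entry["hash"]:
                return False
            prev_hash = entry["hash"]
        return True


def logged_call(log: AuditLog, provider: str, generate: Callable[[str], str], prompt: str) -> str:
    """Invoke any model function and record the exchange before returning it."""
    output = generate(prompt)
    log.append({"provider": provider, "prompt": prompt, "output": output})
    return output
```

A production version would add timestamps and write to durable storage the enterprise controls; the design point is that the audit trail sits outside any vendor-delivered monitoring stack.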

What to Watch

The competitive dynamics of the FDE model will become clearer over the next two quarters. Watch for which enterprises publicly report governance conflicts with FDE-delivered architectures — these will likely first surface in regulated industries (financial services, healthcare) where audit trail ownership is a compliance requirement, not a preference. Watch for whether open-weight model providers (Meta, Mistral, Cohere) move to offer competing FDE-style services as a “model-agnostic” alternative — that would directly undercut the lock-in thesis and force Anthropic and OpenAI to compete on architectural openness rather than implementation speed. And watch for the first major enterprise that publicly cites FDE lock-in as a reason for a model migration project — that case study will be the one every enterprise architecture team cites in vendor negotiations for the next five years.

Sources

- Anthropic and OpenAI are both launching joint ventures for enterprise AI services — TechCrunch

- Anthropic deepens push into Wall Street with new AI agents, full Microsoft 365 integration, Moody’s data partnership — Fortune

- OpenAI, Anthropic expand services push, signaling new phase in enterprise AI race — CIO

- Anthropic’s financial agents expose forward-deployed engineers as new AI limiting factor — CIO

- Anthropic teams with Goldman, Blackstone and others on $1.5 billion AI venture — CNBC

- The Palantir Model That Anthropic and OpenAI Are Now Copying — Revolution in AI

- Enterprise AI Agent Playbook: What Anthropic and OpenAI Reveal About Building Production-Ready Systems — WorkOS

- Anthropic and OpenAI establish joint ventures on Wall Street — SiliconANGLE
