Five AI Vendors Shipped Agent Registries in One Quarter — That's Not Competition, It's a Production Crisis Signal

When Microsoft, AWS, Google, ServiceNow, and Okta all ship 'agent registries' within weeks of each other, enterprise architects need to read that convergence carefully — because the agent inventory problem is now a compliance deadline, not a backlog item.


There is a specific kind of industry signal that senior engineers learn to read carefully: when five or more major vendors ship near-identical products within the same quarter, it is never coincidence. It means something already broke in production at enough large enterprises that multiple platform teams independently concluded “we need to build this.” That is precisely what happened with AI agent registries in Q1–Q2 2026 — and if your organization is past a dozen deployed agents, the signal is addressed to you.

Microsoft took Agent 365 to general availability on May 1, 2026. AWS launched the Bedrock Agent Registry into public preview in April. Google absorbed Agentspace into the Gemini Enterprise Agent Platform — which ships with its own Agent Registry, Agent Identity, Agent Gateway, and Agent Observability modules — at Cloud Next in late April. ServiceNow launched AI Control Tower. Okta launched Okta for AI Agents. Kong extended its API governance toolchain to cover MCP servers. Collibra applied data governance principles to the agent problem.

Six months ago, none of these products existed in shipping form. Today they all do, from seven different vendors targeting the same enterprise buyer profile. That is a market convergence event, not a product cycle.

The Agent Sprawl Problem, Quantified

The numbers behind the registry race are not marketing projections. According to Google’s own 2026 AI agent survey, the average enterprise is already running twelve AI agents. Gartner puts adoption at 97%, measured as executives reporting at least one production agent deployment in the past year. The same body of research also shows that fewer than one in five of those organizations has a mature governance model for what those agents are doing.

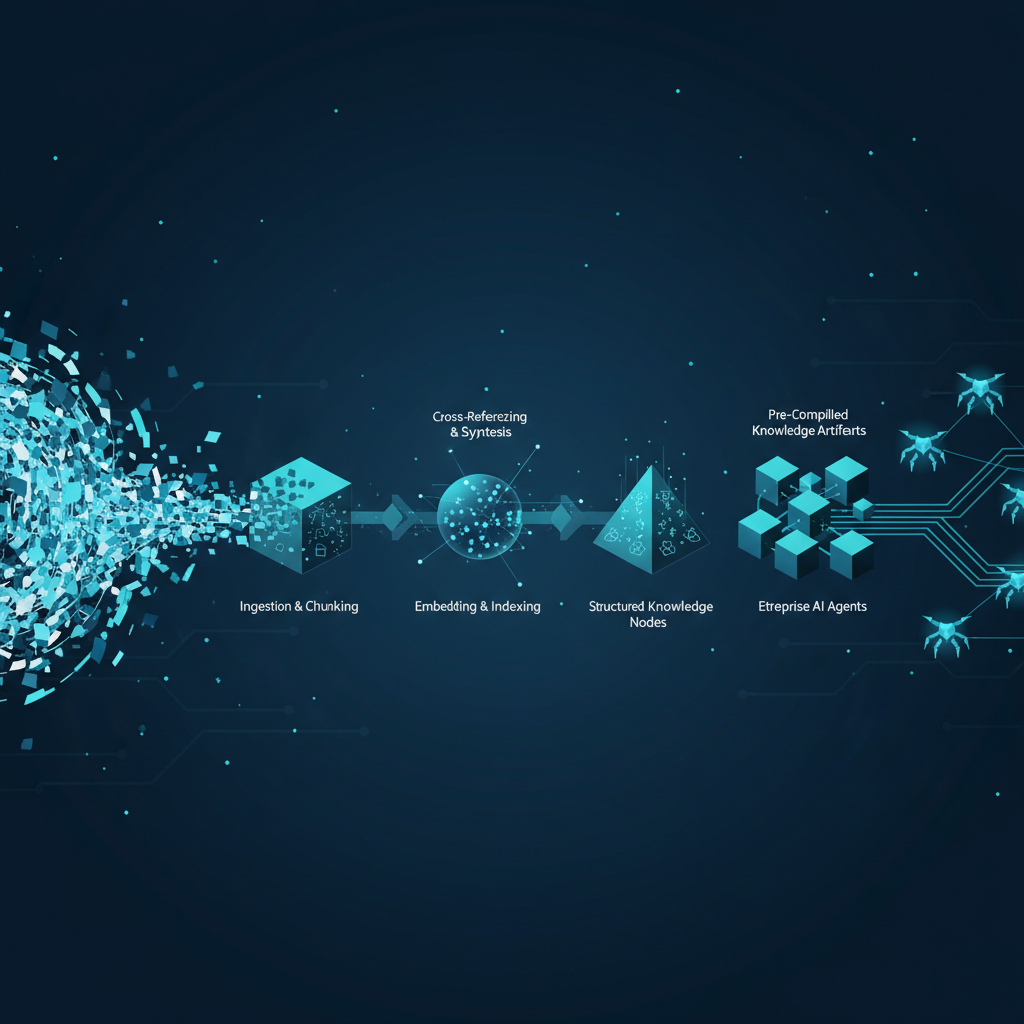

This is the structural failure: agents are dramatically easier to build than traditional applications. A developer with API access to an LLM and a few MCP server connections can ship an autonomous agent in an afternoon. The same developer cannot retroactively create an inventory of who built it, what data it accesses, what external systems it calls, what its failure mode is when an upstream API changes, or who is responsible for deprecating it when the original builder leaves the team.

The problem compounds at scale. In a large enterprise, agents are being spun up across business units, embedded silently into SaaS platforms (Salesforce Agentforce agents, Microsoft Copilot extensions, Workday AI workers), integrated via APIs that bypass IT entirely, and built in-house by development teams optimizing for velocity. The agents themselves are often stateful, long-running, and connected to production data systems — meaning they are not demos or experiments. They are running code touching live databases, customer records, and external APIs, with no centralized catalog, no owner manifest, and no runtime policy enforcement.

The API equivalent of this situation played out between 2015 and 2019: after REST APIs proliferated, it took enterprises roughly four years to build mature API governance. Agents are proliferating faster, into more sensitive systems, and the time available to close the governance gap is being compressed by regulatory pressure rather than set by internal discipline.

The Registry Race: What Each Platform Actually Ships

The nuances between these products matter for architecture decisions, so it is worth being specific about what each one actually does in its current state.

Microsoft Agent 365 is the most complete offering in terms of cross-cloud reach. At GA, it provides discovery and management of agents running on Azure, inventory sync with locally running Windows-based agents, and — critically — public preview of registry sync with AWS Bedrock and Google Cloud’s Gemini Enterprise Agent Platform. This means a Microsoft 365 admin can theoretically get a unified view of agents across all three major hyperscalers from a single console. The catch is that “registry sync” is exactly what it says: inventory and basic lifecycle controls (discover, start, stop, delete). It does not intercept or inspect what agents are doing at runtime on third-party clouds. Pricing is $15 per user per month standalone or bundled in the M365 E7 SKU.

AWS Bedrock Agent Registry is part of Amazon Bedrock AgentCore, covering not just agents but MCP servers, tools, and agent skills. It enforces an approval lifecycle: assets move from draft to pending approval to discoverable organization-wide, with IAM policies governing who can register versus who can discover. This is meaningfully closer to a software supply chain model than a simple directory. AWS agents registered here get provenance tracking that is auditable — important for SR 11-7 compliance claims.
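To make the supply-chain comparison concrete, here is a minimal Python sketch of that draft, pending approval, discoverable lifecycle as a state machine. The role names (`publisher`, `approver`, `consumer`), the `RegistryAsset` class, and the separation-of-duties rule are hypothetical stand-ins for IAM policies; this is not the AgentCore API, only an illustration of why splitting "who can register" from "who can discover" produces an auditable provenance trail.

```python
from dataclasses import dataclass, field
from enum import Enum


class State(Enum):
    DRAFT = "draft"
    PENDING_APPROVAL = "pending_approval"
    DISCOVERABLE = "discoverable"


# (current state, action) -> (required role, next state).
# Hypothetical roles standing in for IAM policies.
TRANSITIONS = {
    (State.DRAFT, "submit"): ("publisher", State.PENDING_APPROVAL),
    (State.PENDING_APPROVAL, "approve"): ("approver", State.DISCOVERABLE),
    (State.PENDING_APPROVAL, "reject"): ("approver", State.DRAFT),
}


@dataclass
class RegistryAsset:
    name: str
    kind: str  # e.g. "agent", "mcp_server", "tool", "skill"
    state: State = State.DRAFT
    history: list = field(default_factory=list)  # provenance trail

    def apply(self, action: str, actor: str, roles: set) -> None:
        key = (self.state, action)
        if key not in TRANSITIONS:
            raise ValueError(f"{action!r} is not allowed from {self.state.value}")
        required_role, next_state = TRANSITIONS[key]
        if required_role not in roles:
            raise PermissionError(f"{actor} lacks role {required_role!r}")
        # Separation of duties: nobody approves their own submission.
        if action == "approve" and any(
            who == actor and what == "submit" for who, what, _, _ in self.history
        ):
            raise PermissionError(f"{actor} cannot approve their own submission")
        self.history.append((actor, action, self.state.value, next_state.value))
        self.state = next_state


def discoverable(assets, roles: set) -> list:
    """Only approved assets are visible, and only to callers with the consumer role."""
    if "consumer" not in roles:
        return []
    return [a.name for a in assets if a.state is State.DISCOVERABLE]
```

Every transition appends who moved the asset and between which states, so the history doubles as the audit record that a model-risk review would ask for.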

Google Gemini Enterprise Agent Platform (the Vertex AI rebrand) goes the furthest in integrated governance surface area, shipping Agent Registry alongside Agent Identity (agents as managed service accounts with scoped permissions), Agent Gateway (policy enforcement at the edge of agent calls), and Agent Observability (trace-level logging). The A2A protocol v1.0, now native in LangGraph, CrewAI, LlamaIndex, Semantic Kernel, and AutoGen, enables cross-platform agent handoffs that are theoretically observable end-to-end — though in practice the observability is only as good as what each platform exports.

ServiceNow AI Control Tower approaches the problem from the compliance and workflow angle rather than the infrastructure angle. It is built around business process governance: approval workflows for agent deployment, risk scoring, escalation paths when agents exceed defined action boundaries. For enterprises already running ServiceNow as their IT service management backbone, this is the most natural integration point.

Okta for AI Agents attacks the identity layer, treating agents as non-human identities that need the same credential lifecycle management, MFA equivalents (challenge flows for sensitive actions), and access reviews that human users go through. This is the most focused and arguably most production-critical capability in the short term — an agent with a stale credential or an over-permissioned service account is already a live security finding, not a theoretical risk.
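An access review for non-human identities can start from two questions: is the credential older than the rotation policy allows, and does it grant scopes the agent never uses? A minimal Python sketch, assuming a hypothetical 90-day rotation policy and made-up scope names; it does not use the Okta API, and the `used_scopes` field presumes you can derive observed usage from access logs.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta
from typing import FrozenSet, List, Tuple


@dataclass(frozen=True)
class AgentCredential:
    agent_id: str
    issued_at: datetime
    granted_scopes: FrozenSet[str]
    used_scopes: FrozenSet[str]  # scopes actually exercised during the review window


def review(creds, now: datetime, max_age: timedelta = timedelta(days=90)) -> List[Tuple]:
    """Return access-review findings: stale credentials and unused (excess) scopes."""
    findings = []
    for c in creds:
        age = now - c.issued_at
        if age > max_age:  # hypothetical 90-day rotation policy by default
            findings.append((c.agent_id, "stale", f"{age.days} days old"))
        unused = c.granted_scopes - c.used_scopes
        if unused:  # granted but never used: candidate for revocation
            findings.append((c.agent_id, "over_permissioned", sorted(unused)))
    return findings
```

Both findings map directly to the "stale credential" and "over-permissioned service account" cases above; neither requires knowing what the agent does, only what it holds and what it has touched.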

What a Registry Actually Buys You (And What It Doesn’t)

This is the part the vendor slide decks elide. An agent registry solves the visibility problem. It gives you a manifest: these agents exist, they are registered to these owners, they were last checked at this timestamp. What it does not solve — and what none of these products currently ship in production-ready form — is runtime policy enforcement at the action level.

Knowing an agent exists is different from knowing what it does on a given Tuesday when a particular edge case triggers. Current registry products tell you an agent is running; they do not tell you that the agent made 847 calls to a payments API last night because a retrieval step fed it a malformed customer segment. They do not alert you when an agent that was registered with “read-only finance data access” is being used by a new workflow that passes it write credentials through a tool call. They do not catch the prompt injection that causes an otherwise well-behaved agent to exfiltrate data through an outbound webhook.
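The payments example is a rate problem that a registry entry cannot express but a runtime monitor can. A minimal Python sketch of a sliding-window counter keyed by agent and tool, assuming call events arrive in timestamp order from some gateway or trace log; the limit, window, and tool names are hypothetical.

```python
from collections import defaultdict, deque


class CallRateMonitor:
    """Flags an agent whose calls to one tool exceed a limit within a sliding window."""

    def __init__(self, limit: int, window_seconds: float):
        self.limit = limit
        self.window = window_seconds
        self.calls = defaultdict(deque)  # (agent_id, tool) -> recent call timestamps

    def record(self, agent_id: str, tool: str, ts: float) -> bool:
        """Record a call at time ts (seconds); return True if the limit is now exceeded."""
        q = self.calls[(agent_id, tool)]
        q.append(ts)
        # Evict timestamps that have fallen out of the window.
        while q and q[0] <= ts - self.window:
            q.popleft()
        return len(q) > self.limit
```

Fed with 847 calls at ten-second intervals and a limit of 100 per hour, this monitor starts alerting on the 101st call, long before the batch finishes; the registry would still show the agent as healthy and approved.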

The registry layer is a necessary but insufficient condition for enterprise AI governance. Sufficiency requires runtime observability at the tool-call and output level, which is closer to what LLM gateway and observability products like Portkey, LangSmith Enterprise, and Weights & Biases Weave are building than to what the hyperscaler registries ship today.

The practical implication is that enterprise AI architects need to treat the registry as Layer 1 of a two-layer governance stack. Layer 1 is inventory and identity (who built this agent, what credentials does it hold, is it approved for production). Layer 2 is runtime behavioral monitoring (what is this agent actually doing, does that match its stated purpose, who gets alerted when it doesn’t). Organizations that ship Layer 1 and declare governance solved are going to discover their agents have been misbehaving in ways no one noticed precisely because the registry gave them a false sense of control.
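The two layers can be sketched as a single check: Layer 1 answers whether the agent is known and approved, and Layer 2 compares each observed tool call with what the agent declared. A minimal Python sketch; `RegistryEntry`, `ToolCall`, and the action strings are hypothetical, and a real deployment would evaluate calls in a gateway or from traces rather than in-process.

```python
from dataclasses import dataclass
from typing import Dict, FrozenSet, Tuple


@dataclass(frozen=True)
class RegistryEntry:
    """Layer 1: inventory and identity."""
    agent_id: str
    owner: str
    approved_for_production: bool
    declared_actions: FrozenSet[str]  # the agent's stated purpose, as permitted actions


@dataclass(frozen=True)
class ToolCall:
    """Layer 2 input: one action observed at runtime (e.g. from gateway traces)."""
    agent_id: str
    action: str


def evaluate(call: ToolCall, registry: Dict[str, RegistryEntry]) -> Tuple[bool, str]:
    entry = registry.get(call.agent_id)
    if entry is None:
        return False, "unregistered agent"  # invisible to Layer 1
    if not entry.approved_for_production:
        return False, "agent not approved for production"
    if call.action not in entry.declared_actions:
        # Behavior diverges from stated purpose: block and alert the owner.
        return False, f"undeclared action {call.action!r}; alert {entry.owner}"
    return True, "ok"
```

The write-credential scenario described earlier is the `finance:write` case here: the registry entry is valid and approved, yet the call is flagged because it falls outside the declared actions. Layer 1 alone would have passed it.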

The SuperML Take

The convergence of five agent governance products in a single quarter is an industry signal about what is actively breaking in production, not what is theoretically possible. Enterprise AI teams should read it as confirmation that agent sprawl has crossed from engineering inconvenience to operational liability — and that the window for building governance-by-design rather than governance-by-retrofit is closing.

The production-ready version of the agent registry story is significantly more complex than the press release version. The press releases describe platforms that discover and manage agents. The production reality is that most enterprise agent sprawl is not well-formed Bedrock agents or registered Vertex AI deployments — it is ad hoc LangGraph workflows running in someone’s cloud function, AutoGen pipelines deployed in an internal Kubernetes namespace with no service account, Zapier AI agents wired to production CRMs, and embedded agents in SaaS tools that the platform teams never consented to. Registries that require explicit registration will only catch the agents that well-behaved teams voluntarily submit. The agents creating the actual risk are the ones that won’t show up in any registry.

For a senior platform engineer, AI architect, or enterprise AI leader, the practical steps are these. First, run an agent inventory before selecting a governance product; the inventory will be more alarming than expected. Second, treat the registry decision as a long-term platform commitment: these registries are becoming the control plane for your agentic infrastructure the same way IAM became the control plane for cloud access. Picking Microsoft Agent 365 locks you into one observability and policy enforcement model; picking AWS Bedrock AgentCore locks you into another. The inter-registry sync that Microsoft is previewing is promising, but it is not yet bidirectional policy enforcement, so do not architect around capabilities that are still in preview.

The six-to-twelve-month outlook is that runtime behavioral monitoring becomes the next battleground. The vendors who currently lead on registry completeness (Google’s integrated stack is the most sophisticated today) will face pressure from observability-native companies that can deliver runtime policy enforcement without requiring agents to be deployed on a specific platform. Watch for LangSmith Enterprise, Weights & Biases Weave, and Arize Phoenix to move toward governance features aggressively in the second half of 2026. The registry layer will commoditize. Runtime behavioral enforcement will be where differentiation lands.

The governance gap between what enterprises think they have and what is actually running in production is currently the highest-severity unaddressed risk in enterprise AI. The registries are a good first step. They are not a solution.

Architecture Impact

What changes in system design?

Agent lifecycle management now needs to be designed with the same rigor as microservice lifecycle management: registration at deployment, IAM-scoped credentials with minimum necessary privilege, an approval workflow that gates production promotion, a health check surface, and a deprecation path with owner notification. Any agent that can be deployed without a registry entry should be treated as an architectural anti-pattern by the same logic that any API endpoint without a gateway is an anti-pattern.
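Those lifecycle requirements translate naturally into a manifest that a deployment pipeline can validate. A minimal Python sketch; the `AgentManifest` fields, the wildcard-scope rule, and the example values are hypothetical, chosen to mirror the requirements listed above rather than any vendor's schema.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class AgentManifest:
    # Hypothetical fields mirroring the lifecycle requirements in the text.
    name: str
    owner: str
    credential_scopes: List[str]
    approved_by: Optional[str] = None          # approval gate for production promotion
    health_check_url: Optional[str] = None     # health check surface
    deprecation_contact: Optional[str] = None  # who is notified at deprecation


def validate(m: AgentManifest) -> List[str]:
    """Return lifecycle problems; an empty list means the manifest is complete."""
    problems = []
    if not m.owner:
        problems.append("missing owner")
    if not m.credential_scopes:
        problems.append("no credential scopes declared")
    elif any(s == "*" or s.endswith(":*") for s in m.credential_scopes):
        problems.append("wildcard scope violates least privilege")
    if not m.approved_by:
        problems.append("not approved for production")
    if not m.health_check_url:
        problems.append("no health check endpoint")
    if not m.deprecation_contact:
        problems.append("no deprecation contact")
    return problems
```

Returning every problem at once, rather than failing on the first, lets a team fix an incomplete manifest in one pass instead of iterating against the pipeline.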

What new failure mode appears?

Registry theater is the primary failure mode to design against: organizations invest in building a registry, achieve 70–80% agent coverage for well-behaved teams, and then treat that coverage as equivalent to governance completeness. The 20–30% of agents that are not registered — typically the highest-risk ones built outside of formal engineering processes — are invisible to the registry and to the compliance audit. A second failure mode is registry staleness: agent registries that are not integrated into CI/CD pipelines will fall behind actual deployment state within weeks of launch, turning the registry into a historical artifact rather than a live control plane.
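Both failure modes are measurable if you compare what is actually running against what is registered. A minimal Python sketch, assuming you can produce a set of discovered agent IDs (from cloud function listings, Kubernetes namespaces, SaaS admin exports, or similar) and a registry export with a last-verified timestamp per entry; the 14-day staleness threshold is an arbitrary example.

```python
from datetime import datetime, timedelta
from typing import Dict, Set


def audit(discovered: Set[str], registered: Dict[str, datetime], now: datetime,
          max_staleness: timedelta = timedelta(days=14)) -> dict:
    """Compare running agents with registry entries (values = last verified time)."""
    known = set(registered)
    covered = discovered & known
    return {
        # Share of running agents the registry can see; 0.8 means 20% are invisible.
        "coverage": len(covered) / len(discovered) if discovered else 1.0,
        # Running but unregistered: the agents the compliance audit will miss.
        "unregistered": sorted(discovered - known),
        # Registered but not found running: retired or renamed agents left behind.
        "ghosts": sorted(known - discovered),
        # Registered and running, but not re-verified recently.
        "stale": sorted(a for a in covered if now - registered[a] > max_staleness),
    }
```

Note that the coverage figure is only as honest as the discovery step: if discovery relies on the same voluntary submission as the registry, it will report 100% and reproduce registry theater.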

What enterprise teams should evaluate:

- Platform engineering teams: Agent registration must be added to the CI/CD pipeline as a mandatory step, not an opt-in practice — evaluate whether your chosen registry exposes an API that supports automated registration at deploy time, not just manual submission.

- Security and CISO teams: Assess the gap between what your registry can see (agent inventory, declared permissions) and what it cannot see (agent runtime behavior, tool call sequences, outbound data flows) — this gap is where your current AI-related security exposure lives.

- Compliance and risk teams: EU AI Act Article 13 transparency requirements and SR 11-7 model risk governance both require more than an inventory — they require documented model purpose, scope, monitoring, and incident response procedures; validate that your registry captures or links to that metadata, not just existence records.
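A deploy-time gate can combine the platform and compliance requirements: block promotion unless the agent has an approved registry entry that links the metadata auditors will ask for. A minimal Python sketch; `lookup` is a hypothetical stand-in for whatever API your registry exposes, and the required field names are illustrative, not taken from the EU AI Act or SR 11-7 text.

```python
from typing import Callable, List, Optional

# Illustrative metadata fields a compliance review might require.
REQUIRED_METADATA = ("purpose", "scope", "monitoring", "incident_response")


def registration_gate(agent_id: str,
                      lookup: Callable[[str], Optional[dict]]) -> List[str]:
    """Return blocking errors for a deploy; an empty list means promotion may proceed."""
    entry = lookup(agent_id)  # hypothetical registry query
    if entry is None:
        return [f"{agent_id} has no registry entry"]
    errors = []
    if entry.get("state") != "approved":
        errors.append(f"{agent_id} is in state {entry.get('state')!r}, not 'approved'")
    missing = [k for k in REQUIRED_METADATA if not entry.get(k)]
    if missing:
        errors.append(f"{agent_id} missing compliance metadata: {', '.join(missing)}")
    return errors
```

In a pipeline, a non-empty result would be printed and the step would exit non-zero so promotion fails; because the gate only needs a lookup callable, it can run against any registry that exposes a query API.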

Cost / latency / governance / reliability implications:

Microsoft Agent 365 at $15/user/month represents $900K annually for a 5,000-user organization before any underlying compute costs. Multi-cloud organizations paying for Agent 365 plus Bedrock AgentCore plus Google’s governance layer face compounding governance overhead on top of agent compute costs — governance sprawl is a real budget line item. On the reliability side, the registry itself becomes a dependency: if your agent deployment pipeline requires a registry entry before promotion, a registry outage becomes a deployment blocker; this needs to be designed with fallback paths and registry availability SLAs that match your deployment frequency requirements.
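One way to keep a registry outage from becoming a deployment blocker, without silently dropping the control, is a last-known-good cache with a bounded age. A minimal Python sketch; `fetch` stands in for a hypothetical registry call that raises `ConnectionError` when the registry is unreachable, and the maximum snapshot age is a parameter you would tune to your deployment frequency.

```python
import time
from typing import Callable, Dict, Tuple


class RegistryClientWithFallback:
    """Checks approval against the live registry, falling back to a cached snapshot."""

    def __init__(self, fetch: Callable[[str], bool], max_snapshot_age_s: float,
                 clock: Callable[[], float] = time.monotonic):
        self.fetch = fetch
        self.max_age = max_snapshot_age_s
        self.clock = clock
        self.snapshot: Dict[str, Tuple[bool, float]] = {}  # agent_id -> (approved, fetched_at)

    def is_approved(self, agent_id: str) -> bool:
        try:
            approved = self.fetch(agent_id)
        except ConnectionError:
            cached = self.snapshot.get(agent_id)
            if cached is None:
                raise  # no last-known-good answer: fail closed
            approved, fetched_at = cached
            if self.clock() - fetched_at > self.max_age:
                raise  # snapshot too old to trust: fail closed
            return approved
        # Live answer succeeded: refresh the snapshot for future outages.
        self.snapshot[agent_id] = (approved, self.clock())
        return approved
```

The design fails closed: an agent that was never seen, or whose cached approval is older than the allowed age, still blocks the deploy, so the fallback narrows the outage impact without turning the registry into an optional check.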

What to Watch

The inter-registry sync capability in Microsoft Agent 365 is currently in public preview and limited to inventory and basic lifecycle controls, with no policy enforcement; it is the most important feature to watch as it moves toward GA. Bidirectional policy enforcement across cloud boundaries (applying a Microsoft-defined policy to an agent running on Google Cloud) would represent a genuine architectural shift in enterprise AI governance. Watch the AWS re:Inforce sessions and Google Cloud Security Summit announcements in Q3 2026 for whether any of the three hyperscalers moves toward cross-cloud runtime behavioral enforcement rather than just inventory sync.

The Okta for AI Agents identity layer is the sleeper play. Credential lifecycle management (rotation, scoping, access review, revocation) is a solved problem for human users but almost entirely unaddressed for agents. As agents accumulate production credentials (database connections, payment API keys, customer PII access), the identity attack surface grows. A call made with a compromised agent credential is indistinguishable from a legitimate one. Watch Okta’s roadmap for behavioral anomaly detection on agent credentials, which would close the gap between registry governance and runtime security.

Finally, track how EU AI Act enforcement matures after the August 2026 deadline. The transparency and documentation requirements for high-risk AI applications will force organizations to operationalize agent inventories as compliance artifacts, not just engineering practices. That regulatory forcing function will drive registry adoption faster than any vendor sales motion.

Sources

- Microsoft Agent 365 GA announcement — Microsoft Security Blog

- Microsoft takes Agent 365 out of preview as shadow AI becomes an enterprise threat — VentureBeat

- AWS Launches Agent Registry in Preview to Govern AI Agent Sprawl — InfoQ

- AWS targets AI agent sprawl with new Bedrock Agent Registry — InfoWorld

- Google Cloud Next 2026: A2A protocol, Workspace Studio, and the full-stack bet — The Next Web

- Introducing Gemini Enterprise Agent Platform — Google Cloud Blog

- Microsoft, Google push AI agent governance into enterprise IT mainstream — Computerworld

- Your AI Agents Are Already Inside the Perimeter — The Hacker News

- State of Agent Engineering 2026 — LangChain