CommBank's Fraud Agent Now Writes Its Own Detection Rules — The Architecture Shift Behind a 20% Drop in Fraud Losses

Commonwealth Bank deployed an agentic AI system that doesn't just flag fraud: it generates new detection rules in real time, then hands them to humans for approval. The 20% fraud loss reduction is real. So is the governance architecture required to keep it from becoming a liability.

There is a specific kind of AI deployment that separates fraud teams into two cohorts: teams that detect known fraud faster, and teams that find fraud patterns nobody had named yet. Commonwealth Bank just published numbers on a system that does the second thing — and the production details are worth unpacking beyond the press release.

In late April 2026, CommBank announced an agentic AI system monitoring more than 80 million signals per day across its transaction, card, online payment, and digital banking channels. The system does what most ML fraud platforms do: it watches for anomalies, clusters patterns, assesses severity. What makes it different is the next step. When it identifies a new threat pattern, it doesn’t just alert a human analyst. It proposes a new detection rule — a concrete, implementable rule that the fraud analytics team can review, adjust, and deploy. The agent produced or updated three-quarters of CommBank’s card fraud rules. Fraud losses dropped more than 20% in the first half of FY2026 compared to the same period in FY2025.

That is a meaningful production result. It is also an architecture with specific requirements, specific failure modes, and a governance structure that is doing real work — not just compliance theater.

Why Detection-to-Rule-Generation Is a Different Problem

Traditional ML fraud detection is a classification problem at its core. You build a model that scores transactions, set a threshold, trigger a review queue. The model gets retrained on labeled fraud data. The feedback loop is: fraud happens → analysts label it → model learns → detection improves, with latency measured in weeks or months.

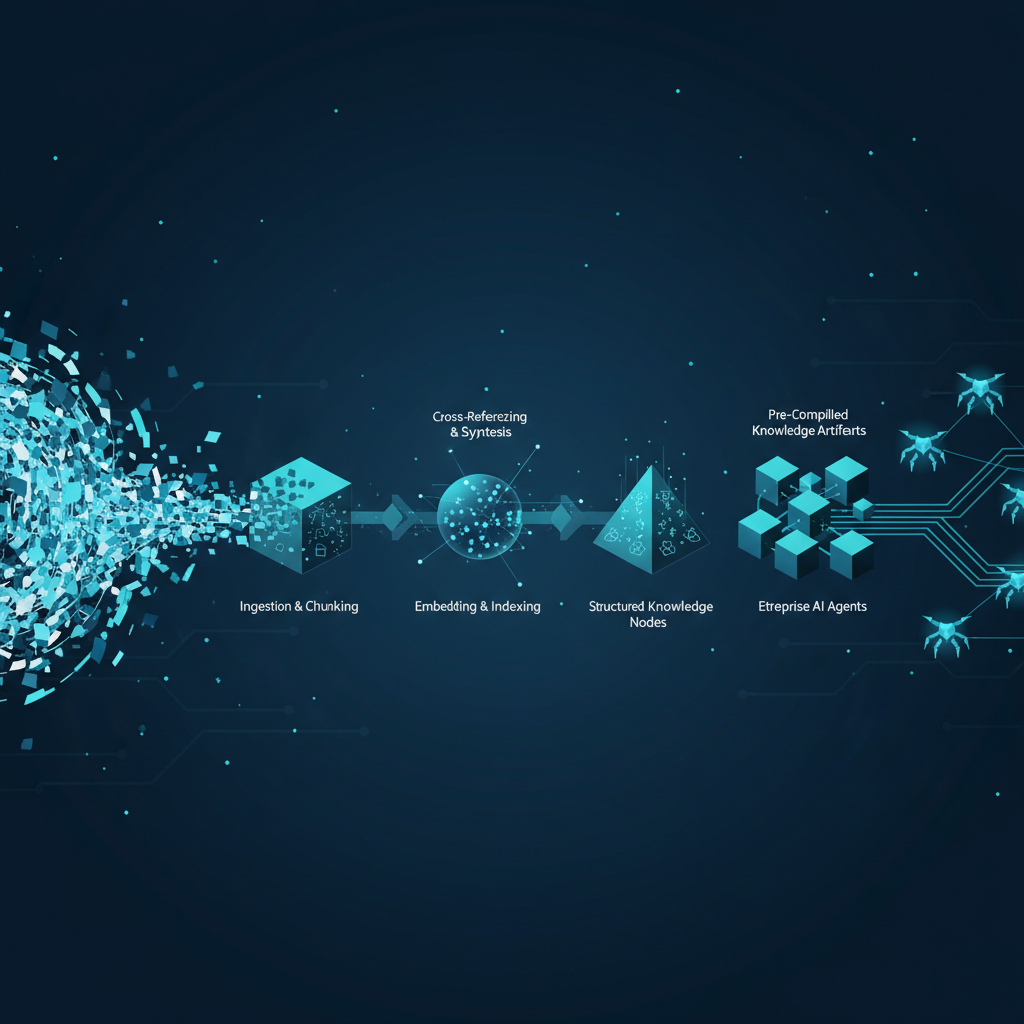

CommBank’s architecture collapses part of that loop. The agent observes 80 million daily signals, surfaces a new cluster of suspicious behavior, assesses severity and context, and drafts a detection rule. That rule goes to the fraud analytics team for human review and approval before deployment. The feedback loop becomes: fraud pattern emerges → agent drafts rule → analyst reviews → rule deploys. The cycle shortens from weeks to hours.
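The shortened loop can be sketched as a small state machine. This is a minimal illustration, not CommBank's implementation: the `RuleProposal` class, its field names, and the rule expression syntax are all hypothetical. The point it shows is that deployment is only reachable through an analyst approval step, and that every transition is recorded.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone
from enum import Enum


class Status(Enum):
    PROPOSED = "proposed"
    APPROVED = "approved"
    REJECTED = "rejected"
    DEPLOYED = "deployed"


@dataclass
class RuleProposal:
    rule_id: str
    expression: str   # hypothetical rule syntax, for illustration only
    rationale: str    # the agent's explanation of the pattern it observed
    status: Status = Status.PROPOSED
    history: list = field(default_factory=list)

    def _log(self, actor: str, action: str) -> None:
        # Record who did what, and when, for later audit.
        self.history.append((datetime.now(timezone.utc), actor, action))

    def review(self, analyst: str, approve: bool) -> None:
        if self.status is not Status.PROPOSED:
            raise ValueError(f"cannot review a rule in state {self.status.value}")
        self.status = Status.APPROVED if approve else Status.REJECTED
        self._log(analyst, self.status.value)

    def deploy(self, operator: str) -> None:
        # The gate: only analyst-approved rules can go live.
        if self.status is not Status.APPROVED:
            raise PermissionError("rule has not been approved by an analyst")
        self.status = Status.DEPLOYED
        self._log(operator, "deployed")


proposal = RuleProposal(
    rule_id="card-0001",
    expression="amount > 900 and merchant_age_days < 7",
    rationale="cluster of high-value purchases at newly registered merchants",
)
proposal.review(analyst="analyst_a", approve=True)
proposal.deploy(operator="rules_engine")
print(proposal.status.value)  # deployed
```

Calling `deploy` on a proposal that is still `PROPOSED` or was `REJECTED` raises an error, which is the property the human gate depends on.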

This matters disproportionately for banks. Fraud is adversarial. The attackers are running their own inference loops — finding gaps in existing detection, exploiting new channels, adjusting tactics as soon as they get feedback that a pattern is being caught. A model that can only learn from historical labeled data is always fighting last month’s fraud. An agent that can identify unlabeled emerging patterns and propose new rules is at least trying to fight this month’s fraud.

The Snowflake data cloud underneath CommBank’s system is not incidental. The agent needs access to rich, real-time data across every channel simultaneously — card, digital, payments — with enough history to distinguish a genuine pattern from statistical noise. Snowflake’s architecture enables cross-channel joins at that scale without the data silos that would blind the agent to multi-channel fraud coordination. That data layer is load-bearing. Pull it out and the agent becomes a pattern detector with tunnel vision.

The Human-in-the-Loop Is Not Optional

The design choice that most enterprise teams will want to replicate carefully is the human approval gate on rule deployment. Every new detection rule proposed by the agent is reviewed and approved by CommBank’s fraud analytics team before going live. This is presented in CommBank’s announcement as an expected feature of responsible AI deployment. It is also, from a systems design perspective, the thing that keeps this architecture from becoming a liability.

Consider what a fraud detection rule actually is in production: a filter that intercepts transactions and triggers holds, blocks, or reviews. A rule that is too narrow misses fraud. A rule that is too broad blocks legitimate transactions, generates false positives, and degrades customer experience at scale. CommBank has 17 million retail customers and invests $1 billion a year in fraud safeguarding, so a bad rule costs real money in real time.
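The narrow-versus-broad trade-off can be made concrete by backtesting a candidate rule against labeled history before anyone approves it. The sketch below is illustrative only: the `Txn` fields, the two example rules, and the five sample transactions are invented for the example and are not taken from CommBank's system.

```python
from dataclasses import dataclass
from typing import Callable, Iterable


@dataclass(frozen=True)
class Txn:
    amount: float
    country: str
    is_fraud: bool  # label from a past investigation (assumed to be available)


def backtest(rule: Callable[[Txn], bool], txns: Iterable[Txn]) -> dict:
    """Count how a candidate rule would have behaved on labeled history."""
    tp = fp = fn = tn = 0
    for t in txns:
        hit = rule(t)
        if hit and t.is_fraud:
            tp += 1
        elif hit:
            fp += 1
        elif t.is_fraud:
            fn += 1
        else:
            tn += 1
    return {
        "recall": tp / (tp + fn) if tp + fn else 0.0,
        "false_positive_rate": fp / (fp + tn) if fp + tn else 0.0,
        "blocked_legit": fp,
    }


history = [
    Txn(900, "AU", False),
    Txn(1200, "XX", True),
    Txn(1500, "AU", False),
    Txn(50, "XX", True),
    Txn(20, "AU", False),
]

# Too narrow: catches only one of the two fraud cases.
narrow = backtest(lambda t: t.amount > 1000 and t.country == "XX", history)
# Too broad: catches all fraud but also blocks two legitimate transactions.
broad = backtest(lambda t: t.country == "XX" or t.amount > 800, history)
print(narrow)
print(broad)
```

A reviewer looking at both result dictionaries side by side sees the cost of each rule in the same units: missed fraud on one side, blocked legitimate customers on the other.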

The agent proposes rules. Analysts with domain expertise, regulatory awareness, and accountability review them. This is not a bureaucratic checkpoint — it is the failure mode prevention layer. The agent can identify a pattern that looks like fraud but is actually a new payment behavior in a demographic segment the model hasn’t seen much of. An analyst can catch that. An automated deployment pipeline cannot.

The question every bank should be asking is: what does your human review workflow actually look like at the volume this generates? If the agent is updating 75% of card fraud rules, that is a continuous stream of rule proposals. If the review process is a single analyst reading a queue at 9 AM, you have created a new bottleneck and given it a fancy name. The human-in-the-loop only works if the loop has capacity.
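A back-of-the-envelope capacity check makes the bottleneck visible. The function below is a rough sketch with made-up inputs: the proposal rate, team size, and reviews per analyst are assumptions chosen for illustration, not figures CommBank has published.

```python
def backlog_after(days: int, proposals_per_day: float,
                  analysts: int, reviews_per_analyst_per_day: float) -> float:
    """Unreviewed proposals left after `days`, assuming constant daily rates."""
    capacity = analysts * reviews_per_analyst_per_day
    backlog = 0.0
    for _ in range(days):
        # The queue grows by arrivals and shrinks by reviews, never below zero.
        backlog = max(0.0, backlog + proposals_per_day - capacity)
    return backlog


# Hypothetical: 12 proposals a day against 2 analysts doing 5 careful reviews each.
print(backlog_after(days=20, proposals_per_day=12, analysts=2,
                    reviews_per_analyst_per_day=5))  # 40.0
```

Any sustained gap between arrivals and review capacity compounds daily, so the backlog either gets cleared by rushed approvals or delays rules past the point where they are useful.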

The $1B Mortgage Fraud Backdrop

It is worth noting the context in which CommBank rolled out this agent. The bank is simultaneously managing a significant probe into approximately $1 billion in mortgage fraud — a different type of fraud, involving document manipulation and identity fabrication in the lending process rather than transaction-level card fraud. The agent in question targets card and payment fraud patterns, not mortgage origination.

The juxtaposition is instructive rather than ironic. Agentic AI excels at transaction-level pattern detection because the data is high-volume, structured, and real-time. Mortgage fraud, which involves manipulated documentation, false income statements, and synthetic identity construction over weeks-long origination processes, is a different detection problem entirely — one where document AI, identity verification, and multi-step workflow analysis matter more than real-time transaction scoring. CommBank’s fraud agent is a highly effective tool for one problem; it was never designed for the other. Conflating the two would be a category error.

The SuperML Take

The honest assessment of CommBank’s deployment is that it represents a genuine production shift in fraud AI architecture: not an incremental improvement on existing models, but a different approach to the detection-to-response pipeline. The 20% fraud loss reduction is a real number at a real bank, not a sandbox benchmark. That matters.

But the story the press release doesn’t tell is what it costs to operate this architecture reliably. Eighty million daily signals is not a light workload for pattern detection. Proposing rules at the rate implied by 75% coverage requires an inference infrastructure that can keep up with intraday fraud velocity. The Snowflake + cloud-native core banking stack CommBank is running was years in the making — the AI agent is sitting on top of a data architecture investment that most regional banks don’t have.

The replication risk is real. A bank that reads this announcement and decides to bolt an agentic rule-generation layer onto a legacy fraud stack will not get the same results. The data freshness, channel coverage, and cross-domain signal access that make the agent effective are properties of the data architecture, not the model. The agent is not magic; it’s a capable reasoning layer on top of an unusually well-instrumented data platform.

What should senior AI engineers and fraud technology leaders actually do with this? Three things. First, audit your data architecture before your model architecture — if your fraud signals are batched, siloed, or stale, an agentic layer won’t fix that. Second, think carefully about your human review workflow and its capacity: the agent creates a new kind of analyst workload, not less analyst work. Third, scope the agentic layer to the problem domain it can actually solve. CommBank’s agent is good at transaction pattern fraud. Synthetic identity, mortgage fraud, insider threat — these require different architectures and should not be assumed to follow from the same deployment.

The real risk in the next 12 months is not that banks won’t adopt agentic fraud AI — they will. The risk is that they’ll deploy the model without the data platform or the governance structure, measure results against legacy baselines, and conclude it doesn’t work. CommBank’s result is not about the agent. It’s about the ecosystem the agent sits inside.

Architecture Impact

What changes in system design? Adding an agentic rule-generation layer to fraud infrastructure requires real-time cross-channel data availability, not just batch transaction logs. The agent needs to observe patterns across card, digital, and payment channels simultaneously with sufficient historical depth to assess novelty — this forces data unification that most banks’ fraud stacks don’t have today. Rule proposal workflows also require a new integration surface between the ML inference layer and the rules engine, with versioning, audit trails, and rollback capability per rule.
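One way to picture the integration surface is a small rule store that versions every published rule, logs each change, and can roll back per rule. This is a minimal in-memory sketch under stated assumptions: `RuleStore`, `RuleVersion`, and their methods are hypothetical names, and a real deployment would sit on a durable store and integrate with whatever rules engine the bank runs.

```python
from dataclasses import dataclass
from datetime import datetime, timezone


@dataclass(frozen=True)
class RuleVersion:
    version: int
    expression: str      # hypothetical rule syntax
    approved_by: str
    approved_at: datetime


class RuleStore:
    def __init__(self) -> None:
        self._versions: dict[str, list[RuleVersion]] = {}
        self._active: dict[str, int] = {}
        self.audit_log: list[tuple[datetime, str, str, str]] = []

    def _audit(self, rule_id: str, actor: str, action: str) -> None:
        self.audit_log.append((datetime.now(timezone.utc), rule_id, actor, action))

    def publish(self, rule_id: str, expression: str, approved_by: str) -> RuleVersion:
        # Each publish appends a new immutable version and makes it active.
        versions = self._versions.setdefault(rule_id, [])
        v = RuleVersion(len(versions) + 1, expression, approved_by,
                        datetime.now(timezone.utc))
        versions.append(v)
        self._active[rule_id] = v.version
        self._audit(rule_id, approved_by, f"publish v{v.version}")
        return v

    def rollback(self, rule_id: str, actor: str) -> RuleVersion:
        # Step the active pointer back one version; history is never deleted.
        current = self._active[rule_id]
        if current <= 1:
            raise ValueError("no earlier version to roll back to")
        self._active[rule_id] = current - 1
        self._audit(rule_id, actor, f"rollback to v{current - 1}")
        return self.active(rule_id)

    def active(self, rule_id: str) -> RuleVersion:
        return self._versions[rule_id][self._active[rule_id] - 1]


store = RuleStore()
store.publish("card-0001", "amount > 900", approved_by="analyst_a")
store.publish("card-0001", "amount > 500", approved_by="analyst_b")
store.rollback("card-0001", actor="analyst_a")  # v2 caused a false positive spike
print(store.active("card-0001").expression)  # amount > 900
```

Keeping versions immutable and moving only a pointer means a rollback is itself an audited event rather than an edit that erases what was live.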

What new failure mode appears? The primary new failure mode is rule proposal flooding: the agent surfaces so many candidate rules that the human review queue becomes a bottleneck, approvals get rubber-stamped without genuine scrutiny, and poorly calibrated rules go live. This is the classic irony of automation, where automating a task increases human error: by making rule creation fast, you create pressure to approve rules fast, which defeats the governance purpose of the human gate. The secondary failure mode is the agent finding spurious patterns in high-volume data (noise mistaken for signal) and proposing rules that generate false positive spikes before an analyst catches the problem.
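The noise-versus-signal problem is partly a multiple-comparisons problem: screen enough clusters and some will look suspicious by chance. One simple guard, offered here as an assumption and not as anything CommBank has described, is a one-sided binomial test on the confirmed-fraud count in a cluster, with a Bonferroni correction for the number of clusters screened. The function names and the example numbers are hypothetical.

```python
from math import exp, lgamma, log


def _log_pmf(i: int, n: int, p: float) -> float:
    # Log of the Binomial(n, p) probability mass at i; requires 0 < p < 1.
    return (lgamma(n + 1) - lgamma(i + 1) - lgamma(n - i + 1)
            + i * log(p) + (n - i) * log(1 - p))


def binom_tail(k: int, n: int, p: float) -> float:
    """P(X >= k) for X ~ Binomial(n, p), computed in log space for stability."""
    return min(1.0, sum(exp(_log_pmf(i, n, p)) for i in range(k, n + 1)))


def is_credible(fraud_in_cluster: int, cluster_size: int, base_rate: float,
                clusters_screened: int, alpha: float = 0.05) -> bool:
    """True if the cluster's fraud count is unlikely under the base rate,
    after a Bonferroni correction for how many clusters were examined."""
    p_value = binom_tail(fraud_in_cluster, cluster_size, base_rate)
    return p_value < alpha / clusters_screened


# Hypothetical cluster: 6 confirmed frauds in 200 transactions, 1% base rate.
print(is_credible(6, 200, 0.01, clusters_screened=1))
print(is_credible(6, 200, 0.01, clusters_screened=500))
```

The same cluster passes when it is the only one examined and fails when it is one of 500 candidates, which is the situation an agent scanning high-volume data is usually in. Bonferroni is deliberately conservative; a team might prefer a false discovery rate procedure, but the shape of the check is the same.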

What enterprise teams should evaluate:

- Fraud analytics team: Review queue capacity and workflow tooling — how many rule proposals per day can analysts genuinely evaluate, and what decision support do they need to assess a proposed rule’s false positive rate before approval?

- Data engineering team: Real-time cross-channel data freshness and latency — if any channel’s data is batched or delayed, the agent’s pattern detection is blind to coordinated multi-channel fraud

- Model risk / compliance team: Rule provenance and audit trail completeness — regulators will want to know who approved each rule, when, and based on what evidence; the agent’s proposed rules need to be treated as model outputs requiring model risk governance, not just operational procedures

Cost / latency / governance / reliability implications: Running continuous pattern detection on 80M+ daily signals requires dedicated inference capacity — at a typical transaction-level embedding and clustering cost, this is not a workload that fits on shared GPU infrastructure. Expect dedicated serving infrastructure and 24/7 uptime SLAs, with rule deployment latency measured in hours (inference → human review → approval → deployment) rather than the milliseconds of the detection layer. From a governance perspective, each agent-generated rule is a model output and should carry the same documentation, approval, and monitoring requirements as any other production model under SR 11-7 / SR 26-2 guidance — something most banks’ model risk frameworks are not currently calibrated for at this rule generation velocity.

What to Watch

The CommBank deployment will prompt most major banks to accelerate agentic fraud pilots already in their AI roadmaps. Watch for: how rule-generation agents interact with existing rules engines (most are FICO, Actimize, or in-house logic layers not designed for AI-generated rule injection); whether regulators begin requiring audit trails on agent-proposed fraud rules as model outputs under model risk guidance; and how the vendor landscape responds — Featurespace, Sardine, and Sift will likely announce similar agentic rule-generation capabilities within six months. The real competitive signal will come from banks that publish false positive rates alongside fraud loss reductions. Fraud loss reduction without false positive data is an incomplete story.

Sources

- CommBank develops AI agent that spots new fraud and helps build defences — Commonwealth Bank newsroom, April 24 2026

- CommBank builds AI agent that spots fraud and helps build defences — Finextra

- CommBank rolls out AI agent as it probes $1bn mortgage fraud — Mortgage Professional Australia

- CommBank deploys agentic AI to spot scams as $1bn mortgage probe unfolds — El Balad

- AI Fraud Detection in Banking: The Complete 2026 Guide — Emburse

- AI is helping banks save millions by transforming payment fraud prevention — Mastercard
