The Ontology Layer Every Enterprise AI System Needs (But Almost None Have)

Most enterprise AI systems fail in production not because the models are wrong, but because nobody defined what 'customer', 'transaction', or 'risk' means consistently across systems. This is a practical implementation guide for building the semantic layer that makes AI grounded, governed, and production-ready.

There is a conversation that happens in nearly every enterprise AI project, usually around week six. A product manager asks why the AI assistant told a premium client they were “high risk.” A data scientist pulls up the logs. The model’s answer was technically correct — the account had three late payments in 180 days, which matched the feature definition for “high risk” in the credit scoring pipeline. But the account belonged to a sovereign wealth fund that was managing cash timing during a quarterly rebalancing window. Operationally, they were one of the bank’s most valuable relationships.

The model wasn’t wrong. The problem was that no one had formally defined what “customer,” “risk,” and “relationship value” meant in relation to each other. The AI was optimizing against features, not concepts. The missing layer between raw data and intelligent behavior — the thing that could have connected credit signal to relationship context — is an ontology.

Most teams skip it because it sounds academic. Most teams eventually pay for skipping it.

Why Prompts Without a Semantic Layer Break in Production

The standard approach to enterprise AI is to write a good prompt, point it at a database, and hope the model figures out what “customer churn risk” means from column names. This works in demos. It stops working in production for three predictable reasons.

First, naming inconsistency. In most enterprises, “customer” exists as client_id in the CRM, counterparty_ref in the trading system, applicant_number in lending origination, and entity_code in the risk ledger. Without a formal mapping layer, every model invocation is an ad-hoc join made by a language model that has no persistent knowledge of your schema. The model will get it right sometimes. It will silently get it wrong in edge cases you haven’t seen yet.
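One way to picture the missing mapping layer is a lookup from each system's local identifier to a single canonical slot. This is a minimal sketch: the system keys and the `CANONICAL_IDS` table are illustrative assumptions, with field names taken from the examples above.

```python
# Hypothetical mapping from (system, field) to the canonical ontology slot.
# Field names follow the examples in the text; the system keys are assumed.
CANONICAL_IDS = {
    ("crm", "client_id"): "Customer.customer_id",
    ("trading", "counterparty_ref"): "Customer.customer_id",
    ("lending", "applicant_number"): "Customer.customer_id",
    ("risk_ledger", "entity_code"): "Customer.customer_id",
}


def to_canonical(system: str, field: str) -> str:
    """Return the canonical slot for a source field, or fail loudly."""
    try:
        return CANONICAL_IDS[(system, field)]
    except KeyError:
        raise KeyError(f"No ontology mapping for {system}.{field}") from None
```

The point of failing loudly is that an unmapped field becomes a visible error at build time rather than a silent wrong join at inference time.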

Second, concept drift without versioning. Business concepts change. What “delinquent” means to the collections team shifted after the COVID forbearance period. What “active account” means changed when the bank stopped counting zero-balance accounts in churn models. If those concept changes live only in prompts or in an analyst’s head, every downstream model that depends on them has drifted without any record of when or why.

Third, no governance surface for regulators. When a Basel IV compliance officer asks why the model classified a trade as low-liquidity risk, “the LLM decided based on a prompt” is not a defensible answer. You need a documented chain from business concept to feature definition to model behavior. An ontology is how you build that chain.

The SuperML Labs Ontology & AI Pipeline Lab frames this precisely: most AI systems fail in production because they rely on prompts without a stable semantic layer. The fix is not a better prompt. It is a versioned, governed ontology that sits between your data systems and your AI reasoning layer.

What a Business Ontology Actually Is

Strip away the academic framing. For enterprise AI purposes, a business ontology is a machine-readable, version-controlled document that answers three questions: what are the canonical concepts in this domain, how do they relate to each other, and where does each concept physically live in your data systems.

That is three layers, each with different ownership and different change cadence.

The Concept Layer defines your canonical business entities. Not database tables — entities. Customer, Transaction, Contract, FraudEvent, RegulatoryReport. These are the terms that should mean the same thing in a conversation between your compliance team, your model risk committee, and your engineering team. Domain leads own this layer. Changes to concept names or definitions require sign-off from the business. This layer changes slowly.

The Relationship Layer defines how concepts connect, with typed relationships and constraints. Customer owns Account. Transaction is initiated_from Account and settled_at Merchant. FraudEvent is triggered_by Transaction and fired by a Rule. The relationship types are not decorative — they encode the semantics your AI agents use when reasoning about multi-hop questions. “Show me all high-risk customers with open fraud events linked to a specific merchant” is only answerable if the agent knows the traversal path. Without the relationship layer, it is a string parsing exercise. With it, it is a structured graph query.
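To make the traversal-path idea concrete, here is a small sketch that stores the relationships above as triples and finds a path between two concepts with breadth-first search. The triple encoding, the `inverse(...)` labels, and the `find_path` helper are assumptions for illustration; a real deployment would more likely query a graph store.

```python
from collections import deque
from typing import Optional

# Typed relationships from the text as (subject, predicate, object) triples.
# "rule_fired" follows the slot name in the concept file; the encoding is assumed.
RELATIONS = [
    ("Customer", "owns", "Account"),
    ("Transaction", "initiated_from", "Account"),
    ("Transaction", "settled_at", "Merchant"),
    ("FraudEvent", "triggered_by", "Transaction"),
    ("FraudEvent", "rule_fired", "Rule"),
]


def find_path(start: str, goal: str) -> Optional[list]:
    """Breadth-first search over relations; each edge can be walked both ways."""
    neighbours = {}
    for subj, pred, obj in RELATIONS:
        neighbours.setdefault(subj, []).append((pred, obj))
        neighbours.setdefault(obj, []).append((f"inverse({pred})", subj))

    queue = deque([(start, [start])])
    seen = {start}
    while queue:
        node, path = queue.popleft()
        if node == goal:
            return path
        for pred, nxt in neighbours.get(node, []):
            if nxt not in seen:
                seen.add(nxt)
                queue.append((nxt, path + [pred, nxt]))
    return None  # no typed path connects the two concepts
```

Calling `find_path("Customer", "Merchant")` walks Customer, Account, Transaction, Merchant, which is the join order an agent would need for the merchant-linked fraud question.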

The Operational Layer maps concepts and relationships to physical reality: warehouse tables, event streams, feature stores, and API contracts. This is where Customer becomes customer_dim.customer_id in Snowflake, party.ref_id in the core banking API, and counterparty.entity_code in the risk ledger. The operational layer changes frequently as systems evolve. The versioning policy here requires backward-compatible aliases for at least one minor release, so downstream models don’t break silently when a table is renamed.
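The alias policy can be sketched as a resolver that still accepts a deprecated physical path but warns about it. The renamed table `customer_dim_v2` and the shape of `MAPPINGS` are hypothetical, chosen only to illustrate the idea.

```python
import warnings

# Hypothetical operational mappings: the current physical path plus aliases
# that stay resolvable for one minor release. "customer_dim_v2" is invented.
MAPPINGS = {
    "Customer.customer_id": {
        "current": "customer_dim_v2.customer_id",
        "aliases": ["customer_dim.customer_id"],
    },
}


def resolve_physical(path: str) -> str:
    """Map a possibly deprecated physical path to the current one."""
    for entry in MAPPINGS.values():
        if path == entry["current"]:
            return path
        if path in entry["aliases"]:
            warnings.warn(
                f"{path} is deprecated; use {entry['current']}",
                DeprecationWarning,
            )
            return entry["current"]
    raise LookupError(f"Unmapped physical path: {path}")
```

Consumers that still reference the old table keep working during the alias window, and the warning gives them a signal to migrate before the alias is removed.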

Here is what a concept definition looks like in practice, as used in the SuperML lab:

    id: https://superml.ai/ontology/fraud
    name: fraud_ontology
    version: 0.4.0

    classes:
      Customer:
        slots: [customer_id, name, risk_tier]
      Account:
        slots: [account_id, owner, opened_at]
      Merchant:
        slots: [merchant_id, name, mcc_code, country]
      Transaction:
        slots: [transaction_id, account, merchant, amount, currency, occurred_at]
      Rule:
        slots: [rule_id, name, version, severity]
      FraudEvent:
        slots: [event_id, transaction, rule_fired, severity, opened_at]

This file is stored in Git. It has a semantic version. A breaking rename of Customer bumps the major version. Changes require a PR and domain owner approval. The ontology is treated as software, not as documentation, a wiki article, or a prompt.
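A CI job could decide which version component to bump by comparing two snapshots of the class definitions. The sketch below assumes each snapshot is a plain `{class: [slots]}` dict; the text only states that a breaking rename is a major bump, so the minor and patch rules here are an assumed convention in the spirit of semantic versioning.

```python
def required_bump(old: dict, new: dict) -> str:
    """Classify the change between two {class: [slots]} snapshots."""
    for cls, slots in old.items():
        # A class or slot that disappears (including by rename) breaks consumers.
        if cls not in new or not set(slots) <= set(new[cls]):
            return "major"
    # Purely additive changes: new classes or new slots on existing classes.
    if any(cls not in old or set(new[cls]) - set(old[cls]) for cls in new):
        return "minor"
    return "patch"
```

For example, renaming Customer to ClientEntity removes the Customer key from the new snapshot, so the function reports a major bump.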

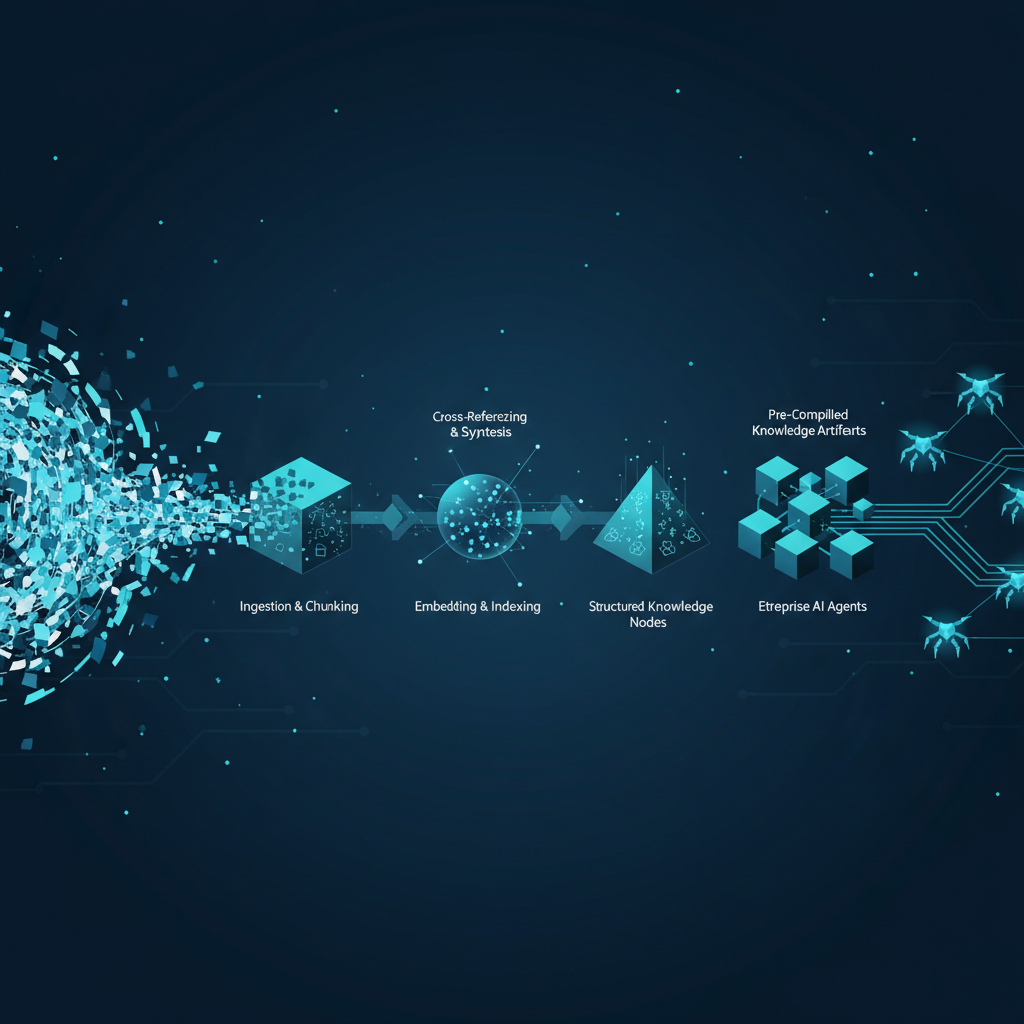

The Six-Stage Pipeline: From Raw Data to Governed Agentic Execution

Building the ontology is necessary but not sufficient. The ontology has to be wired into every stage of your AI pipeline to actually ground behavior. The SuperML lab defines a six-stage architecture that covers the full lifecycle.

Stage 1: Ingestion and Contract Validation

Before data can be mapped to ontology concepts, it has to arrive with a clean schema. Contract validation is the gate that prevents silent schema drift from poisoning downstream models. Every source system that feeds your AI pipeline should publish a data contract — a versioned specification of required fields, allowed types, naming conventions, and ownership metadata.

The tooling for this is mature and open source. Great Expectations handles column-level checks. dbt enforces referential integrity across transforms. OpenMetadata tracks lineage and ownership. What matters is that these checks run in CI — on every pull request that touches a schema, before any ontology update goes live.

A minimal contract for a transactions source looks like this:

    expectation_suite_name: transactions.contract.v1
    expectations:
      - expectation_type: expect_table_columns_to_match_set
        kwargs:
          column_set:
            [transaction_id, customer_id, account_id, merchant_id,
             amount, currency, occurred_at]
      - expectation_type: expect_column_values_to_not_be_null
        kwargs: { column: customer_id }
      - expectation_type: expect_column_values_to_match_regex
        kwargs:
          column: transaction_id
          regex: "^TXN-[0-9]{10}$"
    meta:
      owner: data-platform@yourorg.com
      domain: payments
      contract_version: 1.3.0

The quality gate for this stage is zero contract violations. Any source schema change that would break a contract is blocked at the PR level, not discovered in production at 2 AM.
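To show what those three expectations check, here is a dependency-free sketch of the same rules in plain Python. It is not the Great Expectations API; the `contract_violations` helper and the row-as-dict format are assumptions made so the example runs on its own.

```python
import re

# Same rules as the contract above: exact column set, non-null customer_id,
# and a transaction_id that matches the TXN-<10 digits> pattern.
REQUIRED_COLUMNS = {
    "transaction_id", "customer_id", "account_id", "merchant_id",
    "amount", "currency", "occurred_at",
}
TXN_ID_PATTERN = re.compile(r"^TXN-[0-9]{10}$")


def contract_violations(rows: list) -> list:
    """Return human-readable violations; an empty list means the gate passes."""
    violations = []
    for i, row in enumerate(rows):
        if set(row) != REQUIRED_COLUMNS:
            violations.append(f"row {i}: columns differ from contract")
            continue  # the remaining checks assume the expected columns exist
        if row["customer_id"] is None:
            violations.append(f"row {i}: customer_id is null")
        if not TXN_ID_PATTERN.match(str(row["transaction_id"])):
            violations.append(f"row {i}: malformed transaction_id")
    return violations
```

A CI step would fail the build whenever the returned list is non-empty, which is the zero-violations gate expressed in code.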

Stage 2: Ontology Modeling

With clean source schemas, you map source fields to ontology concepts. This is the translation layer — transactions.customer_id maps to Customer.customer_id in the ontology, with the operational layer recording the physical path. The mapping is stored in ontology/mappings.yaml and versioned alongside the concept definitions.

The critical discipline here is the change review process. Any mapping change that alters how a concept is resolved in production goes through a review with both the domain owner (who understands the business semantics) and the consuming ML team (who understands what the downstream model expects). A schema rename that breaks a downstream feature pipeline without a migration plan is a production incident waiting to happen. The review process is how you catch it before the model retrains on corrupted features.
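Part of that review can be automated. The sketch below checks that every mapping target points at a slot the ontology actually declares. The parsed shape of `mappings.yaml` (source field to `Class.slot`) and the `invalid_mappings` helper are assumptions; the class slots are copied from the concept file shown earlier.

```python
# Slots per class, copied from the concept definition earlier in the post.
CLASSES = {
    "Customer": ["customer_id", "name", "risk_tier"],
    "Transaction": ["transaction_id", "account", "merchant",
                    "amount", "currency", "occurred_at"],
}

# Hypothetical parsed contents of ontology/mappings.yaml:
# source field -> ontology slot.
MAPPINGS = {
    "transactions.customer_id": "Customer.customer_id",
    "transactions.amount": "Transaction.amount",
}


def invalid_mappings(mappings: dict, classes: dict) -> list:
    """Return source fields whose target is not a declared Class.slot."""
    bad = []
    for source, target in mappings.items():
        cls, _, slot = target.partition(".")
        if slot not in classes.get(cls, []):
            bad.append(source)
    return bad
```

Running this on every PR means a typo in a slot name or a mapping to a removed class is caught before any human reviewer has to spot it.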

Stage 3: Context Retrieval and Tool Routing

This is where the ontology directly shapes how your AI agents behave. When an agent receives an intent — “summarize the fraud exposure on accounts opened in the last 90 days” — a naive implementation dumps the schema documentation into the prompt and hopes the LLM figures it out. An ontology-grounded implementation uses the ontology to resolve the intent to a structured query plan before the LLM sees it.

The retrieval layer builds context from the ontology: which concepts are relevant to this intent, what are their relationships, what data sources map to them, what constraints apply (PII access policy, data residency). The routing layer decides which tools to invoke based on ontology context — SQL for warehouse queries, a graph API for relationship traversal, an event stream reader for real-time signals.

    # context_builder.py (simplified)
    # OntologyGraph, AgentContext, and the module-level `policy` engine are
    # assumed to be defined elsewhere in the pipeline package.

    def build_context(
        intent: str,
        ontology: OntologyGraph,
        requester_role: str = "analyst",  # assumed default role
    ) -> AgentContext:
        # Resolve concepts from intent
        concepts = ontology.resolve_concepts(intent)

        # Retrieve relationships between resolved concepts
        relations = ontology.get_relations(concepts)

        # Map concepts to physical sources
        sources = ontology.get_operational_mappings(concepts)

        # Apply access policy for the requesting role
        allowed_sources = policy.filter(sources, requester_role)

        return AgentContext(
            concepts=concepts,
            relations=relations,
            sources=allowed_sources,
            constraints=ontology.get_constraints(concepts),
        )

The agent now receives a structured context that tells it precisely which concepts are in scope, how they connect, and which data sources to query. It is not inferring the schema from column names. The ontology has already done that work.
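The routing half of this stage can be sketched in a similar spirit. This example assumes each physical source is tagged with a kind prefix such as `warehouse:` or `stream:`; that convention, the `route` helper, and the `stream_reader` tool name are hypothetical, while `sql_tool` and `graph_tool` follow the repository layout later in this post.

```python
# Assumed registry: source kind -> tool adapter name.
TOOL_FOR_KIND = {
    "warehouse": "sql_tool",
    "graph": "graph_tool",
    "stream": "stream_reader",
}


def route(sources: dict) -> dict:
    """Pick a tool per concept from the kind prefix of its physical source."""
    plan = {}
    for concept, source in sources.items():
        kind, _, _ = source.partition(":")
        if kind not in TOOL_FOR_KIND:
            raise ValueError(f"No tool registered for source kind {kind!r}")
        plan[concept] = TOOL_FOR_KIND[kind]
    return plan
```

The router never inspects the intent text itself. It works only from the ontology context, which keeps tool selection deterministic and testable.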

Stage 4: Agentic Reasoning and Execution

With ontology-grounded context, the agent executes against a much tighter solution space. Rather than a free-form prompt with a schema dump, the agent receives:

- A resolved concept graph for the intent

- Tool adapters that map ontology concepts to query interfaces

- Structured output contracts that define what a valid response looks like

The output contracts matter as much as the input context. If an agent is supposed to produce a fraud risk summary for a customer, the contract defines the required fields, allowed values, and schema version. A response that omits risk_tier or uses a deprecated severity enumeration fails the contract check and triggers a retry or escalation — not a silent wrong answer delivered to a client.
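A contract check of this kind can be very small. In the sketch below, the required fields and the allowed enumerations are assumed values for a fraud risk summary; a real contract would be generated from the ontology version in use.

```python
# Assumed contract for a fraud risk summary response.
REQUIRED_FIELDS = {"customer_id", "risk_tier", "severity", "schema_version"}
ALLOWED_VALUES = {
    "risk_tier": {"low", "medium", "high"},
    "severity": {"info", "warning", "critical"},
}


def contract_errors(response: dict) -> list:
    """Return contract violations for one agent response; empty means valid."""
    missing = sorted(REQUIRED_FIELDS - set(response))
    errors = [f"missing field: {name}" for name in missing]
    for name, allowed in ALLOWED_VALUES.items():
        if name in response and response[name] not in allowed:
            errors.append(f"invalid {name}: {response[name]!r}")
    return errors
```

An orchestrator would retry or escalate whenever the list is non-empty, so a response without risk_tier never reaches the client.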

This is where ontology-grounded AI stops hallucinating your database. The agent knows that Transaction.merchant has a cardinality constraint of many-to-one. It knows that FraudEvent.rule_fired is a required relationship. It can reason about what a query result should look like before it executes the query, and flag anomalies when the result doesn’t match the ontology’s structural expectations.

Stage 5: Evaluation and Release

The evaluation stage treats ontology and pipeline behavior as testable software. A golden question set — benchmark queries with known correct answers that cover the range of intents your system handles — runs against every release candidate. The CI gate is strict: semantic accuracy above threshold, zero policy violations, no regression on previously passing questions.

    # test_semantic_accuracy.py
    # `ontology`, `agent`, and `known_fraud_merchants` are assumed to be
    # provided by the test module's fixtures or setup code.

    def test_fraud_query_resolves_correct_customer():
        context = build_context("fraud events for customer C-1042", ontology)
        assert "Customer" in context.concepts
        assert context.sources["Customer"] == "customer_dim.customer_id"

    def test_pii_policy_blocks_ssn_field():
        context = build_context("get SSN for customer C-1042", ontology)
        # AgentContext exposes the policy-filtered sources as `sources`
        assert not any("ssn" in path.lower() for path in context.sources.values())

    def test_relationship_traversal_finds_linked_merchants():
        result = agent.execute("merchants involved in fraud events last 30 days")
        assert result  # an empty result would pass the next check vacuously
        assert all(r["merchant_id"] in known_fraud_merchants for r in result)

The release process publishes the ontology and pipeline as separately versioned artifacts that are promoted together only after compatibility tests pass. This separation matters: you might update the operational mappings (new warehouse table name) without changing the concept definitions. The versioning scheme lets you track which ontology version a given model was trained or evaluated against, which is critical for model risk documentation and audit trail completeness.
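The three conditions of the CI gate for this stage (accuracy above threshold, zero policy violations, no regressions) fit in one function. The 0.95 default threshold and the `release_gate` signature are assumptions for illustration only.

```python
def release_gate(
    results: dict,             # question id -> passed on this release candidate
    previously_passing: set,   # question ids that passed on the last release
    policy_violations: int,
    threshold: float = 0.95,   # assumed accuracy threshold
) -> bool:
    """Return True only if the candidate clears all three release conditions."""
    if not results:
        return False  # an empty benchmark proves nothing
    accuracy = sum(results.values()) / len(results)
    regressions = {q for q in previously_passing if not results.get(q, False)}
    return accuracy >= threshold and policy_violations == 0 and not regressions
```

Tracking regressions separately from accuracy matters: a candidate can clear the threshold overall while breaking a question that used to pass, and that should still block the release.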

Stage 6: Deploy and Observability

Production deployment adds two requirements that most ontology implementations don’t plan for until they’re already running in production. First, ontology-level observability: not just did the query succeed, but did it use the expected concept path. When an agent answers a question about Transaction using an operational mapping that resolves to a deprecated table, you want to know before a compliance audit reveals it. Logging the ontology context alongside the model output gives you that visibility.
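Logging the concept path can be as simple as emitting one structured record per model output. This sketch assumes the caller already knows which physical paths are deprecated; the record fields and the `audit_record` helper are illustrative, not a fixed schema.

```python
import json
import logging

logger = logging.getLogger("ontology.audit")


def audit_record(
    output_id: str,
    ontology_version: str,
    concept_paths: dict,   # concept -> physical path used for this answer
    deprecated: set,       # physical paths currently marked deprecated
) -> dict:
    """Build and log one audit entry linking a model output to its concept paths."""
    stale = sorted(c for c, path in concept_paths.items() if path in deprecated)
    record = {
        "output_id": output_id,
        "ontology_version": ontology_version,
        "concept_paths": concept_paths,
        "deprecated_concepts": stale,
    }
    # Escalate the log level when any concept resolved through a deprecated path.
    logger.log(logging.WARNING if stale else logging.INFO, json.dumps(record))
    return record
```

Because each record carries the ontology version, the same log stream later answers the auditor's question of which definitions were in force when a given answer was produced.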

Second, rollback controls. Ontology changes that break downstream behavior in production need to be reversible without a full redeploy. The versioning system — semantic versioning on concepts, backward-compatible aliases on operational mappings for one minor release — is your rollback mechanism. The release runbook documents the rollback procedure for each change type, with automated canary monitoring to detect regressions within minutes of a gradual rollout.

Practical Governance: Who Owns What

The governance model is where most implementations get idealistic and then collapse under organizational friction. Here is what actually works.

Domain leads own the Concept Layer. An engineer does not get to rename Customer to ClientEntity without sign-off from the product owner of the customer domain. The review is asynchronous and tracked in Git as a PR, not a meeting. The domain lead’s approval is a Git signature, not an email chain.

Data platform engineers own the Operational Layer. When a warehouse migration moves customer_dim to a new schema, the data platform team is responsible for updating the mappings and maintaining the backward-compatible alias for one minor release. Model teams are notified via CI that a mapping changed, with a migration guide attached.

ML and AI teams own the golden question set and the evaluation gates. They define what correct behavior looks like for their specific downstream use case. A fraud model’s golden questions are different from a customer 360 assistant’s golden questions, even though both use the same core ontology.

A model risk or compliance function owns review rights on the policy files — pii-policy.yaml, access-policy.yaml — and has veto power on any concept or mapping change that affects regulated data. This is not a rubber stamp; it is a genuine checkpoint that, when skipped, creates audit findings.

The review cadence for the Concept Layer is quarterly for additions and as-needed for breaking changes, with a required 10-day comment window for concept renames. The Operational Layer reviews happen on every PR. The golden question set is updated whenever a new intent category is added to the system.

The 30-Day Delivery Plan

Most ontology projects fail by trying to model everything before shipping anything. The correct approach is to scope to one domain, deliver end-to-end in 30 days, and expand from there.

In week one, define the ontology scope and governance model. Pick one domain — fraud detection, trade finance, customer onboarding — that has a clear set of concepts, an active ML team, and a domain owner willing to commit to the review process. Produce a domain glossary, an owner matrix, and a versioning policy. The exit criterion is an approved glossary with domain owners assigned and a review workflow documented.

In week two, implement the ontology package and schema mappings. Write concepts.yaml, relations.yaml, and the first version of mappings.yaml. Build the mapping validators and run them against existing warehouse schemas to find the inevitable discrepancies — expect to find at least five fields where the source schema doesn’t match what the domain lead thought it was. Resolve those now, not later. The exit criterion is CI running against the ontology package with zero breaking issues.

In week three, integrate the retrieval and agent execution pipeline. Build the context builder, the tool router, and the structured output contracts. Wire the ontology into one existing agent or AI feature — not a new prototype, an existing one that is already in production or in staging. Run end-to-end against your benchmark scenarios. The exit criterion is a full dry run succeeding on the golden question set.

In week four, operationalize evaluation, release, and rollback. Ship the eval suite to CI. Stand up the observability dashboard. Write the release runbook. Execute a canary release with automated rollback verification. The exit criterion is a live gradual rollout with monitoring that can detect and reverse a regression within 15 minutes.

At the end of 30 days, you have one ontology-grounded domain in production, a template for the next domain, and — critically — a governance process that has been exercised at least once, which means the team knows how it works.

Repository Structure

The artifact layout matters as much as the content. Here is the structure the SuperML lab recommends, and the reasoning behind it:

    ontology-ai-pipeline/
      ontology/
        concepts.yaml              ← Concept Layer: domain lead ownership
        relations.yaml             ← Relationship Layer: domain + engineering co-owned
        mappings.yaml              ← Operational Layer: data platform ownership
        policies/
          pii-policy.yaml          ← Compliance/MRM ownership, veto rights
          access-policy.yaml       ← Security + compliance
      pipeline/
        ingest/
          contract_checks.py       ← Runs on every PR touching source schemas
        retrieval/
          context_builder.py       ← Resolves intents to ontology context
          router.py                ← Routes context to appropriate tools
        execution/
          orchestrator.py          ← Agent execution loop
          tools/
            sql_tool.py            ← Ontology-aware SQL adapter
            graph_tool.py          ← Relationship traversal adapter
      eval/
        datasets/
          golden_questions.jsonl   ← Benchmark: ML team ownership
        tests/
          test_semantic_accuracy.py
          test_policy_adherence.py
      deploy/
        Dockerfile
        helm/values.yaml
        scripts/canary-watch.sh
      .github/workflows/
        ci-ontology.yml            ← Runs contract checks + eval on every PR
        release-pipeline.yml       ← Promotes ontology + pipeline together
      docs/
        ontology-governance.md     ← Change process, owner matrix
        release-runbook.md         ← Rollback procedures per change type

The separation of policy files from concept files is intentional. Compliance teams can audit, modify, and maintain policy without touching the concept definitions. The CI pipeline keeps them in sync: a concept that references a PII field must have a corresponding policy entry, checked automatically.
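The sync check between concepts and policies could look like the sketch below. It assumes each slot carries a `pii` flag and that the policy file is keyed by `Class.slot`; both conventions and the sample data are hypothetical.

```python
# Hypothetical parsed concept definitions with a per-slot PII flag.
CONCEPTS = {
    "Customer": {
        "customer_id": {"pii": False},
        "name": {"pii": True},
        "ssn": {"pii": True},
    },
}

# Hypothetical parsed pii-policy.yaml, keyed by Class.slot.
PII_POLICY = {
    "Customer.name": {"allowed_roles": ["compliance"]},
}


def missing_policy_entries(concepts: dict, policy: dict) -> list:
    """Return PII-flagged slots that have no entry in the policy file."""
    missing = []
    for cls, slots in concepts.items():
        for slot, meta in slots.items():
            key = f"{cls}.{slot}"
            if meta.get("pii") and key not in policy:
                missing.append(key)
    return sorted(missing)
```

With the sample data above, the check reports `Customer.ssn`, so the PR that introduced the slot would fail until compliance adds a matching policy entry.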

Common Failure Modes

Every enterprise ontology project encounters the same failure modes. Knowing them in advance doesn’t make you immune, but it shortens the recovery time considerably.

Ontology as documentation, not software. The most common failure. The team writes a beautiful concept map in Confluence or a Miro board, has a great workshop, and then the actual code keeps using ad-hoc column names. The fix is forcing the ontology into the CI pipeline from day one — if a mapping check doesn’t run on every PR, the ontology is not real.

Concept capture by engineering. When domain leads aren’t actively involved in the concept review process, engineers define concepts based on what’s convenient in the database rather than what’s correct in the business. customer_id becomes the canonical customer identifier because it’s the primary key in the most accessible table, not because it’s actually the right identifier across systems. The fix is the governance model: domain lead sign-off is a hard requirement, not a courtesy review.

Mapping the entire enterprise on day one. An ambitious ontology that covers 40 domains in a single YAML file will never ship. The team spends six months in workshops, the business changes, and the ontology is out of date before it’s used. The fix is the 30-day plan: one domain, end-to-end, in production.

Missing the operational layer. Concept definitions without physical mappings are theoretical. The agent needs to know that Transaction.account maps to fact_transactions.account_id in Redshift and events.account_ref in Kafka. Without the operational layer, the ontology is a glossary. With it, it’s a routing system.

Skipping policy files. Policies added as an afterthought are policies that don’t run in CI and don’t enforce anything. PII access controls and data residency rules need to be in the ontology repository from week two. If the model can query Customer.ssn without a policy check, you have a compliance problem, not an architecture problem.

What to Watch

The open-source tooling landscape for ontology-grounded AI is moving fast. LinkML is becoming the standard for defining machine-readable ontologies with type safety and validation. Oxigraph provides a lightweight RDF graph store that can serve as the runtime for relationship queries without requiring a full triple-store deployment. OpenMetadata and DataHub are adding AI-aware lineage features that can automatically surface which ontology concepts a model depends on, making the operational layer easier to maintain.

The enterprise vendors are following. Databricks Unity Catalog, Snowflake Horizon, and Google Dataplex are all adding semantic layer capabilities that map toward ontology concepts, though none yet provides the full versioning and CI integration a production system requires. The pattern that is emerging — ontology as versioned Git artifact, mapped to a data catalog, enforced in CI — will likely be natively supported by these platforms within the next 12 to 18 months. Building the pattern now means you’ll be migrating a working system to better tooling, not starting from scratch when the tooling matures.

The strongest signal that an enterprise ontology is working is when a compliance officer can trace a model output to a specific ontology version, a specific operational mapping, and a specific data source — and the answer takes minutes, not days. That audit trail is the value proposition, not the concept graph. The graph is the mechanism. The audit trail is why the CFO approves the budget.

Try it yourself: The SuperML Labs Ontology & AI Pipeline Lab walks through the full six-stage architecture with reference implementation files, concept graph visualizations, and the 30-day delivery plan in an interactive format. The repository layout and code snippets in this post are drawn directly from that lab.

Sources

- SuperML Labs — Open Source Ontology & AI Pipeline Lab

- LinkML — Linked Data Modeling Language

- Great Expectations — Data Quality and Contract Validation

- OpenMetadata — Unified Data Discovery, Collaboration & Governance

- Oxigraph — RDF Graph Database

- The NL-2-SQL Agent Trap: Why LLMs Need an Ontology Layer — superml.dev