The 85% Problem: Agentic AI Has Outrun the Data Infrastructure It Needs to Survive Production

Fivetran's 2026 Agentic AI Readiness Index reveals that 85% of enterprises are running agent workloads on data foundations that aren't ready — and in banking, where finance teams grew agentic AI adoption 600% year-over-year, the gap between deployment and data readiness is now a model risk surface.


There’s a version of the enterprise AI story where all the hard problems have been solved. The model is good. The agent orchestration is dialed in. The governance framework is on paper. And then the agent calls a data pipeline that was last refreshed six hours ago, reasons confidently over stale customer account state, and takes a consequential action that no one in the building can explain.

That’s the story Fivetran’s 2026 Agentic AI Readiness Index is actually telling, even though the press release framing made it sound like a vendor pitch. Survey 400 data professionals across the US, UK, EMEA, and Asia-Pacific — people who build and operate the data infrastructure that AI runs on — and ask them whether their organizations are ready to support agentic AI in production. Only 15% say yes. The rest are running agents, or planning to, on data foundations that by their own assessment are not fit for the purpose.

This is not a pilot problem. Forty-one percent of organizations in the survey are already using agentic AI in production. They didn’t wait for readiness. They shipped anyway.

In banking, that gap is more compressed and more consequential than in almost any other vertical. Finance teams grew agentic AI adoption from roughly 7% in January 2025 to 44% in Q1 2026, a roughly sixfold jump (cited as 600% year-over-year growth) that is, by almost any measure, a deployment sprint. The data infrastructure question got deferred. Now it’s a production reality.

Why Data Readiness Is the Wrong Framing for the Right Problem

The index measures organizations on four dimensions: data freshness, lineage, governance, and interoperability. It reports an average readiness score of 61–62% across respondents, meaning the typical organization, including many that think they’re doing reasonably well, still falls short on roughly four out of ten criteria that production agent workloads depend on.

The problem with calling this a “readiness” issue is that it implies a preparation step that happens before deployment. That’s not what’s occurring. The 41% who are already in production didn’t have a readiness ceremony and get cleared. They deployed agents on top of whatever data infrastructure they had, because the business pressure to ship was louder than the engineering pressure to fix.

The top barriers cited in the index are instructive. Data quality and lineage tops the list at 42% — not “our model is too slow” or “our orchestration layer has bugs.” Regulatory compliance and sovereignty comes in at 39%. Security and privacy at 39%. These are not model problems. They are data engineering problems that the industry has been nominally working on since the data lake era and that still aren’t solved for the more demanding workloads that agents represent.

A traditional analytics pipeline can tolerate T-1 data. A dashboard that was last refreshed yesterday morning is good enough for a BI analyst pulling a weekly report. An AI agent that makes a credit decision, triggers a transaction monitoring alert, or routes a customer escalation based on T-1 data is a different situation entirely. The latency requirements are different, the lineage requirements are different, and the governance implications are fundamentally different because the agent acts; it doesn’t just display.
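One way to make that difference concrete is a per-workload staleness budget. The sketch below is illustrative only: the workload names, the one-day and one-minute budgets, and the `is_fresh_enough` helper are assumptions for this example, not anything defined by the index.

```python
from datetime import datetime, timedelta, timezone

# Hypothetical staleness budgets per workload type; real values depend on the use case.
MAX_AGE = {
    "bi_report": timedelta(days=1),        # T-1 data is acceptable for a weekly report
    "agent_action": timedelta(minutes=1),  # an agent that acts needs near-current state
}


def is_fresh_enough(last_refreshed: datetime, workload: str, now: datetime) -> bool:
    """Return True if the data's age is within the budget for this workload."""
    return now - last_refreshed <= MAX_AGE[workload]


now = datetime(2026, 5, 20, 12, 0, tzinfo=timezone.utc)
six_hours_old = now - timedelta(hours=6)

print(is_fresh_enough(six_hours_old, "bi_report", now))     # True
print(is_fresh_enough(six_hours_old, "agent_action", now))  # False
```

The same six-hour-old snapshot passes for the report and fails for the agent, which is the whole point: freshness is a property of the consumer, not just the pipeline.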

The Finance and Banking Data Exposure

The 600% growth number in finance AI adoption deserves more attention than it’s getting, because it’s happening in a sector where data latency and lineage aren’t best practices — they’re regulatory obligations.

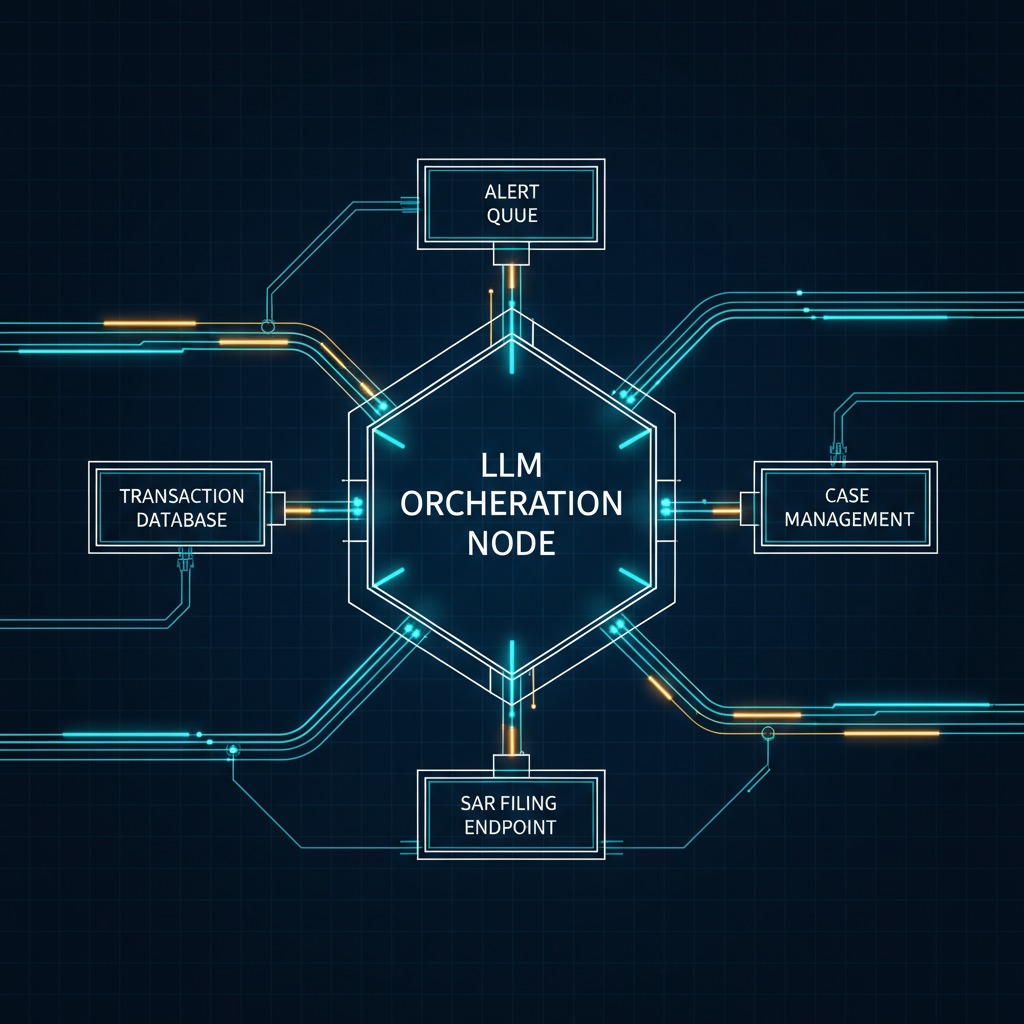

Consider what an AML monitoring agent is actually doing when it investigates a transaction alert. It’s pulling customer account history, transaction velocity, correspondent banking relationships, watchlist status, and behavioral baselines. If any of those data sources are stale — if the account state the agent sees is an hour old, or the watchlist check is running against a batch update from this morning, or the transaction history has a replication lag that the agent doesn’t know about — the agent’s investigation is compromised. The conclusion it reaches, and the SAR it may or may not file, reflects a reality that no longer exists.

That’s not a data quality incident in the traditional sense. That’s a regulatory exposure. The OCC and Federal Reserve expect that suspicious activity reports reflect the best available information at the time of filing. If an agent files or suppresses a SAR based on stale data, the audit trail question isn’t “why did your model make that prediction?” It’s “what data did the agent actually see, and was it current?”

The lineage dimension of the Fivetran index is particularly sharp here. End-to-end data lineage — the ability to trace exactly what data state existed at the moment an agent made a decision — is what makes agent decisions auditable. Without it, banks have agents operating in production with no mechanism to reconstruct what the agent knew at the time. That’s the kind of gap that turns a routine model validation review into a remediation order.
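A minimal sketch of what "reconstructing what the agent knew" could look like in practice: a decision record that stores, for every input, which snapshot was read and how current it was. The class names, field names, and the idea that each pipeline exposes a `version` identifier are assumptions made for illustration; they do not describe any specific lineage product.

```python
import hashlib
import json
from dataclasses import asdict, dataclass
from datetime import datetime, timezone


@dataclass(frozen=True)
class SourceSnapshot:
    source: str   # logical data domain, e.g. "watchlist"
    version: str  # snapshot or batch identifier exposed by the pipeline (assumed)
    as_of: str    # ISO-8601 time the data was last refreshed


@dataclass(frozen=True)
class DecisionRecord:
    agent: str
    decision: str
    decided_at: str
    inputs: tuple  # tuple of SourceSnapshot, one per data source consulted

    def fingerprint(self) -> str:
        """Stable hash of the record so a later alteration is detectable."""
        payload = json.dumps(asdict(self), sort_keys=True)
        return hashlib.sha256(payload.encode("utf-8")).hexdigest()


record = DecisionRecord(
    agent="aml-investigator",  # hypothetical agent name
    decision="escalate",
    decided_at=datetime(2026, 5, 20, 12, 0, tzinfo=timezone.utc).isoformat(),
    inputs=(
        SourceSnapshot("account_state", "v1042", "2026-05-20T11:59:30+00:00"),
        SourceSnapshot("watchlist", "batch-2026-05-20", "2026-05-20T06:00:00+00:00"),
    ),
)
print(record.fingerprint()[:12])
```

With a record like this stored alongside each decision, the audit question "what data did the agent actually see, and was it current?" becomes a lookup instead of a forensic exercise.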

Credit agents face a similar structural problem. A lending agent that approves or declines a credit application needs to operate on account data, bureau data, and income verification that reflects the applicant’s current state, not a batch-refreshed snapshot from the previous night. The 39% compliance barrier in the readiness index isn’t abstract — it shows up as exactly this kind of use case where the gap between pipeline freshness and agent decision timing is the failure mode.

What Ready Organizations Actually Look Like

The 15% that the index considers fully prepared share three characteristics that are worth examining, because they’re less impressive than you might expect. They run always-on, automated data pipelines that keep information fresh and reliable. They enforce end-to-end lineage and governance. And they standardize on interoperable architectures that allow data to move across the infrastructure.

These are not frontier capabilities. Always-on pipelines are streaming infrastructure — Kafka, Flink, or their managed equivalents — that replaces batch ETL for high-priority data domains. End-to-end lineage is OpenLineage plus whatever catalog layer sits on top of it. Interoperability is avoiding vendor-specific data formats and APIs in critical pipelines. None of this is novel. The tooling has been production-ready for years.

What the 15% did differently is prioritize the data infrastructure investment alongside or before the agent deployment, rather than treating data engineering as a follow-on project. That sequencing difference is what separates organizations where agents work reliably in production from organizations where agents work in demos and produce intermittent, unexplainable failures in production.

The interesting footnote is Fivetran’s move to take over stewardship of the Great Expectations open-source community, announced on May 13, 2026. Great Expectations is a data quality validation framework that has been around since the late 2010s, and by taking over its governance, Fivetran is signaling that data quality validation is becoming a prerequisite for agent deployments, not a nice-to-have for analytics pipelines. When a data pipeline company absorbs the leading open-source data quality tool one week after publishing an index showing 85% of enterprises have data quality problems, the strategic intent is not subtle.

The SuperML Take

The framing of the Fivetran index as a “readiness gap” is technically accurate but strategically incomplete. The more important observation is that for the enterprise AI stack — model, orchestration, runtime governance, data — the data layer has been the most consistently underinvested, and it’s the layer that fails in ways that are hardest to detect and most consequential to act on.

Model failures tend to be visible. The model returns a wrong answer, a hallucination, a score that’s obviously off. The failure mode is legible to the people who built the system. Data failures in production agent workloads are frequently invisible. The agent doesn’t know that the account balance it’s reasoning about is six hours stale. The orchestration layer doesn’t know that the watchlist check ran against yesterday’s batch. The monitoring dashboard shows normal completion rates and normal latency. The failure only surfaces when someone traces a bad decision back through the audit trail and discovers that the data state the agent saw was different from the data state that was required for the decision to be correct.

That’s the 85% problem in its most dangerous form: not a broken system, but a system that looks healthy while producing results that are subtly, consistently wrong.

For enterprise AI teams building on top of agents in 2026, the production readiness question has shifted from “is the model good enough?” to “can we guarantee what data state the agent saw at decision time, and can we prove it to a regulator?” The second question requires a different investment profile — more streaming infrastructure, more lineage tooling, more data governance process — than the one most organizations have been making.

In banking specifically, the gap between the 41% who have deployed and the 15% who are fully ready is where the next wave of model risk management activity will land. Model risk teams at large banks have spent two years focused on SR 26-2 compliance, on validating LLM outputs, on building human-in-the-loop approval chains. The data infrastructure underneath those agents has been in the validation backlog. With 600% adoption growth in one year, it’s moving to the front of the queue whether the teams are ready or not.

The vendors who understand this are already positioning. Fivetran acquiring Great Expectations stewardship is the clearest signal yet. Databricks has been expanding Unity Catalog with agent-specific lineage features. Alation and Atlan both updated their catalog integrations to capture agent query context in the first half of 2026. The market has priced in that agents need better data infrastructure. The enterprises deploying those agents are still catching up.

Architecture Impact

What changes in system design? Agentic AI deployments require a data pipeline tier that operates at fundamentally different SLAs than analytics pipelines. Batch ETL that refreshes hourly or nightly is acceptable for BI workloads but creates a data-timeliness failure mode for agents that make decisions and take actions in real time. The architecture shift is from analytics-optimized pipelines (high throughput, acceptable latency) to agent-optimized pipelines (sub-minute freshness, guaranteed lineage, interoperability across data domains). This requires streaming infrastructure for high-priority data domains and a lineage capture mechanism that records the exact data snapshot each agent call consumed.

What new failure mode appears? Silent data drift — where an agent executes confidently on stale or incomplete data with no signal to the orchestration layer, monitoring system, or human supervisor that the underlying data context has degraded. Unlike model failures, which produce anomalous outputs, data-timeliness failures produce outputs that look normal and fall within expected ranges while being based on a data state that no longer reflects reality. In financial services, this is a compliance failure mode before it’s a quality failure mode.
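The missing signal can be sketched as a small status object that travels with every agent result, so the orchestration layer sees degraded data context even when the output itself looks normal. The function name, the SLA values, and the source names below are hypothetical.

```python
from datetime import timedelta


def data_context_status(source_ages: dict, sla: dict) -> dict:
    """Compare each source's age to its freshness SLA and report violations."""
    stale = sorted(
        name for name, age in source_ages.items()
        if age > sla[name]
    )
    return {"degraded": bool(stale), "stale_sources": stale}


# Hypothetical freshness SLAs and observed ages for two data sources.
sla = {"account_state": timedelta(minutes=1), "watchlist": timedelta(hours=1)}
ages = {"account_state": timedelta(seconds=20), "watchlist": timedelta(hours=6)}

status = data_context_status(ages, sla)
print(status)  # {'degraded': True, 'stale_sources': ['watchlist']}
```

An orchestrator could route any result with `degraded` set to a human reviewer or hold the action until the stale source catches up; which policy is right depends on the workload.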

What enterprise teams should evaluate:

- Data engineering teams: Audit which data domains are consumed by production agents and map their current pipeline refresh rates against the decision latency of the agent workloads consuming them. Any domain with batch refresh that feeds a real-time agent action is a silent failure candidate.

- ML platform and MLOps teams: Evaluate whether current lineage tooling captures agent-time data snapshots, not just training-time data provenance. Agent decisions need point-in-time lineage, not lineage from the model build.

- Model risk and compliance teams (banking/finance): Assess whether current MRM frameworks have a data-freshness gate for agent deployments. SR 26-2 covers model inputs broadly, but explicit data-timeliness validation for agent workloads is absent from most validation frameworks — and regulators are starting to ask about it.

- Security and data governance teams: Evaluate data access patterns for agents — which agents are reading which data domains, under what identity, and with what freshness guarantees. Agentic AI substantially expands the blast radius of a data governance failure.
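The data engineering audit in the first item above can start as something very simple: list each data domain an agent consumes, record how often it refreshes, and compare that with how current the agent needs it to be. The `Feed` structure and the example values are assumptions for illustration.

```python
from dataclasses import dataclass
from datetime import timedelta


@dataclass
class Feed:
    domain: str
    refresh_interval: timedelta       # how often the pipeline updates this domain
    agent_decision_window: timedelta  # how current the consuming agent needs the data


def silent_failure_candidates(feeds):
    """Return domains whose refresh cadence is slower than the agent's need."""
    return [f.domain for f in feeds if f.refresh_interval > f.agent_decision_window]


# Hypothetical inventory of domains feeding production agents.
feeds = [
    Feed("account_state", timedelta(seconds=30), timedelta(minutes=1)),
    Feed("watchlist", timedelta(hours=24), timedelta(hours=1)),
    Feed("bureau_data", timedelta(hours=12), timedelta(minutes=5)),
]

print(silent_failure_candidates(feeds))  # ['watchlist', 'bureau_data']
```

Even a spreadsheet-level version of this inventory tends to surface the batch-fed domains that nobody realized an agent was acting on.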

Cost / latency / governance / reliability implications: Upgrading analytics pipelines to agent-grade freshness (sub-minute, always-on) typically costs 3–5x more to operate than the batch pipelines they replace, driven primarily by streaming compute and storage write amplification. The lineage overhead for point-in-time agent snapshot capture adds roughly 10–20% to pipeline operating costs depending on data volume. In regulated environments, the governance cost of not doing this (audit remediation, regulatory inquiry response, potential enforcement action) is difficult to quantify upfront but can easily exceed the infrastructure investment. The 39% of organizations citing regulatory compliance as a top barrier have already implicitly estimated this tradeoff and decided to defer it. Production failure events tend to reverse that calculation quickly.
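To see what those multipliers imply, here is a back-of-the-envelope calculation. The 10,000 monthly baseline is a made-up figure, and the sketch assumes the 10–20% lineage overhead applies on top of the upgraded streaming cost, which is one reading of the estimate above.

```python
def agent_grade_cost_range(batch_cost: int) -> tuple:
    """Return (low, high) operating cost after the streaming upgrade plus lineage capture.

    Uses the 3-5x streaming multiplier and 10-20% lineage overhead quoted in the text.
    Integer arithmetic avoids floating-point rounding noise in the result.
    """
    low = batch_cost * 3 * (100 + 10) // 100
    high = batch_cost * 5 * (100 + 20) // 100
    return low, high


# Hypothetical baseline: a batch pipeline costing 10,000 per month to operate.
print(agent_grade_cost_range(10_000))  # (33000, 60000)
```

Under these assumptions a pipeline that costs 10,000 a month in batch form lands somewhere between 33,000 and 60,000 once it is agent-grade, which is why teams tend to upgrade only the data domains that agents actually act on.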

What to Watch

The Fivetran index establishes a baseline that will be measured against outcomes in H2 2026. Watch for production failures in enterprise agent deployments that trace back to data-timeliness issues rather than model failures — these will be the first empirical evidence that the 85% gap is a real operational risk, not just a survey finding.

Regulators at the OCC, the Federal Reserve, and EU supervisory bodies have been building agent-specific examination guidance throughout 2026. The gap between the existing SR 26-2 framework and what’s needed for real-time agent workloads, specifically around data input validation and point-in-time lineage, is one of the cleaner areas where new guidance is likely to land.

Fivetran’s stewardship of the Great Expectations open-source community positions it to define what “data quality for agents” means at a specification level. If it ships a formal agent-data validation specification that gets adopted by Databricks, Snowflake, and the major cloud data platforms, the readiness gap becomes auditable infrastructure rather than survey self-assessment. That’s a significant shift.

On the tooling side, watch for streaming pipeline products that bake in agent-specific features: point-in-time snapshot APIs, freshness SLA monitoring with agent-aware alerting, and lineage capture that distinguishes agent read patterns from analytics query patterns. Several startups in the data engineering space are building specifically for this workload in mid-2026, and the major platforms are adding capabilities at the catalog and governance layer.

Sources

- Fivetran 2026 Agentic AI Readiness Index — Official Report

- 85% of enterprises are running agentic AI on a data foundation that isn’t ready — Fivetran Blog

- Fivetran Launches 2026 Agentic AI Readiness Index — BusinessWire

- Agentic AI Pushes Banks to Fix Security, Data and Decision Rights — PYMNTS

- Banks Shift AI From Chatbots to Autonomous Money Movement — PYMNTS

- Fivetran to Become Steward of Great Expectations Open Source Community — BusinessWire

- Agentic AI Statistics 2026: 150+ Data Points Collection — Digital Applied

- Banking in 2026: Production Scale AI Agents — Fintech Futures
