The $40,000 Benchmark: When AI Evals Cost More Than Training, Enterprise Quality Gates Break

AI evaluation has crossed a cost threshold that fundamentally changes who can afford to verify what they're deploying. The Holistic Agent Leaderboard spent $40K on a single benchmark sweep — and that number reveals a structural crack in how enterprise teams govern production AI.

Here is the thing nobody tells you when you greenlight an AI agent for production: the leaderboard number that sold you on it was probably generated from a single run, with a single seed, using a scaffold that costs 9× more than the one your vendor will actually sell you. And even that number, which the team presenting the demo called “state of the art,” tells you nothing about how the agent behaves across 8 repetitions, across different input distributions, or when the tool-call environment fails 40% of the time, as messy enterprise environments can.

The EvalEval Coalition published a detailed accounting of AI evaluation costs in late April 2026, and the numbers should be pinned to every AI architecture review board’s wall. The Holistic Agent Leaderboard (HAL) — which is the most comprehensive standardized agent evaluation available — spent $40,000 running 21,730 rollouts across nine models and nine benchmarks. A single run of GAIA on a frontier model costs $2,829. A credible PaperBench evaluation runs $9,500. And if you want statistical reliability — meaning you run each cell eight times to understand variance, not just accuracy — the $40,000 aggregate becomes $320,000.
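The scaling in those figures is plain arithmetic, and a short sketch makes it easy to rerun with your own numbers. The constants below are the figures quoted above. The function names are illustrative, and the linear scaling with repetitions is an assumption made here: caching, retries, and rate limits can all move a real total.

```python
# Figures quoted above from the EvalEval Coalition's accounting of HAL.
HAL_TOTAL_USD = 40_000
HAL_ROLLOUTS = 21_730
REPEATS_FOR_RELIABILITY = 8


def average_cost_per_rollout(total_usd: float, rollouts: int) -> float:
    """Average spend per rollout across the whole sweep."""
    return total_usd / rollouts


def repeated_sweep_cost(single_sweep_usd: float, repeats: int) -> float:
    """Cost of repeating the sweep, ASSUMING cost scales linearly with repeats."""
    return single_sweep_usd * repeats


per_rollout = average_cost_per_rollout(HAL_TOTAL_USD, HAL_ROLLOUTS)
reliable_total = repeated_sweep_cost(HAL_TOTAL_USD, REPEATS_FOR_RELIABILITY)

print(f"average cost per rollout: ${per_rollout:.2f}")
print(f"8x repeated sweep:        ${reliable_total:,.0f}")
```

The average works out to about $1.84 per rollout, which hides a wide spread: a single GAIA run on a frontier model is quoted above at $2,829.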

Most enterprise teams have allocated zero of that to their quality gate.

The Static Benchmark Era Made Everyone Complacent

For years, AI evaluation looked cheap. The standard mental model was: training is expensive, inference is cheap-ish, and evaluation is basically free. You ran MMLU, computed a percentage, and called it state of the art. The entire field developed a false intuition about what verifying AI systems actually costs.

That intuition was already wrong before agents arrived. When Stanford released HELM in 2022, the per-model cost ran from $85 for a small model to $10,926 for AI21’s J1-Jumbo — and the full 30-model sweep cost roughly $100,000. IBM Research noted that running a single 13B model through HELM consumed up to 1,000 GPU hours. And that was for static question-answering, not for agents that make dozens of tool calls per task.

The saving grace was that static benchmarks could be compressed. A 2024 paper (tinyBenchmarks) showed that 100 carefully chosen anchor items from MMLU’s roughly 14,000-item set were enough to estimate a model’s score to within about 2% error. Flash-HELM cut compute by 100–200× without meaningfully changing the ordering. Academic groups got comfortable with the idea that evaluation was something you could approximate cheaply and still get a meaningful answer.

Agents broke that assumption completely.

The Agent Eval Doesn’t Compress

The key insight in the EvalEval report is about compression ratios. Static benchmarks compress 100–200× because model differences concentrate in small subsets of examples — the ranking survives aggressive subsampling because most examples are not discriminative. You can figure out which model is better without testing them on everything.

Agent benchmarks compress 2–3.5× at best. And training-in-the-loop benchmarks (where the evaluation protocol actually trains models from scratch) resist subsampling entirely because the unit being evaluated is the trained model, not a prediction on a fixed input.

Why don’t agents compress? Because each evaluation item is a multi-turn rollout with its own variance. Each run of an agent through a task involves dozens or hundreds of model calls, tool calls, state updates, and environmental interactions. The expensive object is the trajectory, not the question — and you can’t skip trajectories the way you can skip multiple-choice items.

The numbers make this viscerally clear. On Online Mind2Web, Browser-Use with Claude Sonnet 4 cost $1,577 for 40% accuracy. SeeAct with GPT-5 Medium hit 42%, slightly better, for $171. The more expensive configuration cost about 9× as much and scored 2 percentage points lower. A leaderboard that shows you only “40% accuracy” is hiding what each point cost.
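To put cost back next to accuracy, here is a minimal sketch that computes the cost ratio and the dollars spent per accuracy point for the two Online Mind2Web results above. The `Run` class and its field names are illustrative, not part of any leaderboard's schema, and "dollars per accuracy point" is just one convenient way to normalize.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Run:
    """One benchmark result: a scaffold/model pair, its cost, and its score."""
    name: str
    cost_usd: float
    accuracy_pct: float

    @property
    def usd_per_point(self) -> float:
        """Dollars spent per percentage point of accuracy."""
        return self.cost_usd / self.accuracy_pct


# Figures quoted in the paragraph above.
browser_use = Run("Browser-Use + Claude Sonnet 4", 1577.0, 40.0)
seeact = Run("SeeAct + GPT-5 Medium", 171.0, 42.0)

cost_ratio = browser_use.cost_usd / seeact.cost_usd

for run in (browser_use, seeact):
    print(f"{run.name}: ${run.usd_per_point:.2f} per accuracy point")
print(f"cost ratio: {cost_ratio:.1f}x")
```

On these figures the pricier run spends roughly $39 per accuracy point against roughly $4 for the cheaper one.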

The Reliability Problem Is Worse Than the Accuracy Problem

So far we’ve been talking about cost per run. But the deeper problem is that a single run is nearly meaningless for agents.

The HAL team documents this well. Agent performance on tau-bench can drop from 60% on a single run to 25% under 8-run consistency testing. That’s not a rounding error; that’s an agent that looks production-ready in a demo and falls apart under moderate repetition. Kapoor et al.’s “AI Agents That Matter” found that 7 of 17 benchmarks in common use had no holdout set, and among the 10 that did, only 5 held out tasks at an appropriate level of generality. Put together, 12 of the 17 (7 with no holdout, plus 5 with an inadequate one) failed the holdout criterion. The HAL team themselves discovered data leakage in the TAU-bench Few Shot scaffold: agents were passing tasks not because they were capable, but because the evaluation scaffolding was contaminated.

Meanwhile, “a do-nothing agent passes 38% of tau-bench airline tasks.” Thirty-eight percent.

The reliability solution is running evaluations multiple times with multiple seeds, and computing pass^k (the probability that an agent succeeds consistently across k attempts). HAL estimates that a statistically credible k=8 pass would take the $40,000 aggregate to $320,000. Nobody is doing that. Enterprise teams are buying single-seed accuracy numbers and calling it due diligence.
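For readers who want to compute pass^k from their own run logs, here is a minimal sketch. It assumes the combinatorial estimator as I understand it from the tau-bench paper: for a task with c successes in n attempts, the per-task estimate is C(c, k) / C(n, k), averaged over tasks. The function names and the example counts are hypothetical.

```python
from math import comb


def task_pass_hat_k(successes: int, trials: int, k: int) -> float:
    """Estimate the chance that k attempts drawn from `trials` ALL succeed."""
    if not 0 <= successes <= trials:
        raise ValueError("successes must be between 0 and trials")
    if not 1 <= k <= trials:
        raise ValueError("k must be between 1 and trials")
    # math.comb returns 0 when successes < k, so the estimate is 0 in that case.
    return comb(successes, k) / comb(trials, k)


def benchmark_pass_hat_k(successes_per_task: list[int], trials: int, k: int) -> float:
    """Average the per-task estimate across all tasks in the benchmark."""
    total = sum(task_pass_hat_k(c, trials, k) for c in successes_per_task)
    return total / len(successes_per_task)


# Hypothetical log: 4 tasks, 8 attempts each, number of successes per task.
successes = [8, 8, 6, 2]

print(benchmark_pass_hat_k(successes, trials=8, k=1))  # ordinary accuracy
print(benchmark_pass_hat_k(successes, trials=8, k=8))  # all 8 attempts succeed
```

With k=1 this reduces to ordinary accuracy (0.75 on the hypothetical log); with k=8 only the tasks solved on every attempt count, and the same log scores 0.5.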

What This Means for Finance and Enterprise AI Governance

This isn’t just a research community problem. Enterprise teams deploying agents in regulated environments — and in finance, almost every AI deployment is a regulated environment — are operating with an accountability gap they have not priced in.

Consider what “model validation” looks like under SR 26-2 for an agentic AI system. You need to demonstrate that the model performs as expected across a representative distribution of inputs, that it is robust to input variation, and that you understand its failure modes. Those requirements, applied honestly to an agent, require something resembling a proper multi-seed evaluation across a range of task types. By the EvalEval Coalition’s accounting, that’s $50,000 to $300,000 in compute costs for a single model under a single task family — before you even get to the operational costs of the evaluation infrastructure itself.

Model risk teams are not budgeting for this. Most are running the equivalent of a smoke test — a handful of tasks, usually designed by the vendor, evaluated once, reported as a percentage. That is not validation; it is theater.

For fraud and compliance systems specifically, where the failure modes of AI agents include both false positives (blocking legitimate transactions) and false negatives (missing actual fraud), the cost of inadequate evaluation shows up as regulatory risk, model risk committee exposure, and — eventually — incident reports. The evaluation shortcuts you take on the way to production become the compliance findings you fight two years later.

The Leaderboard Problem Is Structural

One of the more uncomfortable findings in the EvalEval report is that cost-blind leaderboards are actively misleading. When a leaderboard reports only accuracy and omits cost, researchers can rationally pour tokens into a problem until the number ticks up — even when higher reasoning effort has no reliable accuracy benefit. HAL found that extra inference compute reduces accuracy in the majority of runs tested. More spending did not buy better results; it just cost more.

This creates a perverse dynamic for enterprise buyers. The leaderboard you consult to make vendor decisions rewards waste. The model at the top may have gotten there by spending 10× more per evaluation run than the one two positions below it — and may actually underperform in production where you’re not dumping tokens indiscriminately. The number you’re using for procurement decisions reflects neither cost nor reliability, just peak single-seed performance under unlimited budget conditions.

The CLEAR benchmark analysis found that “accuracy-optimal configurations cost 4.4 to 10.8× more than Pareto-efficient alternatives with comparable real-world performance.” The enterprise buyer paying for the accuracy-optimal configuration is paying roughly 4× to 11× more for a gain that is unlikely to show up in production.
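"Pareto-efficient" has a precise meaning that is easy to check in code: a configuration is on the front if no other configuration is at least as cheap and at least as accurate while being strictly better on one of the two. The sketch below is a generic illustration, not the CLEAR methodology. It reuses the two Online Mind2Web figures quoted earlier and adds one invented baseline, labeled as hypothetical.

```python
from typing import NamedTuple


class Config(NamedTuple):
    name: str
    cost_usd: float
    accuracy_pct: float


def dominates(a: Config, b: Config) -> bool:
    """True if a is no worse than b on both axes and strictly better on one."""
    no_worse = a.cost_usd <= b.cost_usd and a.accuracy_pct >= b.accuracy_pct
    strictly_better = a.cost_usd < b.cost_usd or a.accuracy_pct > b.accuracy_pct
    return no_worse and strictly_better


def pareto_front(configs: list[Config]) -> list[Config]:
    """Keep configurations that no other configuration dominates, cheapest first."""
    front = [c for c in configs if not any(dominates(o, c) for o in configs)]
    return sorted(front, key=lambda c: c.cost_usd)


configs = [
    Config("Browser-Use + Claude Sonnet 4", 1577.0, 40.0),  # quoted earlier
    Config("SeeAct + GPT-5 Medium", 171.0, 42.0),           # quoted earlier
    Config("hypothetical-cheap-baseline", 50.0, 30.0),      # invented for illustration
]

front = pareto_front(configs)
for c in front:
    print(f"{c.name}: ${c.cost_usd:,.0f} for {c.accuracy_pct:.0f}%")
```

Here the $1,577 configuration drops off the front because a cheaper configuration also scores higher. An accuracy-only ranking would still list it one place behind the leader.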

The SuperML Take

The EvalEval Coalition’s report is not primarily a complaint about expensive benchmarks. It’s a structural indictment of how AI accountability works — or doesn’t — at the frontier. If only labs with $40,000+ evaluation budgets can run credible agent assessments, then independent verification of frontier models is effectively impossible for academic institutions, government safety evaluators, and enterprise risk teams. The evaluation apparatus that should be a check on AI deployment becomes the exclusive property of the organizations being checked.

For enterprise teams, this resolves to a practical question: where in your AI deployment lifecycle does the evaluation budget actually live? The honest answer at most organizations is “it doesn’t.” Evaluation is treated as a step in a checklist, not as an infrastructure investment with its own budget line. A team that would never ship backend infrastructure without load testing, chaos engineering, and SLA validation will happily deploy an AI agent with a vendor-provided accuracy metric from a single benchmark run.

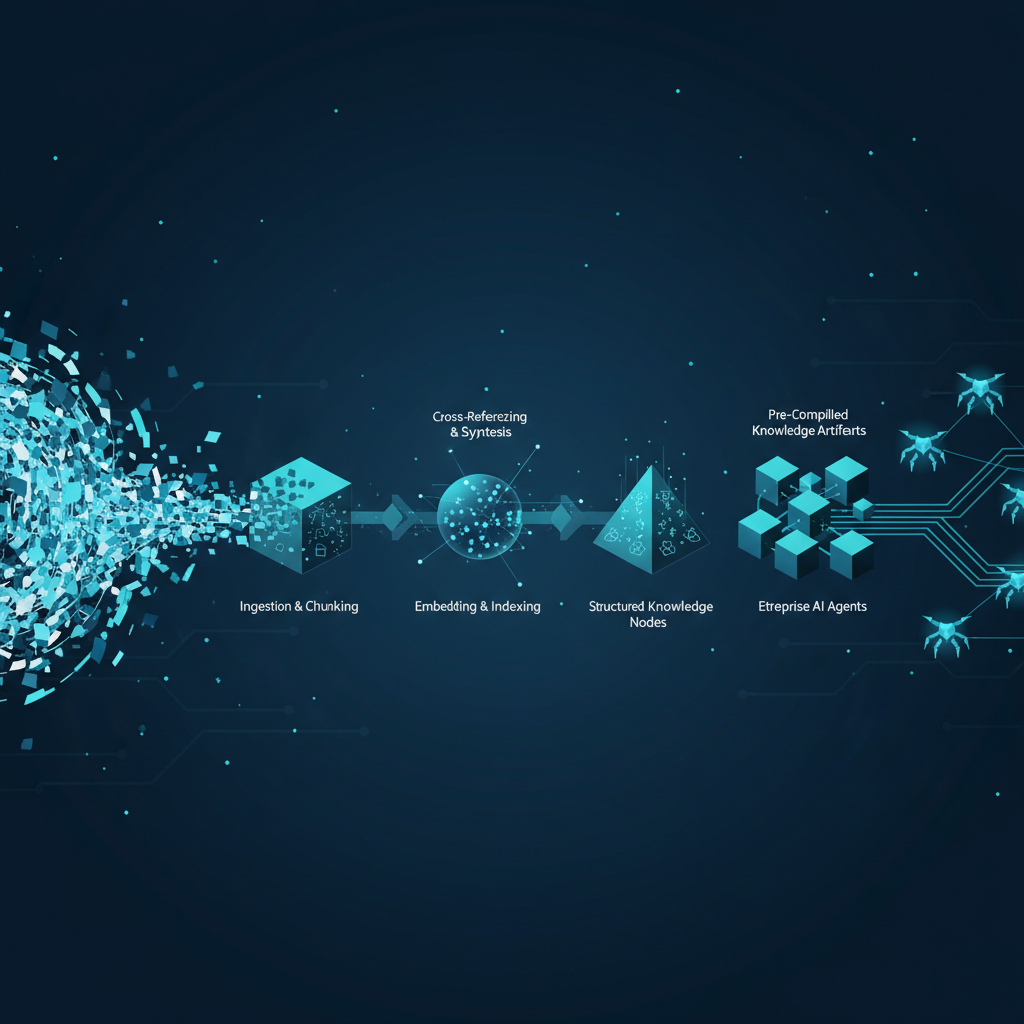

The production-ready version of what the EvalEval report describes is an organization that has separated three distinct things that currently get conflated: capability evals (does the model do the task at all?), reliability evals (does it do the task consistently?), and production evals (does it do the task in our specific environment, with our specific data, under our operational load?). Each of these costs real money and requires real infrastructure. None of them is free because the vendor ran MMLU once.

Over the next six to twelve months, the gap between headline and reality will likely play out like this: most enterprise agent deployments that look production-ready today will surface reliability problems that were always there but were never measured. The organizations that budget for proper evaluation infrastructure (multi-seed consistency testing, task-distribution holdouts, production-replay eval harnesses) will find and fix these problems before they become incidents. The ones that don’t will find them the other way.

Architecture Impact

What changes in system design? Evaluation must be promoted from a deployment checklist item to a first-class infrastructure component with dedicated compute budget, version-controlled eval harnesses, and SLA tracking. The right analogy is load testing: you don’t run it once before launch and walk away. You run it on every meaningful code change, against representative production traffic distributions, with variance tracked over time. Agent eval infrastructure needs the same treatment — eval harnesses, task libraries, seed management, and per-run cost tracking all need to be owned by the platform team, not improvised at deployment time.

What new failure mode appears? Single-seed eval confidence collapse: a team validates an agent at 65% accuracy on their benchmark, ships it, and discovers it passes only 30% of tasks when measured across 8 runs with actual production input distributions. The failure is not in the agent — it was always unreliable. The failure is in the evaluation protocol that treated one run as ground truth. In regulated environments, this failure mode shows up as a model validation finding: you asserted performance you cannot demonstrate under repeat testing, which is a material deficiency in model documentation under SR 26-2 and EU AI Act Article 13.
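A toy model shows how a 65% single-run score and a roughly 30% eight-run score can describe the same agent. Assume, purely for illustration, that attempts are independent and that each task has its own fixed success probability. Expected single-run accuracy is then the mean of those probabilities, while the expected rate of passing all k attempts is the mean of their k-th powers. The task mix below is invented to match the example above and is not measured data.

```python
def expected_single_run_accuracy(success_probs: list[float]) -> float:
    """Mean per-task success probability: what one run reports on average."""
    return sum(success_probs) / len(success_probs)


def expected_all_k_pass_rate(success_probs: list[float], k: int) -> float:
    """Mean probability of passing all k attempts, ASSUMING independent attempts."""
    return sum(p ** k for p in success_probs) / len(success_probs)


# Hypothetical task mix: 3 tasks the agent always solves, 7 it solves half the time.
task_probs = [1.0] * 3 + [0.5] * 7

single_run = expected_single_run_accuracy(task_probs)
eight_runs = expected_all_k_pass_rate(task_probs, k=8)

print(f"expected single-run accuracy: {single_run:.0%}")
print(f"expected pass rate over 8 runs: {eight_runs:.1%}")
```

The coin-flip tasks carry more than half of the single-run score and almost none of the eight-run score, which is why one run cannot tell a dependable agent from a lucky one.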

What enterprise teams should evaluate:

- Model risk and validation teams: Map current agent eval protocols against pass^k requirements. If you are not running multi-seed consistency checks, your validation does not satisfy the intent of SR 26-2 even if it satisfies the checklist.

- AI platform and MLOps teams: Audit the compute budget allocated to evaluation vs. inference. If eval compute is less than 5% of inference compute for an agentic workload, you are almost certainly under-evaluating. Build eval harnesses that can replay production traffic samples against new model versions before promotion.

- Procurement and vendor management teams: Require benchmark transparency from AI vendors — specifically, the seed count, scaffold configuration, and cost per run behind every accuracy number. A vendor who cannot tell you their eval cost is not running a credible eval.

- Finance and compliance teams: Quantify the regulatory exposure created by inadequate agent validation. The cost of a proper eval harness is a small fraction of the cost of a model risk finding or a regulatory enforcement action.
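Two of the checks in the list above are mechanical enough to automate. The sketch below encodes the 5% eval-to-inference rule of thumb and the three disclosures procurement should ask for (seed count, scaffold configuration, cost per run). Every class, field, and function name is hypothetical, and the dollar amounts in the example are made up.

```python
from dataclasses import dataclass
from typing import Optional

MIN_EVAL_SHARE = 0.05  # the 5% rule of thumb from the list above


@dataclass
class VendorBenchmarkClaim:
    """An accuracy number plus the context needed to interpret it."""
    accuracy_pct: float
    seed_count: Optional[int] = None
    scaffold: Optional[str] = None
    cost_per_run_usd: Optional[float] = None

    def missing_disclosures(self) -> list[str]:
        """Names of the required disclosures the vendor has not supplied."""
        required = {
            "seed_count": self.seed_count,
            "scaffold": self.scaffold,
            "cost_per_run_usd": self.cost_per_run_usd,
        }
        return [name for name, value in required.items() if value is None]


def is_under_evaluating(eval_usd: float, inference_usd: float) -> bool:
    """True if eval spend is below MIN_EVAL_SHARE of inference spend."""
    return eval_usd / inference_usd < MIN_EVAL_SHARE


claim = VendorBenchmarkClaim(accuracy_pct=65.0, seed_count=1)
print(claim.missing_disclosures())
print(is_under_evaluating(eval_usd=2_000, inference_usd=100_000))
```

A claim that comes back with missing fields is the cue to go back to the vendor before the number reaches a procurement deck.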

Cost / latency / governance / reliability implications: Proper agent evaluation infrastructure — multi-seed harnesses, production-replay eval pipelines, task holdout management — will add $50,000 to $300,000 in annual compute costs for teams running significant agentic workloads, depending on model tier and task complexity. This is not optional overhead; it is the cost of actually knowing whether your agent works. On the governance side, organizations cannot demonstrate SR 26-2 compliance or EU AI Act Article 13 transparency for agentic systems without documented, repeatable evaluation protocols — which means the governance budget and the eval infrastructure budget are the same line item.

What to Watch

HAL’s reliability pause: The Holistic Agent Leaderboard has paused new model evaluations to focus on reliability methodology. When they resume, the leaderboard will show pass^k metrics alongside accuracy — which will effectively re-rank every model in use and reveal how much the current rankings are single-seed noise.

The EvalEval Coalition’s “Every Eval Ever” project: This initiative is building shared data infrastructure where eval outputs — not just accuracy numbers, but full trajectory logs and grading traces — can be deposited and reused. If it gets traction, it could reduce duplicate eval spend by 2× or more across the field. Watch whether enterprise vendors start contributing their eval data, or whether this remains an academic exercise.

Regulatory guidance on agent validation: The FCA is expected to provide explicit guidance on audit trails and explainability for AI systems by end of 2026. When that guidance arrives, it will almost certainly require documented, repeatable evaluation protocols that most enterprise teams currently do not have. Model risk teams should treat this as a 12-month runway to build evaluation infrastructure before it becomes a compliance requirement.

Pareto-front leaderboards: The field is slowly moving toward reporting accuracy-vs-cost Pareto frontiers rather than raw accuracy rankings. When a major leaderboard adopts this format, it will change vendor selection conversations — the model that scores 65% for $0.10 per task may look very different from the one that scores 67% for $1.50 per task when you’re running 10 million tasks per month.
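At volume, the comparison in that last example comes down to the price of each additional successful task. The sketch below uses only the figures from the paragraph above (65% at $0.10, 67% at $1.50, 10 million tasks per month). The function names are illustrative, and it assumes benchmark accuracy carries over to production, which the rest of this piece argues you should not take for granted.

```python
TASKS_PER_MONTH = 10_000_000


def monthly_cost(usd_per_task: float, tasks: int = TASKS_PER_MONTH) -> float:
    """Total monthly spend at a flat per-task price."""
    return usd_per_task * tasks


def monthly_successes(accuracy: float, tasks: int = TASKS_PER_MONTH) -> float:
    """Expected successful tasks, ASSUMING benchmark accuracy holds in production."""
    return accuracy * tasks


def marginal_cost_per_extra_success(
    cheap_acc: float, cheap_price: float, pricey_acc: float, pricey_price: float
) -> float:
    """Extra dollars paid per additional successful task when upgrading."""
    extra_cost = monthly_cost(pricey_price) - monthly_cost(cheap_price)
    extra_wins = monthly_successes(pricey_acc) - monthly_successes(cheap_acc)
    return extra_cost / extra_wins


cheap_bill = monthly_cost(0.10)
pricey_bill = monthly_cost(1.50)
marginal = marginal_cost_per_extra_success(0.65, 0.10, 0.67, 1.50)

print(f"monthly bill: ${cheap_bill:,.0f} vs ${pricey_bill:,.0f}")
print(f"cost per additional success: ${marginal:,.2f}")
```

On those figures the monthly bill goes from $1 million to $15 million, and each additional successful task costs about $70. Whether that is worth paying depends on what a successful task is worth to you.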

Sources

- AI evals are becoming the new compute bottleneck — EvalEval Coalition / HuggingFace

- Holistic Agent Leaderboard (HAL) — Princeton

- AI Agents That Matter — Kapoor et al., arXiv:2407.01502

- Towards a Science of AI Agent Reliability — Rabanser, Kapoor et al., arXiv:2602.16666

- PaperBench: Evaluating AI’s Ability to Replicate AI Research — Starace et al., arXiv:2504.01848

- Efficient Benchmarking of Language Models — Perlitz et al., arXiv:2308.11696

- tinyBenchmarks: evaluating LLMs with fewer examples — Polo et al., arXiv:2402.14992

- The Well: a Large-Scale Collection of Diverse Physics Simulations for ML — Ohana et al., arXiv:2412.00568

- LLM Hallucination Statistics 2026 — SQ Magazine

- AI Benchmarks 2026: Top Evaluations and Their Limits — Kili Technology
