The Seven-Model Problem: Enterprise AI Inference Has Left the Lab — and the Control Plane Hasn't Caught Up

F5's 2026 State of Application Strategy Report drops a number that should alarm every platform architect: the average enterprise is now running seven AI models simultaneously in production. The traffic cop that routes between them, governs them, and keeps them from burning your budget? Most enterprises don't have one.

Table of Contents

There’s a number buried in F5’s 2026 State of Application Strategy Report that deserves more attention than it’s gotten. Not the 78% of enterprises now running AI inference in-house — that headline is everywhere. Not even the finding that 77% of organizations have made inference, not model training, their primary AI activity.

The number that should concern every platform architect and AI infrastructure lead is this one: the average enterprise is now operating or actively evaluating seven AI models simultaneously. Seven. And only 28% of those organizations report having a single point of control to manage them all.

Do the math: 72% of enterprises are running a distributed multi-model inference operation with no unified control plane. That’s not a strategic position. That’s a production incident waiting to happen at 2 AM on a Wednesday when the routing table for your customer-facing feature hits a cold model, your fallback invokes the most expensive frontier model available, and your cost anomaly alert doesn’t fire until the damage is done.

This is the seven-model problem. And it’s the AI infrastructure challenge that 2026 has quietly handed every enterprise engineering team that moved faster than their platform team.

From Lab Toy to Operational Tier

The F5 report — which surveyed over 1,100 IT decision-makers globally, published May 5, 2026 — documents a transition that has been building for 18 months but now has hard numbers behind it. Enterprise AI has moved from “we’re experimenting with LLMs” to “inference is core infrastructure” without most organizations redesigning their stack to match the new reality.

The specifics are telling. 78% of organizations now operate their own inference services rather than routing everything through public AI APIs. That’s not surprising in isolation — cost, latency, data governance, and compliance pressure have been pushing enterprises toward self-hosted and private inference for two years. What’s significant is the combination: 78% running in-house inference AND an average of seven models AND 52% actively chaining or orchestrating multiple models in a single workflow. A single user request may now hop between models hosted in entirely different environments — public cloud, private data center, colocation, or edge — before it produces an output.

That’s not a “we deployed an LLM” problem. That’s a distributed systems problem with an AI flavor. And the ops teams now responsible for these stacks are discovering that the tooling they have for single-model, single-cloud deployment wasn’t designed for this.

What “Seven Models” Actually Means in Production

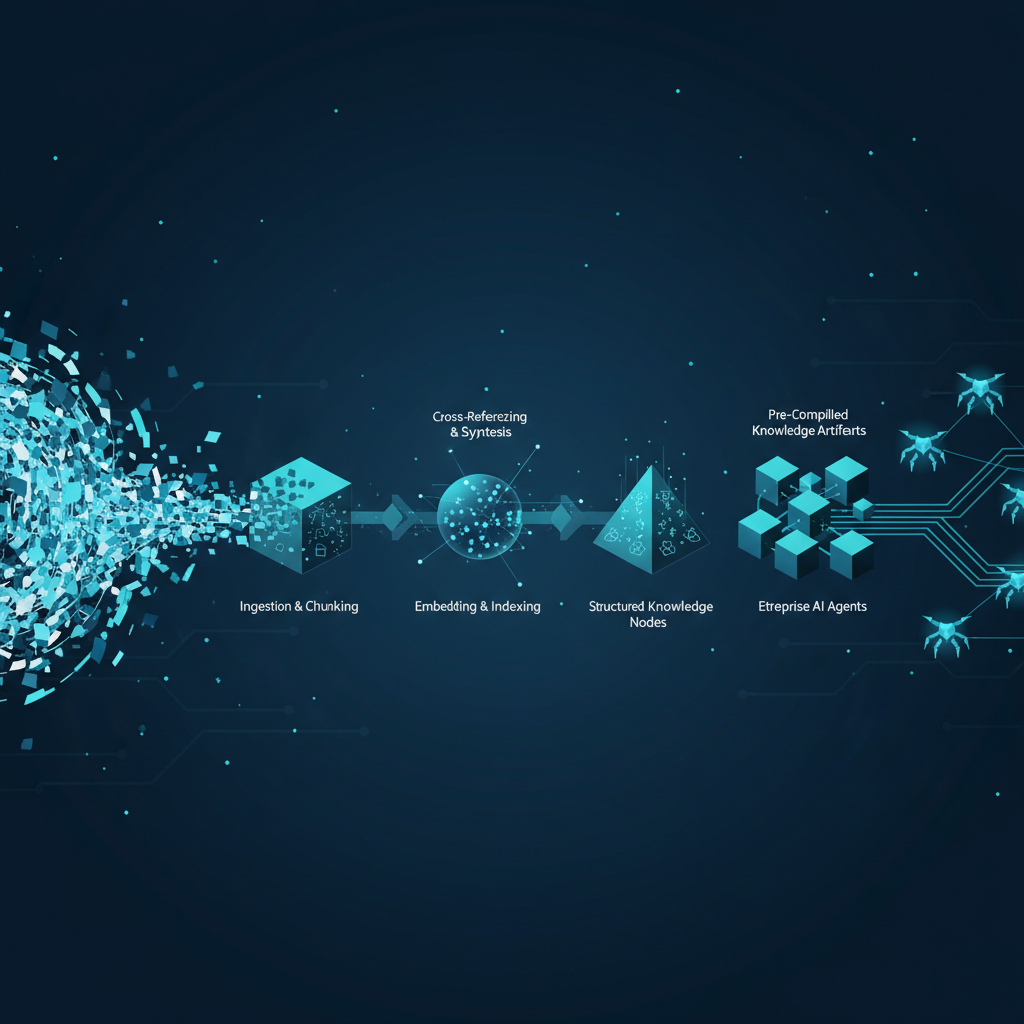

Seven models doesn’t mean seven copies of the same model across environments. In most enterprise deployments, this reflects a differentiated model portfolio that has grown organically — a frontier reasoning model for complex workflows, a mid-tier model for content generation, a smaller specialized model for classification or routing decisions, a fine-tuned domain model for compliance or legal, an embedding model for RAG, a vision model for document processing, and a legacy model that’s technically “in evaluation” but still receiving production traffic because the migration never quite finished.

Each of those models has different latency profiles, pricing structures, rate limits, failure modes, and output distributions. Chaining them — as 52% of enterprises now do — introduces inter-model dependencies that create cascading failure scenarios invisible to any single model’s monitoring. If your routing agent decides to invoke a high-latency reasoning model mid-chain because the classification model returned low confidence, your overall response time budget just blew up, and you probably won’t see it until users start complaining.

The routing logic that governs which model gets which request is, in most enterprises today, embedded directly in application code, scattered across multiple teams, and completely opaque to the platform team that owns inference infrastructure. That’s the exact configuration that produces both runaway costs and undetectable quality degradation.

The Control Plane Gap

F5’s 72% figure — the share of enterprises without a unified AI management point — captures something real about where the industry is. The tooling for multi-model inference control planes is still maturing. LLM gateways like LiteLLM, Portkey, and Kong AI Gateway exist, but enterprise adoption at scale is still early. The patterns for centralized AI routing — cost-aware model selection, semantic caching, circuit breaking for degraded models, unified audit logging across providers — are well-understood in theory and inconsistently implemented in practice.

What a production-grade inference control plane actually needs to do is non-trivial. It has to route traffic based on a policy that accounts for cost per token, latency SLOs, model availability, compliance scope (some models can’t see certain data), and output quality signals. It has to log every inference call in a way that’s auditable for regulatory purposes. It has to enforce rate limits and fallback chains when a model degrades or becomes unavailable. And it has to do all of this without adding enough latency to make it the bottleneck in the very workflows it’s supposed to optimize.

Most enterprises in 2026 have none of this, a fragment of this, or a homegrown version that two engineers understand and nobody has tested under load.

Why This Compounds in Agentic Workflows

The multi-model routing problem becomes significantly harder when you add agentic orchestration — and that’s exactly where enterprise AI is heading. When a single agent turn may invoke a planner model, a tool-selection model, a domain-specific execution model, and a critic model for output validation, your inference infrastructure is now the operational substrate for autonomous decision-making.

The failure modes in this scenario aren’t just “the model returned a bad answer.” They’re “the routing decision at step two sent a request to an uncensored model that doesn’t enforce your data handling policy” and “the fallback chain invoked a frontier model 400 times in 90 seconds because the cost gate wasn’t configured for agentic retry loops” and “the audit trail for a compliance-sensitive action is spread across four different model provider logs that nobody correlated.”

These are production reliability problems, compliance problems, and cost governance problems all at once. They don’t show up in LLM evaluations. They show up in post-incident reviews.

The SuperML Take

Here’s what the F5 report is actually documenting: the enterprise AI stack has bifurcated into a fast-moving application layer and a critically under-invested infrastructure layer, and the gap between them is widening. The application teams building agentic workflows and multi-model chains are shipping features faster than the platform teams can build the control surfaces to govern them. That’s not a criticism of either team — it’s a structural consequence of how enterprise AI adoption has happened.

The press-release version of this story is “enterprises are going all-in on AI inference.” The production version is “enterprises are running distributed inference operations with the operational maturity of a late-stage proof of concept.”

What the 28% figure tells you is that the enterprises with unified control planes have a structural advantage that compounds over time. Not because their AI models are better, but because they can see what’s happening, respond to cost spikes, enforce policies, and iterate on routing logic without touching application code. That’s the difference between inference as infrastructure and inference as chaos.

Senior platform engineers and AI architects should treat the multi-model control plane as a first-class infrastructure component — not as something that gets bolted on after the models are running. The organizations that design their inference tier with routing, observability, cost governance, and policy enforcement as core requirements will spend the next 12 to 18 months iterating on performance. The ones that don’t will spend that time debugging incidents they can’t reproduce.

The honest assessment of where the tooling stands: the open-source options are functional but operationally immature for enterprises running seven-model portfolios with strict compliance requirements. The commercial options (TrueFoundry, Portkey Enterprise, F5 AI Gateway) are solving the right problems but require architectural commitment and budget that many teams haven’t gotten approval for yet. In the meantime, the practical path for most enterprises is to consolidate routing logic into a thin proxy layer, centralize logging to a single observability backend, and treat cost governance as a routing policy problem rather than a finance review problem.

Architecture Impact

What changes in system design? Multi-model inference forces a fundamental shift from “deploy a model” to “design an inference tier.” Every architectural decision about where models live, how requests are routed between them, and how their outputs are composed now needs to be made explicitly rather than embedded in individual services. The inference tier becomes its own layer in the stack with its own reliability, latency, and cost requirements — analogous to how the data tier matured from “connect to a database” to a managed, observable, policy-enforced infrastructure component.

What new failure mode appears? The dominant new failure mode is silent cost explosion through uncontrolled model selection in agentic retry loops. When an orchestrator retries a failed tool call by escalating to a more capable (and more expensive) model, and no circuit breaker enforces a cost ceiling per workflow, a single runaway agent session can accumulate thousands of high-cost inference calls before any alerting fires. The second failure mode is compliance scope leak — requests that contain regulated data being routed to models outside the approved provider list because the routing logic doesn’t enforce data classification at the inference layer.

What enterprise teams should evaluate:

- Platform / infrastructure teams: Whether your current inference setup has a single point where routing policies can be defined, updated, and enforced without application code changes — if not, building or procuring that control plane is the highest-leverage infrastructure investment right now.

- ML engineering teams: Whether your model portfolio decisions are driven by performance benchmarks alone or whether cost-per-token at your actual request volume, latency under load, and fallback behavior under degradation are also part of the evaluation criteria.

- Security and compliance teams: Whether your data handling policies are enforced at the inference routing layer or whether they depend on application-level controls that may not hold as more teams build AI features autonomously.

Cost / latency / governance / reliability implications: Enterprises operating seven models without centralized routing are typically experiencing 20–40% cost overrun relative to what an optimized routing policy would achieve, according to inference cost analysis from Q1 2026. The primary driver is frontier model overuse — requests that a mid-tier model could handle at $0.002/1K tokens are being routed to frontier models at $0.015–0.030/1K tokens because the routing decision defaults to “best available.” At 50M inference requests per month — a moderate enterprise volume — that gap compounds to six-figure monthly waste. On the latency side, multi-model chains without circuit breakers frequently experience P99 latency spikes 10–20x above median because a single slow model in the chain propagates the delay to every downstream step.

What to Watch

The inference control plane tooling market is consolidating fast. Kong, F5, and TrueFoundry are all making aggressive moves to own this layer in the enterprise stack. The open-source ecosystem — LiteLLM, OpenLLM, and the emerging set of OpenTelemetry-native inference proxies — is maturing but still requires significant operational investment to run at enterprise scale. Expect the next 6–9 months to produce clearer winners in the AI gateway/inference control plane category, with compliance-focused enterprises (financial services, healthcare, regulated industrials) driving procurement decisions based on audit trail depth and data handling certifications rather than pure performance benchmarks.

The deeper trend to track is whether the inference control plane absorbs agentic orchestration responsibilities or remains a pure infrastructure component. If routing decisions start incorporating agent state, workflow context, and multi-turn history, the control plane becomes indistinguishable from the orchestration layer — and that’s a significant architectural and vendor dependency question that enterprise teams should resolve intentionally rather than by accident.

Sources

- F5 2026 State of Application Strategy Report — AI Has Left the Lab (BusinessWire)

- Multi-model AI is creating a routing headache for enterprises (Help Net Security)

- F5 Report 2026: AI inferencing has arrived, complicating an already complex IT landscape (F5 Blog)

- AI inferencing will define 2026, and the market’s wide open (SDxCentral)

- Top 5 Enterprise AI Gateways in 2026 (DEV Community)

- Inference Optimization: The Defining LLM Infrastructure Shift for 2026 (Dev|Journal)

- F5 report shows enterprises bringing AI inference in-house (RCR Wireless)

Enterprise AI Architecture

Want more enterprise AI architecture breakdowns?

Subscribe to SuperML.