The Cognitive Architecture Revolution: EMBER, GPT-5.4, and Why AI's Next Leap Isn't About Scale

From hybrid spiking-neural-network agents to GPT-5.4's unified reasoning-plus-coding brain, the cutting edge of AI has decisively shifted from 'bigger' to 'smarter.'

For two years, the dominant story in AI was deceptively simple: scale up the transformer, collect more data, pour in more compute, and the model gets smarter. That story isn’t wrong — but it’s increasingly incomplete. In April 2026, the most exciting research isn’t about the biggest models. It’s about the most cognitively sophisticated ones: hybrid architectures that marry neural networks with symbolic reasoning, models that remember and learn continuously, and agents that simulate the world before acting in it. This week delivered a cluster of developments that together signal a genuine architectural inflection point.

GPT-5.4: The First Unified Brain

OpenAI’s March 2026 release of GPT-5.4 might be the cleanest sign of where flagship models are heading. For most of the GPT-5 era, OpenAI maintained a split: general reasoning models on one track, specialist coding models (the Codex lineage) on another. GPT-5.4 ends that separation. It is the first mainline reasoning model to directly incorporate the frontier coding capabilities of GPT-5.3-Codex, ships natively with computer-use capabilities, supports a one-million-token context window, and introduces a tool-search mechanism that reduces token costs by 47% in tool-heavy agentic workflows — without measurable accuracy loss.
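To make the tool-search idea concrete, here is a minimal sketch of the general pattern: rather than placing every tool definition in the prompt, the agent first retrieves only the definitions relevant to the task. This is not OpenAI's implementation, whose internals are not described here. The registry, the tool names, the keyword-overlap ranking, and the word-count token proxy are all assumptions made for illustration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Tool:
    name: str
    description: str  # stands in for the full schema text that would sit in the prompt


# Hypothetical registry; names and descriptions are illustrative only.
REGISTRY = [
    Tool("read_file", "read the contents of a file from disk given a path"),
    Tool("run_tests", "run the unit tests of a project and report failures"),
    Tool("send_email", "send an email message to a recipient with a subject"),
    Tool("query_db", "run a sql query against the analytics database"),
]


def approx_tokens(text: str) -> int:
    """Crude token proxy: whitespace-separated word count."""
    return len(text.split())


def search_tools(query: str, registry: list[Tool], k: int = 2) -> list[Tool]:
    """Rank tools by naive keyword overlap with the query; keep the top k nonzero matches."""
    query_words = set(query.lower().split())
    scored = [
        (len(query_words & set(tool.description.lower().split())), tool)
        for tool in registry
    ]
    scored = [(score, tool) for score, tool in scored if score > 0]
    scored.sort(key=lambda pair: pair[0], reverse=True)
    return [tool for _, tool in scored[:k]]


def prompt_cost(tools: list[Tool]) -> int:
    """Approximate prompt size contributed by the given tool definitions."""
    return sum(approx_tokens(tool.description) for tool in tools)


selected = search_tools("run the unit tests and read the failing file", REGISTRY)
full_cost = prompt_cost(REGISTRY)       # every definition loaded up front
searched_cost = prompt_cost(selected)   # only the retrieved definitions
print([tool.name for tool in selected], full_cost, searched_cost)
```

A production system would presumably rank with embeddings or a learned retriever rather than word overlap, and the savings in this toy say nothing about the 47% figure quoted above. The point is only the shape of the trade: a small retrieval step in exchange for a much shorter prompt.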

The benchmarks reflect the merger: 57.7% on SWE-bench Pro for software engineering tasks, 75% on OSWorld (computer use), and 83% on GDPval for knowledge-work tasks. It ranks in the top five among more than 100 models on aggregate coding benchmarks.

What matters here isn’t the raw numbers. It’s the architectural philosophy. Keeping reasoning and code generation separate made sense when each required a fundamentally different training regime. That assumption is breaking down. As world-model and agentic workloads increasingly require a model to reason about code, execute it, interact with a GUI, and maintain long-horizon context all at once, unified systems are likely to outperform specialized ones that have to hand off between components. GPT-5.4 is the first production example of that bet paying off.

Meanwhile, the separately deployed GPT-5.3-Codex-Spark — a smaller model tuned for real-time coding — delivers over 1,000 tokens per second for latency-sensitive tasks. This bifurcation (a powerful unified flagship plus a fast coding-specialized variant) suggests the industry is converging on a hub-and-spoke model architecture: one large general-purpose cognitive engine, surrounded by specialist accelerators for bottleneck tasks.

EMBER: When a Spiking Neural Network Becomes the Agent’s Memory

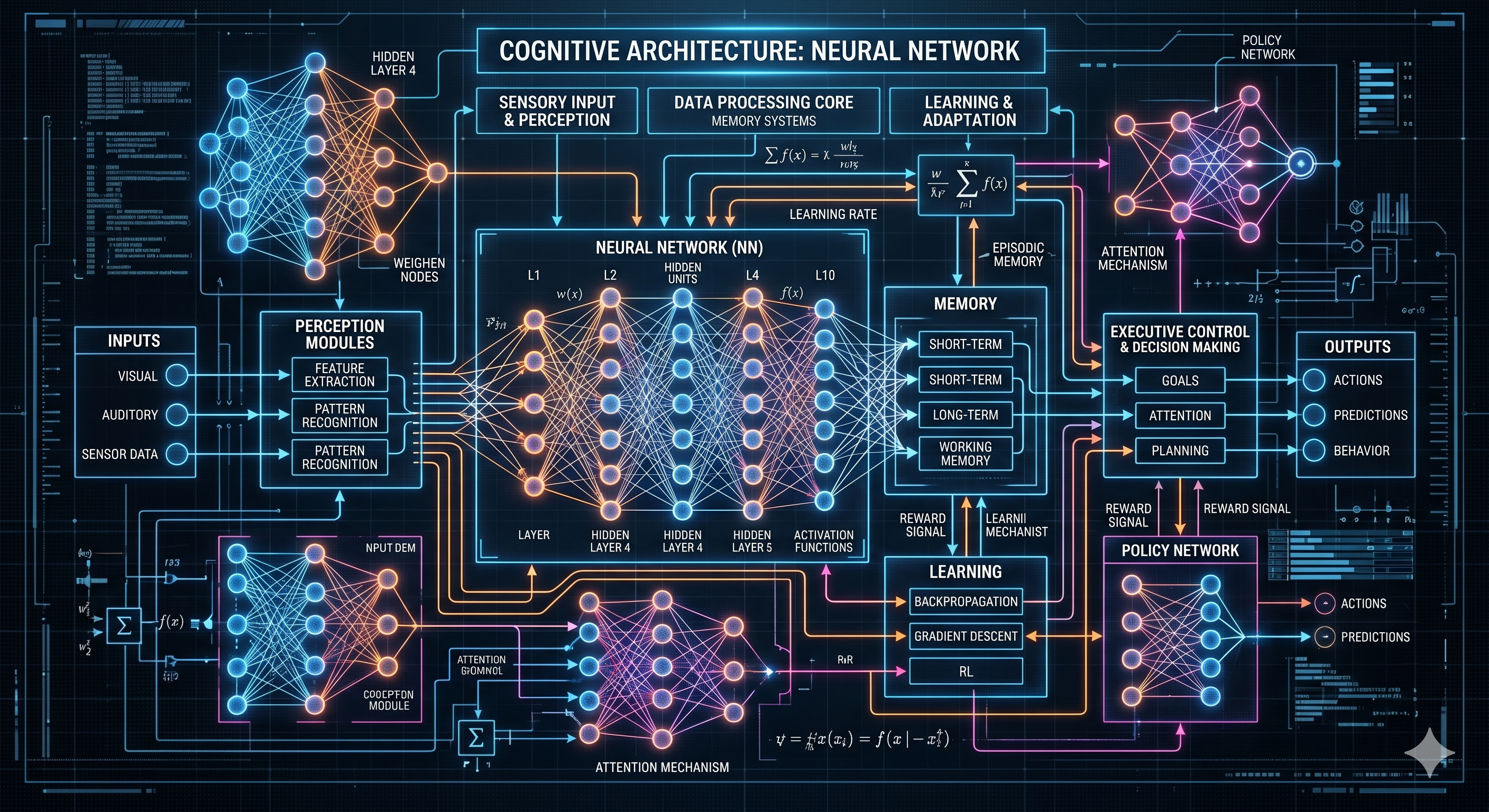

On the research side, the most conceptually bold paper this week comes from arXiv: EMBER, short for Experience-Modulated Biologically-inspired Emergent Reasoning. The paper proposes a hybrid cognitive architecture that wraps a large language model inside a persistent, biologically grounded associative memory substrate: specifically, a 220,000-neuron spiking neural network (SNN).

Standard transformer LLMs have no persistent memory between calls. Their “context window” is a fixed-size working memory that disappears when the session ends. EMBER’s SNN acts as a biologically inspired long-term memory layer: it uses spike-timing-dependent plasticity (STDP) to strengthen or weaken synaptic connections based on experience, maintains a four-layer hierarchical organization, keeps excitatory and inhibitory activity in balance (E/I balance), and applies reward-modulated learning to update what it retains.
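For readers unfamiliar with STDP, the following is a minimal sketch of the textbook pair-based rule with a simple scalar reward gate. It is not EMBER's learning rule, which may differ substantially (reward-modulated variants often use eligibility traces, for example). The amplitudes, the time constant, and the function names are illustrative assumptions.

```python
import math

A_PLUS = 0.01    # potentiation amplitude (illustrative value)
A_MINUS = 0.012  # depression amplitude (illustrative value)
TAU_MS = 20.0    # time constant of the STDP window, in milliseconds (illustrative)


def stdp_delta(t_pre_ms: float, t_post_ms: float) -> float:
    """Pair-based STDP: potentiate if pre fires before post, depress if after."""
    dt = t_post_ms - t_pre_ms
    if dt > 0:
        return A_PLUS * math.exp(-dt / TAU_MS)
    if dt < 0:
        return -A_MINUS * math.exp(dt / TAU_MS)
    return 0.0


def reward_modulated_update(weight, t_pre_ms, t_post_ms, reward, w_min=0.0, w_max=1.0):
    """Scale the STDP change by a scalar reward, then clip the weight to [w_min, w_max]."""
    new_weight = weight + reward * stdp_delta(t_pre_ms, t_post_ms)
    return min(w_max, max(w_min, new_weight))


w = 0.5
# Pre-synaptic spike 5 ms before the post-synaptic spike: the synapse strengthens.
w_causal = reward_modulated_update(w, t_pre_ms=10.0, t_post_ms=15.0, reward=1.0)
# Reverse order: the synapse weakens.
w_anticausal = reward_modulated_update(w, t_pre_ms=15.0, t_post_ms=10.0, reward=1.0)
# Zero reward: the spike pairing leaves the weight unchanged.
w_no_reward = reward_modulated_update(w, t_pre_ms=10.0, t_post_ms=15.0, reward=0.0)
print(w_causal, w_anticausal, w_no_reward)
```

The reward factor is what turns a purely correlational rule into one that retains what was useful: the same spike pairing strengthens a connection after a good outcome and leaves it untouched after a neutral one.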

The result is an agent that doesn’t just process the current prompt — it has a continuously updating world model built from accumulated experiences, encoded in spike patterns rather than token sequences. The LLM handles reasoning and language generation; the SNN handles temporal memory, associative recall, and the kind of rapid, low-energy signal processing that biological brains use for pattern recognition.

Why does this matter? Current LLM agents simulate memory by appending summaries to their context window — an expensive, lossy approximation. EMBER-style architectures point toward genuine episodic memory: the agent encodes the fact that it tried approach X last week, it failed, and approach Y worked better. That kind of experiential grounding is what separates a competent assistant from a consistently reliable one.

Practically, spiking neural networks can also consume far less energy than the dense matrix multiplications behind transformer inference, because neurons compute only when they spike. Intel’s Loihi 2 chip targets spiking workloads directly, and IBM’s brain-inspired NorthPole architecture, although not itself a spiking design, pursues similar efficiency gains by placing memory next to compute. Both point toward inference at a fraction of the energy cost of GPU-based transformer inference. EMBER is early-stage research, but it connects two trends that had previously been developing in isolation: cognitive agent architectures and neuromorphic hardware efficiency.

Neurosymbolic Reasoning Gets an Enterprise Architecture

A second arXiv paper this week takes a different approach to the same underlying problem: how do you make AI agents that reason reliably within constrained, regulated domains? The paper introduces FAOS (Foundation AgenticOS), a neurosymbolic architecture that grounds LLM reasoning inside explicit ontology constraints.

The core insight is that enterprise AI failures often aren’t capability failures — they’re grounding failures. An agent might know how to reason about financial compliance in general but doesn’t know the specific rules, roles, and relationships that govern your organization’s particular regulatory context. FAOS addresses this by coupling the neural reasoning layer (the LLM) to a symbolic ontology layer that encodes domain structure: the permitted actions for each role, the regulatory constraints for each document type, the relationship between entities in the business’s knowledge graph.
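One way to picture the coupling between the neural layer and the symbolic layer is a guard that checks each action the LLM proposes against an explicit ontology before it runs. The sketch below is a hypothetical illustration of that pattern, not the FAOS design. The roles, document types, permitted actions, and the `check` function are all invented for the example.

```python
from dataclasses import dataclass

# Hypothetical ontology: which actions each role may take on each document type.
PERMITTED = {
    ("analyst", "expense_report"): {"read", "flag"},
    ("controller", "expense_report"): {"read", "flag", "approve"},
    ("controller", "regulatory_filing"): {"read"},
}


@dataclass(frozen=True)
class ProposedAction:
    """An action the neural layer wants to take, expressed in ontology terms."""
    role: str
    document_type: str
    action: str


def check(proposal: ProposedAction) -> tuple[bool, str]:
    """Return (allowed, explanation) so every decision leaves an auditable reason."""
    allowed = PERMITTED.get((proposal.role, proposal.document_type), set())
    subject = f"{proposal.role} on {proposal.document_type}"
    if proposal.action in allowed:
        return True, f"{subject}: '{proposal.action}' is permitted"
    return False, f"{subject}: '{proposal.action}' is not in {sorted(allowed)}"


# An LLM acting as an analyst proposes approving a report: the symbolic layer rejects it.
ok, reason = check(ProposedAction("analyst", "expense_report", "approve"))
print(ok, reason)

# The same action proposed under the controller role passes.
ok_controller, _ = check(ProposedAction("controller", "expense_report", "approve"))
print(ok_controller)
```

Unknown role and document combinations fall through to an empty permission set, so the guard denies by default. Returning the explanation alongside the verdict is what makes the decision reviewable after the fact.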

In experiments across regulated industries, ontology-coupled agents significantly outperformed ungrounded agents on metric accuracy, regulatory compliance, and role consistency. The gains were largest precisely where ungrounded agents tended to hallucinate or drift: edge cases, ambiguous jurisdiction questions, and multi-step compliance chains.

This aligns with a striking prediction from Gartner: over 40% of agentic AI projects will be canceled by the end of 2027, driven by escalating costs, unclear business value, and inadequate risk controls rather than by any shortfall in raw model capability. FAOS-style neurosymbolic grounding is one credible answer to the risk-control part of that problem. Enterprises in finance, healthcare, and legal sectors need more than AI agents that produce correct outputs on average; they need agents whose reasoning can be audited, explained, and constrained to defined business boundaries.

EY’s recent deployment of enterprise-scale agentic AI in audit (the EY Canvas platform) represents this trend moving from research to production. The system is designed to support end-to-end audit activities globally, combining neural pattern recognition with symbolic audit rules to produce decisions that auditors can review and defend.

The World Model Moment

Underlying all of these developments is a shared architectural bet: that the next generation of AI systems needs world models — internal representations of how the environment works — rather than just pattern-matching over training data.

A world model lets an agent simulate likely futures before committing to an action. It lets a robot understand that pushing a box off a shelf will cause it to fall, without having to have seen exactly that sequence during training. It lets a coding agent reason about what a refactored function will do to the rest of the codebase, not just whether it compiles. And it lets a financial agent understand the second-order consequences of an investment decision, not just its immediate expected return.
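The box-on-a-shelf case can be turned into a toy planner that simulates candidate action sequences in an internal model before acting. This is a deliberately tiny sketch under stated assumptions: the world model here is hand-written rather than learned, the shelf has three positions, and the scoring function and names are invented for illustration.

```python
from itertools import product

SHELF_WIDTH = 3  # valid box positions are 0, 1, 2 (toy assumption)
ACTIONS = {"push_left": -1, "wait": 0, "push_right": 1}


def predict(position: int, action: str) -> tuple[int, bool]:
    """Toy world model: return (next_position, fallen) without touching the real world."""
    next_position = position + ACTIONS[action]
    fallen = next_position < 0 or next_position >= SHELF_WIDTH
    return next_position, fallen


def rollout_score(position: int, plan: tuple[str, ...], target: int) -> int:
    """Simulate a plan; a fall is heavily penalised, otherwise score by closeness to target."""
    for action in plan:
        position, fallen = predict(position, action)
        if fallen:
            return -100
    return -abs(target - position)


def choose_action(position: int, target: int, horizon: int = 2) -> str:
    """Enumerate all plans up to the horizon in simulation and return the best first action."""
    best_plan = max(
        product(ACTIONS, repeat=horizon),
        key=lambda plan: rollout_score(position, plan, target),
    )
    return best_plan[0]


# From the left edge, the only two-step plan that reaches position 2 starts with a push right.
print(choose_action(position=0, target=2, horizon=2))
# Already at the right edge: the model predicts that one more push means a fall, so wait.
print(choose_action(position=2, target=2, horizon=1))
```

Exhaustive enumeration only works because the toy has three actions and a short horizon. Real systems replace it with learned dynamics and sampling or search, but the principle of evaluating consequences in the model first is the same.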

2026 is shaping up to be the year world models move from a compelling research direction to a practical engineering priority. DeepMind CEO Demis Hassabis has repeatedly identified world models as one of the central unsolved problems separating current AI from genuinely general intelligence. The EMBER architecture’s SNN memory layer is one approach to building persistent world state. FAOS’s ontology layer is another — more symbolic, less biological, but serving the same purpose of grounding the agent in a reliable model of its operating environment.

Continual learning — the ability to update a model’s knowledge from new experience without catastrophic forgetting — is the necessary complement. Current models are retrained periodically on static snapshots of the world. A model with genuine world-model capabilities needs to update that model in near-real-time. Neuromorphic architectures are particularly promising here: their sparse, event-driven computation is naturally suited to incremental updates rather than full retraining passes.

What This Week’s Developments Tell Us

Read together, the GPT-5.4 release, the EMBER and FAOS papers, and the broader research turn toward world models and continual learning sketch a coherent picture of where AI architecture is heading:

The dominant trend for the next 12–18 months won’t be “largest parameter count wins.” It will be “best cognitive architecture wins.” That means models with persistent, updatable memory. Models whose reasoning is grounded in explicit symbolic constraints, not just statistical patterns. Models that simulate futures rather than just interpolating from past training data. And models that can run efficiently on heterogeneous hardware — powerful GPUs for complex reasoning, neuromorphic chips for memory and fast pattern recognition — rather than requiring massive data center capacity for every inference.

The companies and research groups that get this architecture right will build systems that are not just more capable, but more reliable, more interpretable, and more deployable in the high-stakes domains — medicine, law, finance, infrastructure — where AI has so far struggled to earn full trust.

What to Watch

- Watch for OpenAI’s forthcoming GPT-6 release (pre-training completed March 24; release expected within weeks) to reveal whether the unified-brain approach of GPT-5.4 scales further, and whether memory and world-model features appear as first-class capabilities in the base model rather than add-ons.

- Watch for EMBER-style hybrid architectures to show up in agentic frameworks over the next quarter as neuromorphic hardware becomes more accessible.

- Track Gartner’s agentic AI cancellation metric as a real-world signal for whether neurosymbolic grounding solutions like FAOS are being adopted fast enough to save projects in regulated industries.

- Keep an eye on Intel Loihi 2 and IBM NorthPole deployment stories: when neuromorphic hardware shows up in production workloads, it will likely be paired with the cognitive architectures being developed in research today.

The big-model era isn’t over. But the big-architecture era has begun.

Sources

- GPT-5.4 Complete Guide 2026: Features, Pricing, Benchmarks — NxCode

- Introducing GPT-5.4 — OpenAI

- GPT-5.4 Benchmarks 2026: Scores, Rankings & Performance — BenchLM.ai

- EMBER: Autonomous Cognitive Behaviour from Learned Spiking Neural Network Dynamics in a Hybrid LLM Architecture — arXiv:2604.12167

- Ontology-Constrained Neural Reasoning in Enterprise Agentic Systems — arXiv:2604.00555

- Neurosymbolic AI: The Architecture Behind Safe, Scalable Enterprise Agentic Automation — Skan AI

- EY launches enterprise-scale agentic AI for audit — EY Global

- 2026 is Breakthrough Year for Reliable AI World Models and Continual Learning Prototypes — NextBigFuture

- The AI Research Landscape in 2026: From Agentic AI to Embodiment — Adaline Labs

- Neuromorphic Computing 2026: The Brain in a Chip — AI Tech Boss

- From LLM Reasoning to Autonomous AI Agents: A Comprehensive Review — arXiv:2504.19678
